Advatronix Nimbus 400 File Server Review

by Ganesh T S on August 12, 2015 8:00 AM EST

Posted in: NAS, storage server, Avoton, Advatronix

Setup Impressions and Platform Analysis

The Nimbus 400 chassis looks solid and well-built. The hands-on experience doesn't disappoint either. The ventilated front panel design with curved edges provides a premium look to the system.

The side panels have ventilation support at the bottom to allow the air to be drawn in by the fans placed inside. The PSU is mounted at the top end of the chassis and the motherboard at the bottom end. The rear panel shows a clean motherboard face plate with a serial port above the VGA port, a management LAN port with two USB 2.0 ports and two GbE LAN ports next to it. At the far end, we see two USB 3.0 ports from what is obviously a PCIe card.

Removing the side panels allows us to see two fixed SATA drives (Intel 3Gbps 120GB SSDs) mounted on either side of the 4-bay drive cage. The cables are tucked neatly. The first photo below shows the mounting of the fan directly beneath the drive cage. This fan pulls the air in from the front and down through the openings in the drive cage (visible in one of the gallery photos further down) and then pushes it out over the motherboard and through the ventilation holes in the rear as well as the sides. This creates airflow over the passive heatsink on top of the SoC. All in all, this looks like a very nifty design that ought to keep the temperatures down while also creating a relatively quiet system (the fan is not even visible from the outside).

The other side panel reveals the PCIe card that serves up the four USB 3.0 ports. We have two ports directly on the card, while the other two are enabled by headers (allowing Advatronix to place those ports on the front panel).

The drive trays don't give us any cause for complaint - they support both 2.5" and 3.5" drives, and have an intuitive removal mechanism with a distinctive blue tab. It would have been nice to have a screwless drive tray design, but the ones that come with the Nimbus 400 serve their purpose well. The gallery below provides some more photographs of the Nimbus 400 chassis and internals.

We had no trouble accessing the server using the management LAN interface. The gallery below shows some of the options available over IPMI.

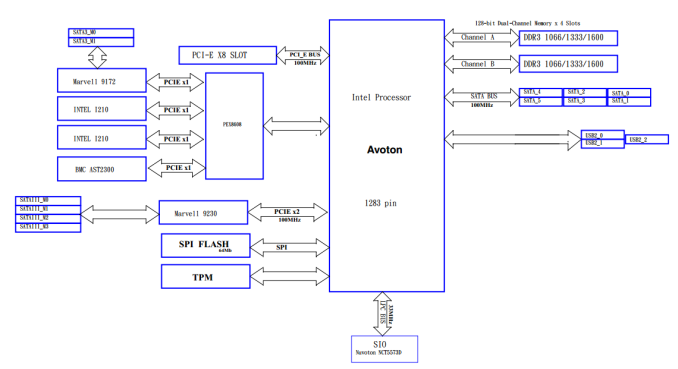

Our review unit came with Windows Server 2012 R2 Essentials pre-installed in a hardware RAID-1 volume. Even though the boot process showed the Advatronix logo, it didn't take long for us to find that the internal motherboard was the ASRock Rack C2550D4I. The diagram below shows the layout of the motherboard. It is the same as that of the ASRock Rack C2750D4I that we reviewed in a DIY configuration earlier.

The photographs above confirm that all four hot-swap bays come with SATA III 6Gbps connectors (two of which are direct from the SoC). The Marvell RAID 1 volume for the OS uses two of the four SATA III ports enabled by the Marvell SE9230. This setup ensures that there are no bottlenecks in accessing the storage drives. The PCIe slot is occupied by the USB 3.0 card. Despite the available x8 link, the card only uses one lane. This implies that the expected bandwidth numbers may not be attainable if two or more USB 3.0 devices are concurrently active.
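The single-lane limitation can be put into rough numbers. The sketch below is only a back-of-the-envelope estimate: it assumes the card links at PCIe 2.0 x1 (the review does not state the PCIe generation), uses raw signalling rates with 8b/10b line coding, and ignores protocol overhead, so real-world figures will be lower. The function names are illustrative only.

```python
# Rough bandwidth budget for a USB 3.0 card sitting on a single PCIe lane.
# Assumption (not stated in the review): the card links at PCIe 2.0 x1.

PCIE2_GTPS_PER_LANE = 5.0     # PCIe 2.0 raw signalling rate, GT/s per lane
USB3_GBPS_PER_PORT = 5.0      # USB 3.0 SuperSpeed raw signalling rate, Gbps
ENCODING_EFFICIENCY = 8 / 10  # both links use 8b/10b line coding


def payload_mbps(raw_gbps: float, lanes: int = 1) -> float:
    """Upper bound on payload rate in MB/s after 8b/10b coding overhead."""
    return raw_gbps * lanes * ENCODING_EFFICIENCY * 1000 / 8


def per_device_ceiling(active_devices: int, lanes: int = 1) -> float:
    """Best-case MB/s per USB 3.0 device when several share the PCIe link."""
    link = payload_mbps(PCIE2_GTPS_PER_LANE, lanes)
    port = payload_mbps(USB3_GBPS_PER_PORT)
    # A device can never exceed its own port rate, nor its share of the link.
    return min(port, link / active_devices)


if __name__ == "__main__":
    for n in (1, 2, 4):
        print(f"{n} active device(s): up to {per_device_ceiling(n):.0f} MB/s each")
```

Under these assumptions, one active device can in principle use the full lane, while two concurrently active devices have to split it, which is consistent with the concern raised above.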

18 Comments

lwatcdr - Wednesday, August 12, 2015 - link

I would really like to see some data using FreeNAS and Windows as well as Ubuntu. With the cost of drives so low, both of the NAS systems offer a huge amount of data for home or business.

wintermute000 - Thursday, August 13, 2015 - link

Nowhere near enough free RAM for FreeNAS. 1GB per TB is the recommendation. With modern drives at even RAIDZ1, you do the math.

wintermute000 - Thursday, August 13, 2015 - link

Sorry, no idea why but I read it as 4GB not 4x4GB, my bad.

Brutalizer - Sunday, August 16, 2015 - link

For ZFS, it is recommended to use 1GB RAM per 1TB disk space only if you use deduplication. If not, 4GB in total is enough. ZFS has a very efficient disk cache; if you only have 2GB RAM in your server you will not have any disk cache, which is no big deal actually. I used a 1GB RAM server with Solaris and ZFS for a year without problems. There is a lot of ignorance about ZFS. Try it out yourself with a 2-4GB RAM server and see that it works fine.

DanNeely - Wednesday, August 12, 2015 - link

Aside from the front panel having USB3, this case looks identical to one I bought from Chenbro a few years ago for my DIY NAS. I'd be a bit concerned about the quality. The plastic locking half of the handle on one of the drive sleds popped when I pulled it out a month or two ago to add an additional drive to my setup. The metal half was still usable to pull the drive out and it appears to be held in place securely from the rear; but the normal latch mechanism is obviously not working any more.

Anonymous Blowhard - Wednesday, August 12, 2015 - link

I'm concerned about the presence of a Marvell SATA controller + FreeBSD based OS like FreeNAS, since there have been many reports of drives performing poorly or dropping out of ZFS pools under high I/O.

bobbozzo - Friday, August 14, 2015 - link

Remove USB card and insert IBM 1015 RAID card. Hope cabling is compatible.

SirGCal - Wednesday, August 12, 2015 - link

I personally have two 8-drive systems, one RAID6 and one RAIDZ2, both running Ubuntu. Both of them also run swifter than this. Curious.

Ratman6161 - Wednesday, August 12, 2015 - link

Data is a little skewed because the ASRock is using an 8C 2750 vs the 4C in the Advatronix, so anything CPU sensitive is not really fair, particularly since the Advatronix is available with the 8C CPU. That said, I sort of doubt many people will be running DBs on this sort of machine. And the other tests seem to indicate that the faster CPU doesn't really buy you anything.

And...4 SSDs in a RAID 5? The cost per GB for doing things that way is very high compared to spinning disks, and if it's being used in a home setting the performance of the SSDs is not needed. Comparing prices online, I could get 4x WD Black 750 GB drives for almost $100 cheaper than the 4x 128 GB Vectors. Take a look at the read performance of the two units. Theoretically the ASRock with an 8-drive array should get better read speed than the Nimbus 400 with only 4. But it doesn't, leading me to believe that a lot of the SSDs' performance is wasted. Spinning disks are probably the most cost-effective way to go with these.

lwatcdr - Wednesday, August 12, 2015 - link

Encryption uses up a good amount of CPU time.