PCI-SIG Finalizes PCIe 5.0 Specification: x16 Slots to Reach 64GB/sec

by Ryan Smith on May 29, 2019 6:30 PM EST

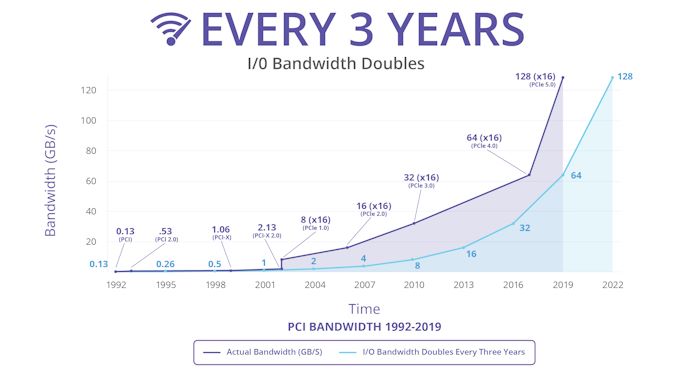

Following the long gap after the release of PCI Express 3.0 in 2010, the PCI Special Interest Group (PCI-SIG) set about a plan to speed up the development and release of successive PCIe standards. Following this plan, in late 2017 the group released PCIe 4.0, which doubled PCIe 3.0’s bandwidth. Now less than two years after PCIe 4.0 – and with the first hardware for that standard just landing now – the group is back again with the release of the PCIe 5.0 specification, which once again doubles the amount of bandwidth available over a PCI Express link.

Built on top of the PCIe 4.0 standard, the PCIe 5.0 standard is a relatively straightforward extension of 4.0. The latest standard doubles the transfer rate once again, to 32 GigaTransfers/second, which for practical purposes means PCIe slots can now reach anywhere from ~4GB/sec in each direction for a x1 slot up to ~64GB/sec for a x16 slot. For comparison’s sake, 4GB/sec is as much bandwidth as a PCIe 1.0 x16 slot, so over the last decade and a half, the number of lanes required to deliver that kind of bandwidth has been cut to 1/16th the original amount.
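As a rough sketch of where those figures come from, the Python snippet below converts a per-lane transfer rate into approximate one-direction bandwidth. The line-code efficiencies (8b/10b for PCIe 1.0 and 2.0, 128b/130b from 3.0 onward) and the transfer rates for the older generations are not stated in this article and are filled in here as assumptions from the published specifications; the names `PCIE_GENERATIONS` and `link_bandwidth_gb_per_sec` are purely illustrative.

```python
# Per-lane transfer rate (GT/s) and line-code efficiency for each generation.
# Assumption: values taken from the public PCIe specs, not from this article.
PCIE_GENERATIONS = {
    "1.0": (2.5, 8 / 10),      # 8b/10b encoding
    "2.0": (5.0, 8 / 10),      # 8b/10b encoding
    "3.0": (8.0, 128 / 130),   # 128b/130b encoding
    "4.0": (16.0, 128 / 130),
    "5.0": (32.0, 128 / 130),
}


def link_bandwidth_gb_per_sec(generation, lanes):
    """Approximate one-direction bandwidth in GB/sec (1 GB = 10^9 bytes)."""
    transfer_rate, efficiency = PCIE_GENERATIONS[generation]
    # Each transfer moves one bit per lane; divide by 8 to convert bits to bytes.
    return transfer_rate * efficiency * lanes / 8


for generation in PCIE_GENERATIONS:
    row = [round(link_bandwidth_gb_per_sec(generation, w), 2) for w in (1, 2, 4, 8, 16)]
    print(f"PCIe {generation}: {row}")
```

Under these assumptions a PCIe 5.0 x16 link works out to roughly 63GB/sec, which is why the headline figures carry a "~". Packet and protocol overhead, which this sketch ignores, lowers the usable figure further.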

The fastest standard on the PCI-SIG roadmap for now, PCIe 5.0’s higher transfer rates will allow vendors to rebalance future designs between total bandwidth and simplicity by working with fewer lanes. High-bandwidth applications will of course go for everything they can get with a full x16 link, while slower hardware such as 40GigE and SSDs can be implemented using fewer lanes. PCIe 5.0’s physical layer is also going to be the cornerstone of other interconnects in the future; in particular, Intel has announced that their upcoming Compute Express Link (CXL) cache-coherent interconnect will be built on top of PCIe 5.0.
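To make the lane-count trade-off concrete, here is a minimal sketch that finds the narrowest standard link width able to carry a given one-direction data rate. It assumes 128b/130b encoding for PCIe 3.0 and later, treats 40GigE as a flat 5GB/sec (40 Gbit/sec divided by 8), and ignores packet overhead, so real designs may need more headroom. The helper name `min_link_width` is hypothetical.

```python
# Per-lane transfer rates in GT/s. Assumption: all three generations use
# 128b/130b encoding, per the public specs rather than this article.
TRANSFER_RATE_GT = {"3.0": 8.0, "4.0": 16.0, "5.0": 32.0}
STANDARD_WIDTHS = (1, 2, 4, 8, 16)


def min_link_width(required_gb_per_sec, generation):
    """Return the narrowest link width meeting the target, or None if x16 falls short."""
    per_lane = TRANSFER_RATE_GT[generation] * (128 / 130) / 8  # GB/sec per lane
    for width in STANDARD_WIDTHS:
        if width * per_lane >= required_gb_per_sec:
            return width
    return None


FORTY_GIGE_GB_PER_SEC = 40 / 8  # 5 GB/sec in each direction

for generation in TRANSFER_RATE_GT:
    print(f"PCIe {generation}: x{min_link_width(FORTY_GIGE_GB_PER_SEC, generation)}")
# prints:
# PCIe 3.0: x8
# PCIe 4.0: x4
# PCIe 5.0: x2
```

Under these assumptions, a 40GigE adapter that needs a x8 link on PCIe 3.0 would fit in a x2 link on PCIe 5.0.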

PCI Express Bandwidth (Full Duplex)

| Slot Width | PCIe 1.0 (2003) | PCIe 2.0 (2007) | PCIe 3.0 (2010) | PCIe 4.0 (2017) | PCIe 5.0 (2019) |
|---|---|---|---|---|---|
| x1 | 0.25GB/sec | 0.5GB/sec | ~1GB/sec | ~2GB/sec | ~4GB/sec |
| x2 | 0.5GB/sec | 1GB/sec | ~2GB/sec | ~4GB/sec | ~8GB/sec |
| x4 | 1GB/sec | 2GB/sec | ~4GB/sec | ~8GB/sec | ~16GB/sec |
| x8 | 2GB/sec | 4GB/sec | ~8GB/sec | ~16GB/sec | ~32GB/sec |
| x16 | 4GB/sec | 8GB/sec | ~16GB/sec | ~32GB/sec | ~64GB/sec |

Meanwhile the big question, of course, is when we can expect to see PCIe 5.0 start showing up in products. The additional complexity of PCIe 5.0’s higher signaling rate aside, even with PCIe 4.0’s protracted development period, we’re only now seeing 4.0 gear start showing up in server products; meanwhile the first consumer gear technically hasn’t started shipping yet. So even with the quick turnaround time on PCIe 5.0 development, I’m not expecting to see 5.0 show up until 2021 at the earliest – and possibly later than that depending on just what that complexity means for hardware costs.

Ultimately, the PCI-SIG’s annual developer conference is taking place in just a few weeks, on June 18th, at which point we should get some better insight as to when the SIG members expect to finish developing and start shipping their first PCIe 5.0 products.

Source: PCI-SIG

55 Comments

Hxx - Wednesday, May 29, 2019

Will 10 duct-taped 2080 Tis bottleneck the PCIe 5.0 bandwidth limit?

PeachNCream - Wednesday, May 29, 2019

Probably nothing in the next couple of generations of GPUs will benefit much from the additional bandwidth, but if it can be done without a drastic cost increase, why not, as it may become relevant in the future. Devices that are currently using, say, 4x could get by just fine on 2x and free up more PCIe lanes for other components, or reduce the overall need and maybe save cost by reducing PCB wiring complexity.

mode_13h - Wednesday, May 29, 2019

You're missing the point. This is not about your consumer GPUs.

It's primarily about networking, storage, HPC, and multi-GPU deep learning.

For consumers, maybe M.2 drives will get replaced by something with only half the lanes. Perhaps you'll also start seeing more CPUs and GPUs with only x8 interfaces (and corresponding cost savings).

StevenD - Thursday, May 30, 2019

The question is fair. We've had 16x gen3 for a while now, but only recently with the 1080 Ti and 2080 Ti was there any real need to use anything above 8x gen3. And this causes a serious issue for consumer CPUs, since with NVMe you start needing more lanes. Basically you could reach a bottleneck with a GPU and NVMe combo.

You always think you're not gonna need it, but right now there are more than enough use cases that show that gen4 for consumers should have been last year's news. By the time PC parts come out we're going to be well past gen3 being an acceptable compromise.

nevcairiel - Thursday, May 30, 2019

With NVMe getting more widespread, I would rather see an increase in CPU lanes to cover at least one 4x device in addition to the 16x GPU on mainstream platforms. You know, like AMD's Ryzen. That's one shortcoming I hope Intel really fixes.

That way you can run a full x16 device and one SSD. And if you need more, you can split 8 off of the GPU and run 2 more full-bandwidth SSDs.

This would overall be more future proof, even if switching to PCIe4 or 5 would hold us off for a while.

mode_13h - Thursday, May 30, 2019

Um, what on earth are you doing that you need so much storage bandwidth in a _desktop_?

cyberbug - Sunday, June 2, 2019

Why are people under the impression NVMe is shared with the PCIe x16 slot? This is simply not true.

AM4 for example has:

- 16 dedicated lanes from the CPU to the PCIe x16 slot

- 4 dedicated lanes from the CPU to the NVMe slot

- 4 dedicated lanes from the CPU to the chipset...

mode_13h - Thursday, May 30, 2019

In case you didn't notice, neither this article nor that comment was about Gen 4.

compvter - Thursday, May 30, 2019

^this. I really fail to understand why people think a GPU would need more bandwidth even though there have been multiple benchmarks showing that this is not the case. As long as the CPU/motherboard keeps feeding data at the rate the GPU requires, it won't affect GPU performance, and that point is relatively low... like mining crypto was perfectly fine with a 1x lane because there is not that much traffic between the GPU and the rest of the system.

I would correct the "maybe M.2 drives will get replaced" assumption, since M.2 is essentially using (depending on solution) either 2x or 4x PCIe lanes, so having them use a standard that doubles transfer speed doubles theoretical M.2 speed as well. So there is no need to replace M.2; just use the newer PCIe standard and we get the benefits of having the same standard on laptops, desktops and servers. If you want something beefier, you get a PCIe card like one of those Optane 905P drives, or a PCIe add-on card where you can stack M.2 drives and set them up in RAID 0 mode. Some YouTuber already made a video about this, and I think this is the point AMD should be making in their marketing videos and a thing that deserves more investigation... like why games don't really load faster if you have 10x higher bandwidth to your data and extremely good latencies. There is some hidden bottleneck that should be addressed ASAP.

mode_13h - Thursday, May 30, 2019

Why do you need 16 GB/sec storage, in a desktop or laptop? That's what doesn't make sense to me about transitioning M.2 to PCIe 5.0. I think the mainstream PC market will prefer the cost savings of reducing lane count, and be happy with a x2 (or even x1) interface.