SeaMicro Announces SM10000 Server with 512 Atom CPUs and Low Power Consumption

by Anand Lal Shimpi on June 14, 2010 1:38 PM EST - Posted in IT Computing, CPUs, SeaMicro

Two years ago, when I first covered Intel’s Atom architecture, I proposed that Moore’s Law had paved the way for two things: 1) ridiculously fast microprocessors, and 2) fast enough microprocessors.

The first category is used to push the bleeding edge of software. Everything from scientific computation to 3D gaming. If it’d never been done before, Moore’s Law enabled companies like AMD, Intel and NVIDIA to build the microprocessors we needed to make it happen.

The second category is a more recent development. If you don’t need the compute power, Moore’s Law enabled the creation of smaller, cheaper, more power efficient microprocessors to deliver performance that’s good enough. These types of chips are found in everything from netbooks to smartphones.

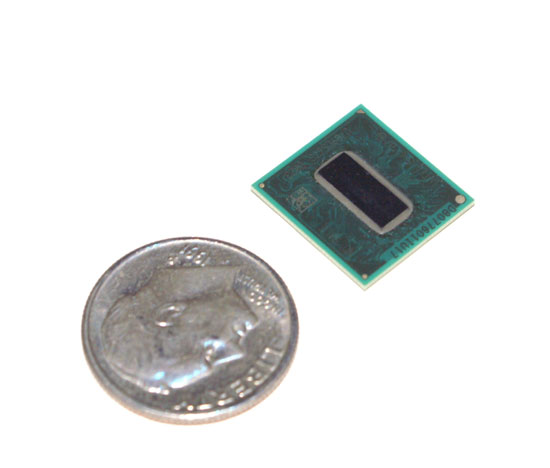

Intel's Atom (Silverthorne) Processor

A number of folks are arguing that the same approach can be applied to server workloads. They argue that the majority of the time, the servers that drive your favorite websites or cloud services are idle, at least from the CPU perspective. For servers that aren’t virtualized, this is largely true. You don’t build servers for average load; you build them to make sure they can withstand maximum load. The unfortunate result is that when these servers are running during periods of low traffic, they aren’t very power efficient.

For a small operation like AnandTech this isn’t much of a problem. But on a larger scale, it adds up. Data centers are easily power constrained. High density servers give us dozens of microprocessor cores in the space of several rack units. That’s great for compute, but terrible for power consumption.

Also keep in mind that even the slowest servers you can buy today are still pretty powerful. In many cases you need physical box redundancy but not the added horsepower of having more hardware. Add in live fail-over support to minimize downtime and you’ve got even more wasted power.

Today if you don’t need the performance a multi-core Xeon can offer you, but you need tons of physical servers, there are very few options. You can stick with a simple single core server but then your power consumption even at idle is still in the range of dozens of watts. You could make the argument that as CPUs get more powerful, there’s room for a category of “fast enough” servers. And thankfully we already have a processor that’s “fast enough”. The Atom.

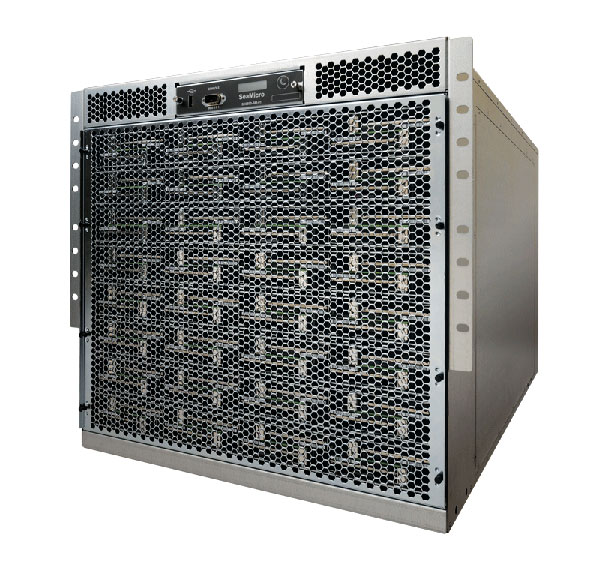

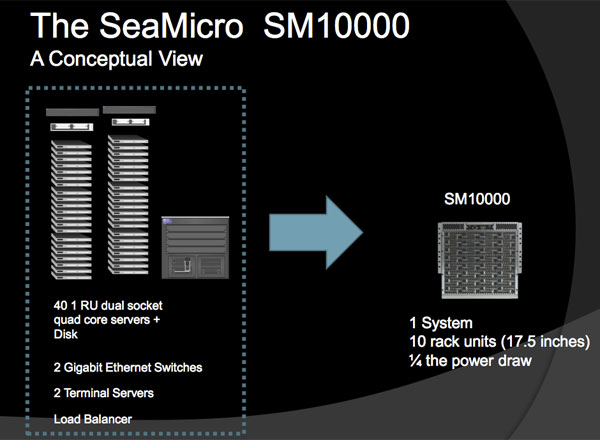

Take 512 Atom based servers, cram them into a box that consumes 2kW of power, give it boatloads of networking and you’ve got the SM10000 by SeaMicro. This $139K box is designed to replace dozens of quad core Xeon/Opteron boxes that remain idle most of the time. According to SeaMicro if your business model is that you’re giving something away for free on the Internet, then the SM10000 might be for you.
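To put those headline numbers in per-server terms, here is a rough back-of-the-envelope sketch. It assumes the 2kW and $139K figures cover the whole box (networking and the rest of the chassis included) and simply divides them evenly across the 512 Atom servers; the constant and function names are illustrative only.

```python
# Back-of-the-envelope per-server figures for the SM10000.
# Assumption: the quoted 2kW and $139K apply to the whole box and are
# split evenly across all 512 Atom servers.
NODES = 512              # Atom servers per SM10000 (from the article)
BOX_POWER_W = 2000       # 2kW for the whole box (from the article)
BOX_PRICE_USD = 139_000  # $139K for the whole box (from the article)


def per_node(total, nodes=NODES):
    """Split a whole-box figure evenly across the Atom servers."""
    return total / nodes


print(f"Power per server: {per_node(BOX_POWER_W):.2f} W")
print(f"Price per server: ${per_node(BOX_PRICE_USD):.2f}")
```

Under those assumptions each Atom server works out to just under 4W and a bit over $270, compared with the dozens of watts at idle mentioned above for a simple single core server.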

SeaMicro’s CEO comes from the network field, while its CTO is a former Sun and AMD microprocessor architect (he was apparently one of five chief architects on AMD’s Bulldozer core). The company not only makes the 512 Atom server but also a custom ASIC inside that makes the technology work.

The SM philosophy is simple: you don’t take a space shuttle to the grocery store. If you don’t need such a beefy server for your workload, why continue to use one?

53 Comments

datasegment - Monday, June 14, 2010 - link

Looks to me like the savings go up, but the amount of power used steadily *increases* when CPU usage drops... I'm guessing the charts and titles are mismatched?

JarredWalton - Monday, June 14, 2010 - link

No... it's a poorly made marketing chart IMO. Look at the number of systems listed:

1000 Dell vs. 40 SeaMicro = $1084110 ($52.94 per "server")

2000 Dell vs. 80 SeaMicro = $1638120 ($39.99 per "server")

4000 Dell vs. 160 SeaMicro = $2718720 ($33.19 per "server")

Based on that, the savings per "server" actually drops at lower workloads -- that's with 512 Atom chips in each SeaMicro server. It's also a bit of a laugh that 20480 Atom chips are "equal" to 8000 Nehalem cores (dual socket + quad-core, plus Hyper-Threading) under 100% load. Although they're probably using the slowest/cheapest E5502 CPU as a comparison point (dual-core, no HTT). Under 100% load, the Nehalem cores are far more useful than Atom.
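The per-"server" figures quoted in the comment above can be reproduced with a short sketch. It assumes that "server" here means one Atom node, with 512 nodes per SeaMicro box; the dollar amounts are copied from the comment and the function name is made up for illustration.

```python
# Reproduce the per-"server" savings quoted in the comment above.
# Assumption: a "server" is one Atom node, 512 per SeaMicro box.
ATOMS_PER_BOX = 512

# (Dell servers, SeaMicro boxes, claimed savings in USD), as quoted
scenarios = [
    (1000, 40, 1_084_110),
    (2000, 80, 1_638_120),
    (4000, 160, 2_718_720),
]


def savings_per_node(seamicro_boxes, savings_usd):
    """Claimed savings divided by the total number of Atom nodes."""
    return savings_usd / (seamicro_boxes * ATOMS_PER_BOX)


for dell, boxes, savings in scenarios:
    nodes = boxes * ATOMS_PER_BOX
    print(f"{dell} Dell vs. {boxes} SeaMicro ({nodes} Atom nodes): "
          f"${savings_per_node(boxes, savings):.2f} per node")
```

The first row also accounts for the 20480 Atom chips mentioned in the comment: 40 boxes at 512 chips each.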

cdillon - Monday, June 14, 2010 - link

Virtualization has already solved the problem they've attempted to solve, and I think virtualization does a better job at it. For example, I'm going to be implementing desktop virtualization at my workplace by next year, and have been looking at blade systems to house the virtualization hosts. You can pack at least twice the computing power into the same rack space, and use less than twice the wall-power (using low-power Xeon or Opteron CPUs and low-power DIMMs). You actually have an opportunity to SAVE EVEN MORE power because systems like VMware vSphere can pack idle virtual machines onto fewer hosts and can dynamically power on and off entire hosts (blades) as demand requires it. Because the SM10000 does non-virtualized (other than I/O) 1:1 computing, there's no opportunity to power-down completely idle CPU nodes, yet keep the software "running" for when that odd network request comes in and needs attention. Virtualization does that.

PopcornMachine - Monday, June 14, 2010 - link

Couldn't you also use this box as a VMware server, and then save even more energy?

cdillon - Monday, June 14, 2010 - link

No, for several reasons, but mainly the licensing costs for vSphere for 512 CPU sockets. You wouldn't want to waste a fixed per-socket licensing cost on such slow, single-core CPUs. You want the best performance/dollar ratio, and that generally means as many cores as you can fit per socket. With free virtualization solutions that wouldn't really matter, but there are no free virtualization solutions that I'd want to manage 512 individual VM hosts with. Remember, the SM10000 is NOT a single 512-core server. It's 512 independent 1-core servers. Not only that, but each single Atom core is going to have a hard time running more than one or two VM guests at a time anyway, so trying to virtualize an Atom seems rather pointless.

Sorry if this is a double post, first time I tried to post it just hung for about 10 minutes until I gave up on it and tried again...

PopcornMachine - Tuesday, June 15, 2010 - link

In the OS section, the article mentions running Windows under a VM. So sounds like it can handle VMware to me.

On the other hand, if the box does not let the cores work together in some type of cluster, which I assumed was the point of it, then I don't see the point of it. Just a bunch of cores too weak to power a netbook properly?

fr500 - Tuesday, June 15, 2010 - link

You got 512 cores, why would you go for virtualization?

beginner99 - Tuesday, June 15, 2010 - link

yeah not to mention other advantages of virtualisation especially when performing upgrades on these web applications.

Or single-threaded performance. Really only useful if your apps never ever do anything moderately cpu intensive.

PopcornMachine - Monday, June 14, 2010 - link

When Intel introduced the Atom, this was what I thought would be one of its main uses.

Cluster a bunch together. Low power, scalable, cheap. No brainer. Makes more sense than using them in underpowered netbooks.

How come it took someone this long to do it?

It would be nice to see some benchmarks to be sure, though.

moozoo - Monday, June 14, 2010 - link

I'd love to see something like this made using beefed-up Tegra 2 type chipsets supporting OpenCL.