Supermicro Ultra SYS-120U-TNR Review: Testing Dual 10nm Ice Lake Xeon in 1U

by Dr. Ian Cutress on July 22, 2021 9:00 AM EST

BIOS, Software, BMC

The networked management for the Supermicro SYS-120U-TNR uses the latest interface from Supermicro through the ASPEED AST2600, which is given an IP by DHCP upon connection. Interestingly, trying to access the interface did not work with Chrome at all: after logging in, it would just freeze on the system page while trying to get basic system details. In the end I had to use non-Chromium-based Edge. On top of that, both Chrome and Edge warned that the certificate for the BMC webpage was invalid, which meant jumping through a hoop to access it.

The username and password to access the system are no longer the default admin/admin or admin/password: due to the 2018 law in California known as SB-327, all IoT devices (including servers) that have administrator access to settings and configurations must have unique passwords. The username for us was still ADMIN; however, the password was found on a pull-out tab on the front of the server, or alternatively on the inside of the double-width PCIe slot inside the chassis.

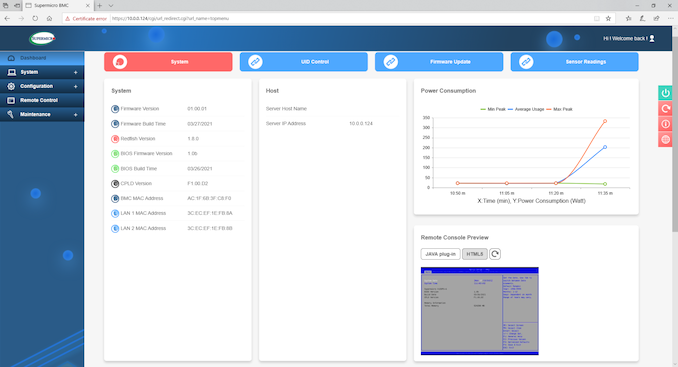

The Supermicro interface is as detailed as a management interface needs to be, with this main dashboard showcasing firmware versions, power consumption, the remote console, and recent system messages and actions.

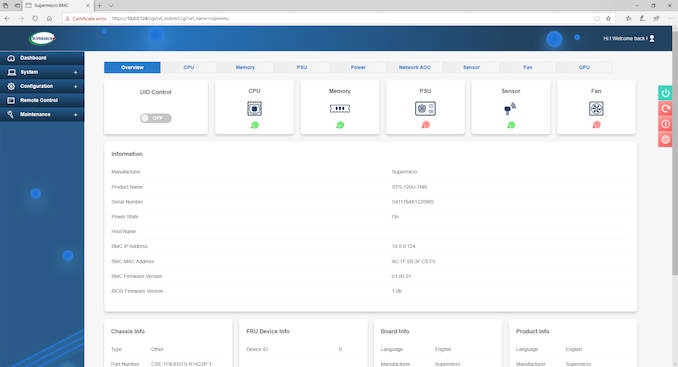

The System tab states a lot of similar information to the dashboard, with links to the separate component detection of the server.

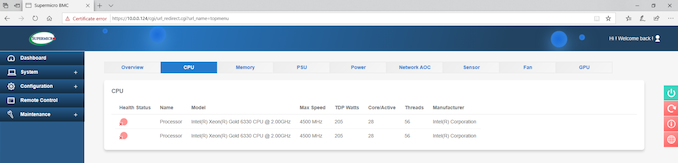

The CPUs are both detected here, and although it says they have a base frequency of 2.00 GHz (actually 2.6 GHz) and a turbo frequency of 4.5 GHz (actually 3.1 GHz), we measure the correct numbers in the operating system.

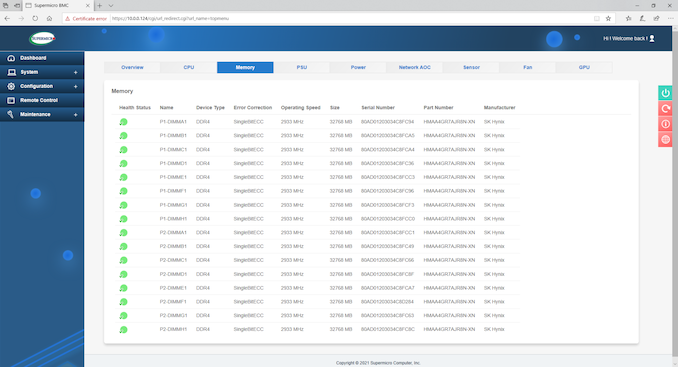

All sixteen memory modules are detected, with ECC enabled, for a total of 512 GB.

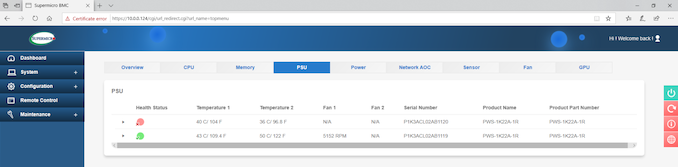

The power supplies are detected as well. In this image we only have one of the 1200W models connected to the mains, but the interface still shows the thermal sensor on the power supply that is not connected.

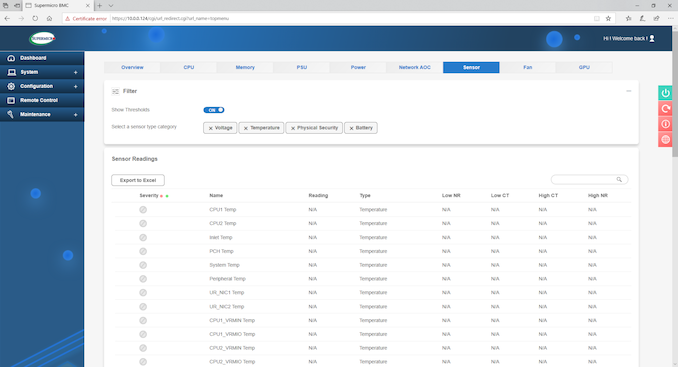

In our system, the sensor module didn’t seem to read anything from the hardware; however, we did run the fans at full speed regardless.

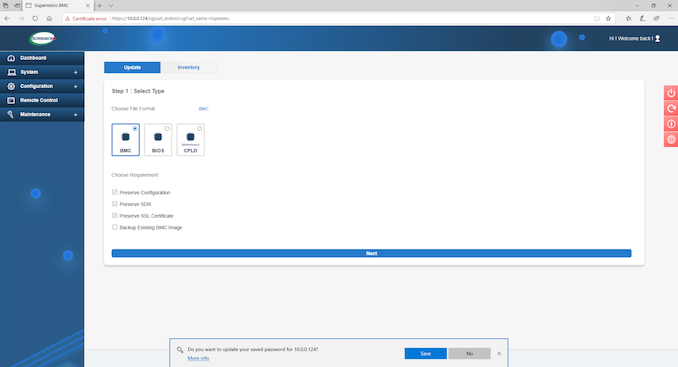

Updating the BMC or BIOS is relatively easy through the update interface when you have a file to hand. The system also keeps track of when it was updated and with what version firmware.

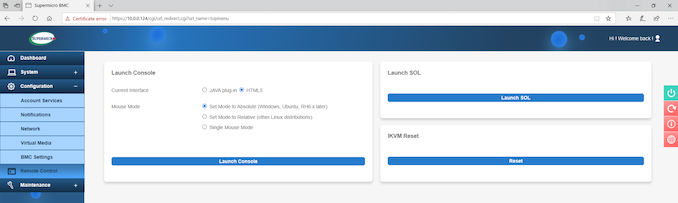

For remote control, both HTML5 and Java are supported; however, we could not get the HTML5 interface to work during our testing. Java worked well, and is likely kept here for legacy and fallback support despite Java not being recommended.

Overall the management options were as standard as we normally expect from this sort of system. On the plus side it looks a lot nicer than some of the base AMI / older interfaces we still encounter from time to time, but on the minus side I’m still unsure why it wouldn’t work in Chrome.

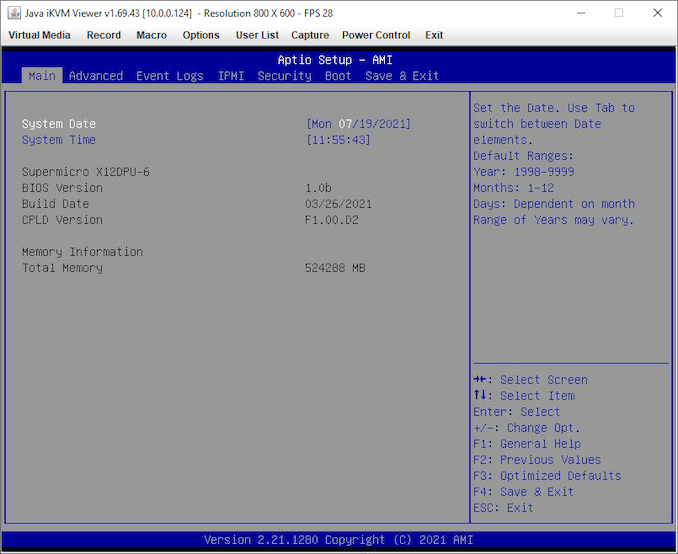

BIOS

On the BIOS/UEFI side of the equation, we get a simple blue and grey interface from AMI which runs as standard on enterprise systems. The X12DPU-6 motherboard we are using has BIOS version 1.0b and a total of 512 GB of memory detected.

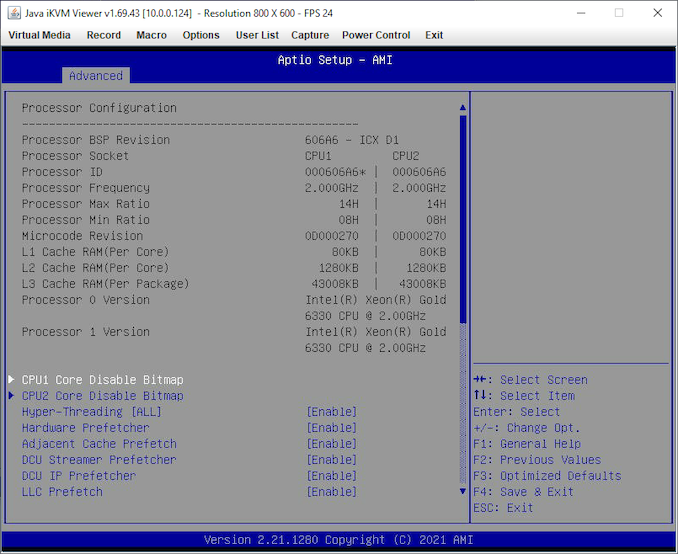

In the Advanced CPU section, it showcases that we have two Xeon Gold 6330 processors, with the D1 stepping. Similar to the BMC, it says here a 2.0 GHz base frequency (Intel’s official specifications state 2.5 GHz) but everything else looks in order. Individual cores can be disabled with the bitmaps as shown here:

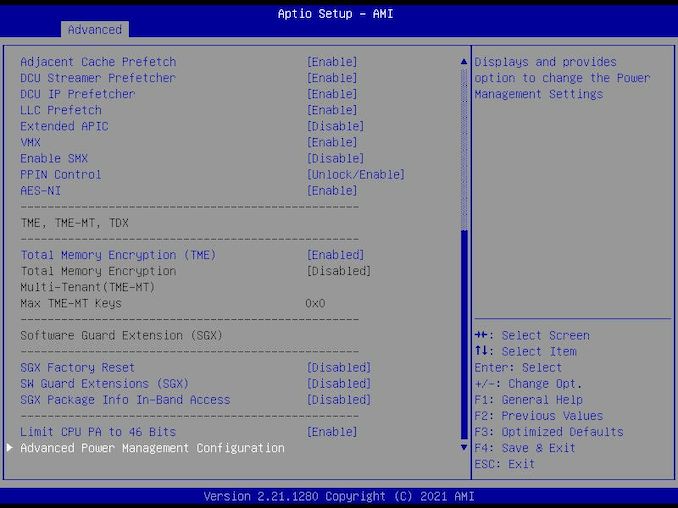
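As a rough illustration of how a core-disable bitmap of this kind can be put together, the sketch below builds a mask in which bit N set means core N is disabled. That bit ordering, the function names, and the default of 28 cores per socket are assumptions made for the example; the BIOS screen itself is the authority on the format it expects.

```python
def core_disable_mask(disabled_cores, total_cores=28):
    """Return an integer bitmap with one bit set per disabled core.

    Assumption: bit N corresponds to core N (lowest bit = core 0).
    total_cores is a hypothetical default, adjust it to the CPU in use.
    """
    mask = 0
    for core in disabled_cores:
        if not 0 <= core < total_cores:
            raise ValueError(f"core {core} is outside 0..{total_cores - 1}")
        mask |= 1 << core
    return mask


def format_mask(mask, total_cores=28):
    """Format the bitmap as zero-padded hex, one hex digit per four cores."""
    width = (total_cores + 3) // 4
    return f"0x{mask:0{width}X}"
```

For example, `format_mask(core_disable_mask([0, 1, 3]))` yields a hex string with the three lowest relevant bits set, which could then be typed into a field that accepts a hex bitmap.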

One of the new features of the Xeon Gold processors is SGX enclaves, which require TME to be enabled.

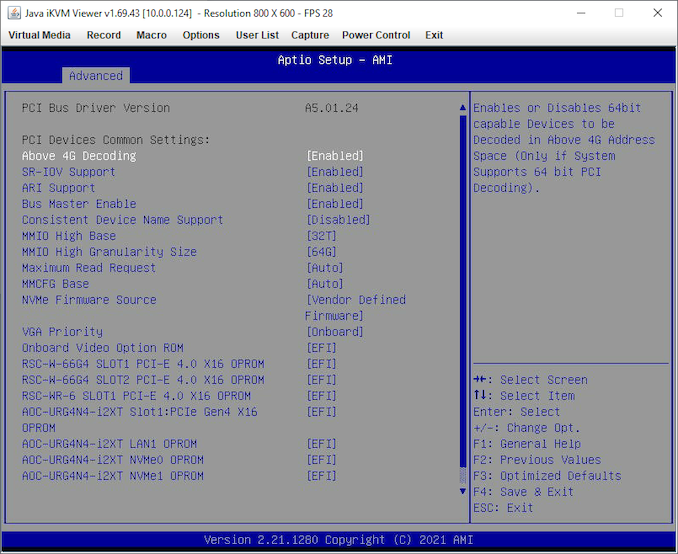

In the PCIe section, Above 4G Decoding was enabled by default (often disabled by default on consumer platforms), and the system allows a selection of NVMe firmware such that it can be software driven rather than vendor firmware driven.

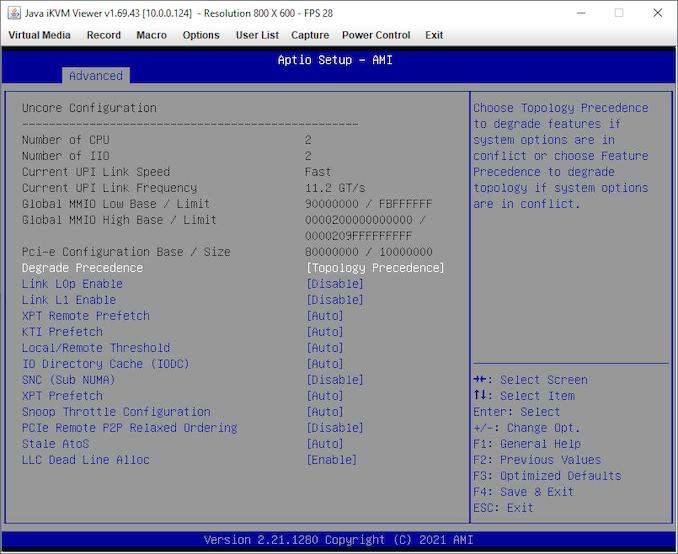

For the uncore / mesh sub-system, we can see that this system is configured to 11.2 GT/s speed UPI links (one of the upgrades over the previous generation), but there are also a number of options here that could affect the system based on use case. Customers can select the system to prioritize topologically at the expense of feature performance (e.g. cores over IO), or vice versa. Similarly, a user can select SNC2 (Sub-NUMA Clustering) to partition the processor into two hemispheres for lower latency memory accesses at the expense of immediate bandwidth. There is also an option to throttle cache snooping to manage power based on what sort of workloads the system would end up running.

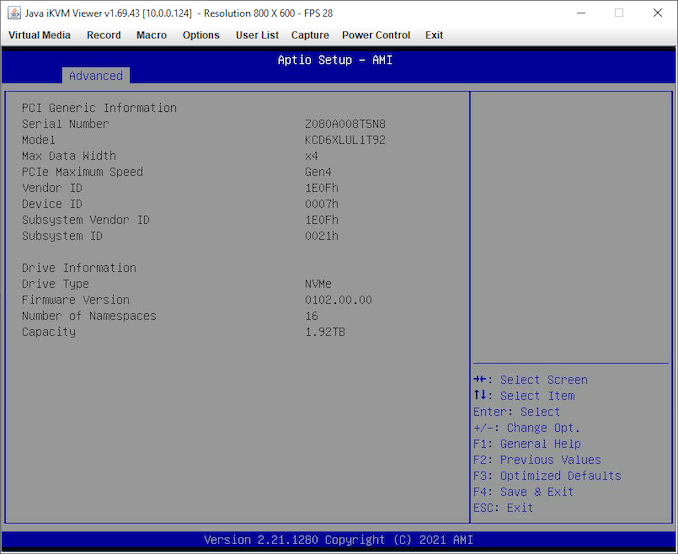
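One way to confirm from the operating system whether an SNC2 setting took effect is to count NUMA nodes: on a dual-socket system, Linux would normally report two nodes with SNC off and four with SNC2 on. The sketch below assumes a Linux host exposing the standard sysfs layout under `/sys/devices/system/node`; the function names are made up for this example and nothing here was run on the review system.

```python
import os
import re


def parse_cpulist(text):
    """Expand a sysfs cpulist string such as '0-3,8-11' into a list of CPU ids."""
    cpus = []
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            low, high = part.split("-")
            cpus.extend(range(int(low), int(high) + 1))
        else:
            cpus.append(int(part))
    return cpus


def numa_nodes(sysfs_root="/sys/devices/system/node"):
    """Map NUMA node id -> list of CPU ids; empty dict if sysfs is unavailable."""
    nodes = {}
    if not os.path.isdir(sysfs_root):
        return nodes
    for entry in sorted(os.listdir(sysfs_root)):
        match = re.fullmatch(r"node(\d+)", entry)
        if not match:
            continue
        with open(os.path.join(sysfs_root, entry, "cpulist")) as handle:
            nodes[int(match.group(1))] = parse_cpulist(handle.read())
    return nodes
```

Calling `len(numa_nodes())` before and after changing the BIOS option gives a quick sanity check that the partitioning is visible to software.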

All the NVMe slots in the front panel of the system can be PCIe 4.0 x4 enabled, and there’s an option to check that here as well.

Other options in the BIOS include IPMI network settings, event logs, and traditional BIOS security.


53 Comments

mode_13h - Friday, July 23, 2021 - link

> It's a real-world workload

Except it's not. It started out that way, but then he gave it to Intel to optimize the AVX-512 path. So, the AVX-512 is optimized by "a world expert, according to Jim Keller" (to paraphrase Ian). And yet, the AVX-512 results are put up against the AVX2 results, on AMD CPUs, as if they're both optimized to the same degree and that just happens to be the *actual* difference in performance.

As an excuse for this, Ian points out that he gave AMD the same opportunity, but they haven't taken him up on it. Well, that still doesn't make it a fair representation of AVX2 vs. AVX-512 performance.

> I'm not sure the point should be to microoptimize it to the ends of the world,

> or it wouldn't be a realistic workload any longer.

A lot of workloads are heavily-optimized. This includes kernels in HPC programs, many games, and the most popular video compression engines. Probably a lot of stuff in SPEC Bench has been optimized a high degree. And let's not even start on AI frameworks.

All I want to do is see if people can close the gap between AVX2 and AVX-512 somewhat, or at least explain why it's as big as it is. Maybe there's some magic AVX-512 instructions that have no equivalent in AVX2, which turn out to be huge wins. It would at least be nice to know.

Plus, there's my point about optimizing it for ARM NEON and SVE, so it could be used in a somewhat apples-to-apples comparison with ARM processors.

GeoffreyA - Friday, July 23, 2021 - link

I agree it's unfair. On the "non-AVX" test, the Ryzens go to the top. On one hand, the test shows how much faster an AVX512 processor can be. On the other hand, it's unfair that some are running the AVX2 path and some the AVX512, and the results are put together. (Reminiscent of the Athlon XP's SSE not being used in some benchmarks.)

Others, I don't know, but in a thing like HEVC encoding, the gains aren't all that much for these instructions. It leads me to feel the 3DPM test is gaining disproportionately from AVX512, in a narrow sort of way, and that's being magnified. The result shows, "Look at how fast these AVX512 CPUs are, leaving their AMD counterparts in the dust."

https://networkbuilders.intel.com/docs/acceleratin...

https://software.intel.com/content/www/us/en/devel...

mode_13h - Saturday, July 24, 2021 - link

> it's unfair that some are running the AVX2 path and some the AVX512, and the results are put together.

That's a reasonable position, but I'm not even going that far. I'm okay with putting up AVX2 against AVX-512, but I think they need to be optimized somewhat comparably. That way, the difference you see only shows the true difference in hardware capability, and not also the (unknown) difference in the level of code optimization.

> "Look at how fast these AVX512 CPUs are, leaving their AMD counterparts in the dust."

It does have a few specialized instructions that have no AVX2 counterpart. And if you're doing something they were specifically designed to accelerate, then you can get a legit order of magnitude speedup. And it's not impossible 3DPM hit one of those cases. But, in order to know, Ian really needs to disclose the code.

GeoffreyA - Saturday, July 24, 2021 - link

"it's not impossible 3DPM hit one of those cases"

Possible, even likely. And if so, it's a bit of an unbalanced picture. It will be interesting to see what happens when AMD adds support.

mode_13h - Sunday, July 25, 2021 - link

> Possible, even likely.

We don't know, so don't presume. There are some obvious things you can get wrong that sabotage performance. Cache thrashing, pointer aliasing, and false sharing, just to name a few. Probably a lot of the speedup, in the AVX-512 case, was fixing just such things.

Spunjji - Monday, July 26, 2021 - link

@GeoffreyA - I would argue that it wouldn't necessarily be unbalanced if the benchmark benefits particularly heavily from AVX-512, simply because there are going to be workloads like that out there, and the people who have them are probably going to be aware of that to some extent.

With comparable optimisation between the AVX2 and AVX-512 code paths, it could still be a helpful example of a best-case for the feature, for those few people for whom it's going to work out like that.

For everyone else, we could definitely do with more generalised real-world examples (like x264) where the AVX-512 part of the workload isn't necessarily dominant.

GeoffreyA - Wednesday, July 28, 2021 - link

That's a good way of looking at it, Spunjji. You're right. Hopefully we can get those balanced, real-world examples in addition.

GeoffreyA - Saturday, July 24, 2021 - link

And for a best AVX2 vs. best AVX512, I think we probably need some bigger test, something like encoding I would think. I could be wrong, but remember reading that x264 had AVX512 support. I doubt whether it's been optimised to the fullest, though. And most of the critical work on x264 was done a long time ago.

GeoffreyA - Sunday, July 25, 2021 - link

My mistake. x265.

mode_13h - Sunday, July 25, 2021 - link

Yeah, some of the rendering and encoding benchmarks use it.