The Intel Xe-LP GPU Architecture Deep Dive: Building Up The Next Generation

by Ryan Smith on August 13, 2020 9:00 AM EST - Posted in

- GPUs

- Intel

- Tiger Lake

- Xe

- Xe-LP

- DG1

- Intel Arch Day 2020

- SG1

As part of today’s Intel Architecture Day, Intel is devoting a good bit of its time to talking about the company’s GPU architecture plans. Though not a shy spot for Intel, per se, the company is still best known for its CPU cores, and the amount of marketing attention it has put into the graphics side of its business has always been a bit weaker as a result. But, like so many other things at Intel, times are changing – not only is Intel devoting ever more die real estate to GPUs, but over the next two years they are transitioning into a true third player in the PC GPU space, launching their first new discrete GPU in several generations.

As part of Intel’s previously-announced Xe GPU architecture, the company intends to become a top-to-bottom GPU provider. This means offering discrete and integrated GPUs for everything from datacenters and HPC clusters to high-end gaming machines and laptops. This is a massive expansion for a company that for the last decade has only been offering integrated GPUs, and one that has required a lot of engineering to get here. But, at long last, after a couple of years of talking up Xe and laying out their vision, Xe is about to become a reality for Intel’s customers.

While we’ll focus on different Xe-related announcements in separate articles – with this one focusing on Xe-LP – let’s quickly recap the state of Intel’s Xe plans, what’s new as of today, and where Xe-LP fits into the bigger picture.

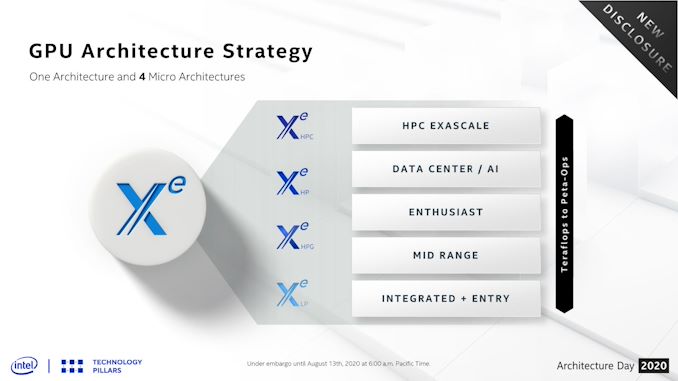

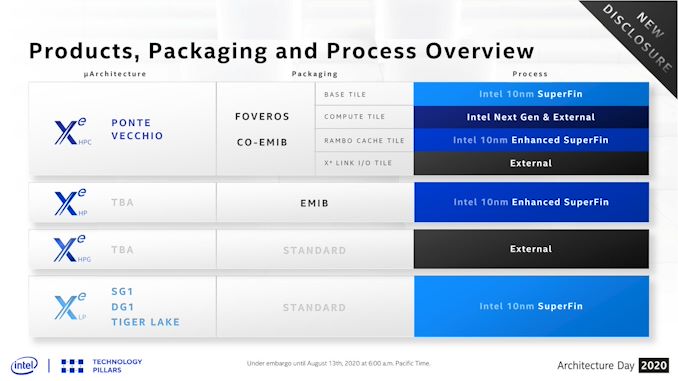

When first announced back in 2018, Intel laid out plans for a single GPU architecture, Xe, comprised of three different microarchitectures: Xe-LP, Xe-HP, and Xe-HPC. Spanning the market from the bottom to the top respectively, Xe-LP would go into integrated and entry-level discrete graphics, Xe-HP into enthusiast and datacenter parts, and finally Xe-HPC would be for high performance computing clusters such as the upcoming Aurora supercomputer, the US Department of Energy’s long-awaited exaflop machine.

Since then, Intel has revised that plan a bit, and what was three microarchitectures is now four. As part of Intel Architecture Day today, Intel is revealing Xe-HPG, an additional microarchitecture for gaming-focused chips. We have more on Xe-HPG in this article, but at a high level it’s meant to be the missing piece of the puzzle in Intel’s product stack, offering a high-performance gaming and graphics-focused chip as opposed to Xe-HP, which specializes in datacenter features like FP64 and multi-tile scalability. Xe-HPG is set to arrive in 2021, and notably will be built entirely at a third-party fab, unlike the rest of the Xe family.

Which, to bring things back to the immediate topic of Xe-LP, makes this year’s launch of Intel’s first Xe microarchitecture all the more important. Intel’s plans for Xe involve building up successive Xe parts – quite literally in Xe-HPC’s case – going for wider designs that incorporate ever increasing numbers of base building blocks, and then scaling out the number of GPUs when even that isn’t enough. So Xe-LP is very much the foundation of the Xe family, not just in diagrams but in architecture as well; and consequently, what Intel has designed for Xe-LP will have repercussions for the entire Xe product stack.

Xe-LP: Integrated with Tiger Lake, But Discrete as Well

While Intel’s product plans for Xe have them eventually reaching far and wide, it’s only fitting that things start at the same place they always have for Intel’s GPUs: integrated graphics. Xe and Xe-LP will be making their first appearance in the market as part of Intel’s new Tiger Lake SoC, which the company will be launching on September 2nd. And despite the fact that Intel isn’t talking much about the product side of Tiger Lake itself – preferring to keep today about architecture and making September about products – Tiger Lake was very clearly the focal point for Xe-LP’s design. So Tiger Lake is the catalyst for it all, as we’ll see when discussing Xe-LP’s features.

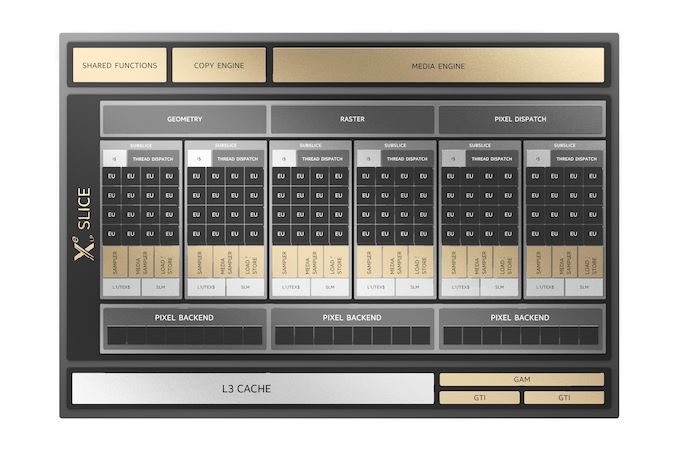

Given Intel’s official disclosures today (never mind the many, many leaks), it’s clear that Tiger Lake parts are going to top out with a single Xe-LP slice, which, new with this generation, now contains 96 of Intel’s more fundamental GPU Execution Units (EUs). Overall, the company is targeting a 2x increase in performance over Ice Lake (Gen11) graphics.
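To put the 96 EU figure and the 2x target in perspective, here is a rough back-of-the-envelope sketch. It assumes that the largest Ice Lake (Gen11) integrated GPU has 64 EUs and that performance scales linearly with EU count; neither assumption comes from Intel's disclosures today, and the function name is purely illustrative.

```python
# Assumption (not from today's disclosures): top Gen11 iGPU has 64 EUs.
GEN11_EUS = 64
XE_LP_EUS = 96        # one Xe-LP slice, per Intel
TARGET_SPEEDUP = 2.0  # Intel's stated target over Gen11


def required_per_eu_gain(old_eus: int, new_eus: int, target: float) -> float:
    """Per-EU throughput gain (clocks times per-clock work) needed to reach
    `target`, under the simplifying assumption of linear scaling with EUs."""
    width_gain = new_eus / old_eus
    return target / width_gain


width_gain = XE_LP_EUS / GEN11_EUS
per_eu_gain = required_per_eu_gain(GEN11_EUS, XE_LP_EUS, TARGET_SPEEDUP)

print(f"Gain from EU count alone: {width_gain:.2f}x")
print(f"Per-EU gain still needed: {per_eu_gain:.2f}x")
```

Under these assumptions, the wider GPU accounts for only 1.5x of the target, so roughly another 1.33x would have to come from higher clocks and per-EU efficiency improvements.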

But Tiger Lake won’t be the only place where Xe-LP will show up. As previously disclosed by Intel, the company is developing a discrete GPU version of it, which they are calling DG1. Designed to be paired with Tiger Lake in notebooks and other mobile devices, DG1 is Intel’s first discrete GPU in over twenty years, and it’s the spiritual successor of sorts to Intel’s GT3 and GT4e integrated GPU configurations. Only instead of building low-volume CPU designs with a larger GPU, Intel will instead sell OEMs a discrete GPU based on the same architecture and built on the same 10nm SuperFin process as the integrated GPU.

DG1 will be shipping this year, so expect to see it show up in higher-performing Tiger Lake laptops. However, Intel is otherwise disclosing very little about the part, as they are not disclosing much of anything with regards to product configurations today. So while we know that it’s based on Xe-LP and that it’s mobile-focused (Intel has dropped all discussion of desktop usage), we don’t have official details on anything such as its configuration or what type of memory it uses.

And forming the final pillar, the server space won’t be left out on Xe-LP either. Intel will be delivering a quad-GPU product for servers that they are calling SG1. Based on four DG1 GPUs, this will be a replacement of sorts for Intel’s Xeon Visual Compute Accelerator family of products. Designed to leverage Intel’s earlier integrated GPUs, the Xeon VCA cards were aimed at the video encoding market, using Intel’s QuickSync media blocks to accelerate the process. Now that Intel has discrete GPUs, they no longer need to gang together CPUs for this market, and instead can sell accelerators with just the GPU. It’s a bit of a niche market with regards to the larger GPU ecosystem, but it’s an important one for Intel, so they’re hoping that SG1 will make server operators stand up and take notice – or at least those pesky Goa'ulds.

33 Comments


mode_13h - Thursday, August 13, 2020

I can't speak to Direct3D, but OpenGL talks about work group invocations. I don't believe "threads" is mentioned anywhere in the API.

Dolda2000 - Thursday, August 13, 2020

Admittedly I haven't read the whole article yet, but it strikes me how the presentation seems to be comparing the new GPU to the previous GPU, rather than presenting it as a new architecture. Does this confirm that using the "Xe" moniker for this product is just marketing, and that it in fact is an evolution of previous Gen architectures? I mean, I don't mind if that's the case, I just wish they wouldn't overmarket it.

Ryan Smith - Thursday, August 13, 2020

"is an evolution of previous Gen architectures?"

It is an evolution of the previous Gen architectures. A major evolution, but an evolution none the less. Not even Intel is going to do a clean sheet design when they have bits and pieces that already work fine.

Dolda2000 - Thursday, August 13, 2020

Certainly, they're not going to create a new clean-slate ALU design just for the sake of it, but it has always been my impression that Xe (at least Xe-HPC) was going to be a more-or-less new architecture. Maybe that has just been my misunderstanding the whole time, and Xe-HPC too is going to be fundamentally Gen-based (though I seem to recall that being explicitly denied at some point), but what I was getting at here was that Xe-HPC is going to be the new architecture, and meanwhile this is "merely" an evolution of Gen for which they're just borrowing the product name of their higher-end offering to make it seem like more than what it is.

mode_13h - Thursday, August 13, 2020

You should distinguish between the ISA and uArch of the shader cores (EUs) vs. the macro-architecture of the GPU (e.g. buses, memories, caches, fixed-function units, etc.). So, you can have a macro-architecture that's *very* different, even while the ISA is a small evolution and the uArch of the EUs is somewhere in between.

tipoo - Thursday, August 13, 2020

RDNA 1 still has significant GCN bits in it, I'm sure Nvidia does the same a few generations in a row, there's no necessary contention between it being an evolution and it being marked as something substantially new.

abufrejoval - Thursday, August 13, 2020

IMHO the overhead of multi GPU rendering with an iGPU and dGPU can't really be offset by the small contribution the iGPU is likely to make to a beefy dGPU. More likely will be dGPU via Thunderbolt 4 and very seamless transitions on docking/undocking and that's good enough.

Too bad that won't work nearly as well with Ryzen notebooks so there again consumer choice goes down the drain somewhat. Not that I believe TB dGPU is really an attractive market unless prices change dramatically.

mode_13h - Thursday, August 13, 2020

Agreed. I think it would work much better to task the iGPU with other compute tasks that involve less communication bandwidth with the dGPU. Things like physics, AI, audio processing, etc.

brucethemoose - Thursday, August 13, 2020

Maybe post processing? Like an Intel version of ReShade? IIRC the frames have to come back to the IGPU's display block anyway.

tipoo - Thursday, August 13, 2020

In this case the IGP would be nearly equivalent to DG1