MSI’s GeForce N470GTX & GTX 470 SLI

by Ryan Smith on July 30, 2010 1:28 PM EST

MSI N470GTX: Power, Temperature, Noise, & Overclocking

As we’ve discussed in previous articles, Fermi family GPUs are no longer binned for operation at a single voltage; rather, each one is assigned whatever voltage it requires to operate at the desired clockspeeds. As a result, any two otherwise identical cards can have different core voltages, which muddies the situation somewhat. This is particularly true of our GTX 470 cards, as our N470GTX has a significantly different voltage than our reference GTX 470 sample.

GeForce GTX 400 Series Voltage

| Ref GTX 480 | Ref GTX 470 | MSI N470GTX | Ref GTX 460 768MB | Ref GTX 460 1GB |
|---|---|---|---|---|
| 0.987v | 0.9625v | 1.025v | 0.987v | 1.025v |

While our reference GTX 470 has a VID of 0.9625v, our N470GTX sample has a VID of 1.025v, a 0.0625v difference. Bear in mind that this comes down to the luck of the draw, and the situation could easily have been reversed. In any case this is the largest difference we’ve seen among any of the GTX 400 series cards we’ve tested, so we’ve gone ahead and recorded separate load numbers for our N470GTX sample to highlight the power/temperature/noise range that exists within a single product line.

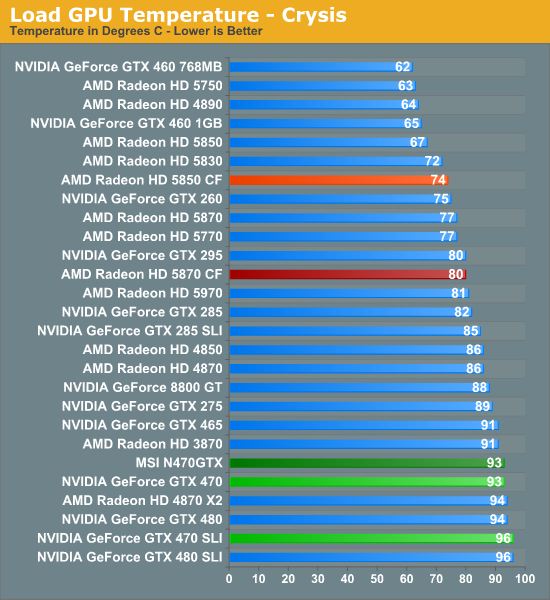

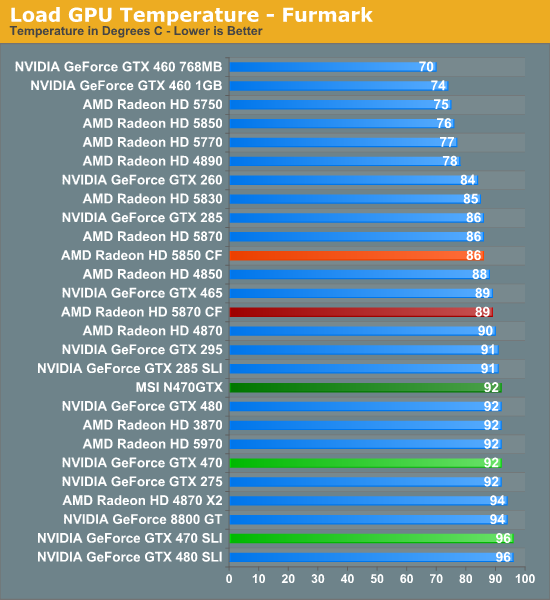

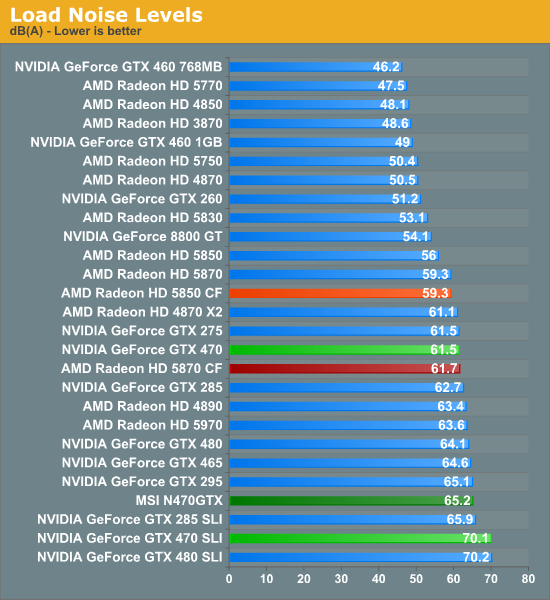

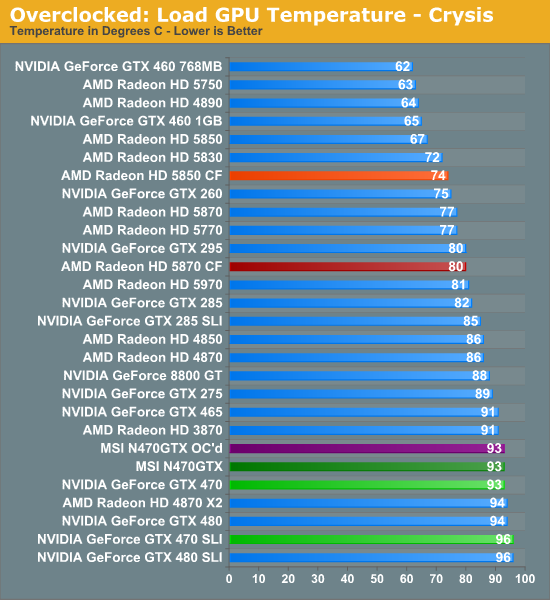

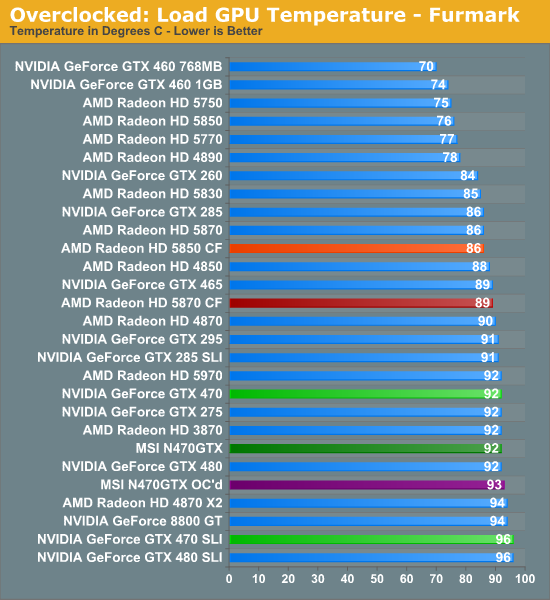

Looking quickly at load temperatures, in practice these don’t vary between samples, because the cooler is programmed to keep the card below a predetermined temperature and will simply ramp up to a higher fan speed on a hotter card.

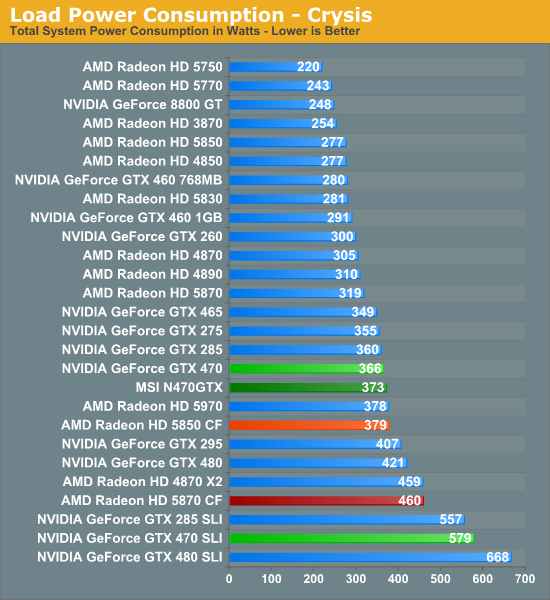

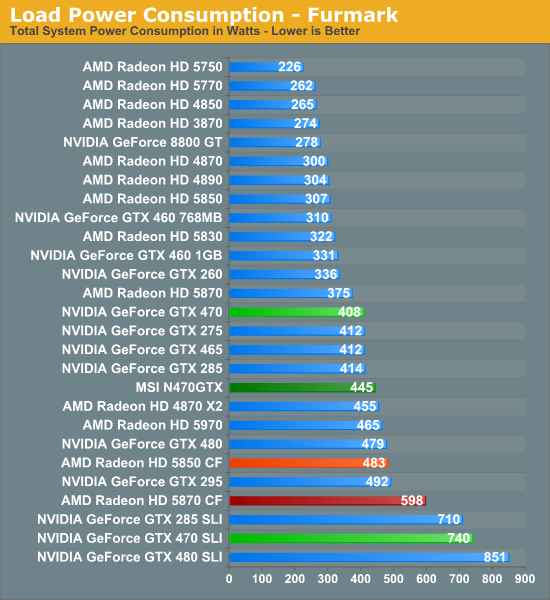

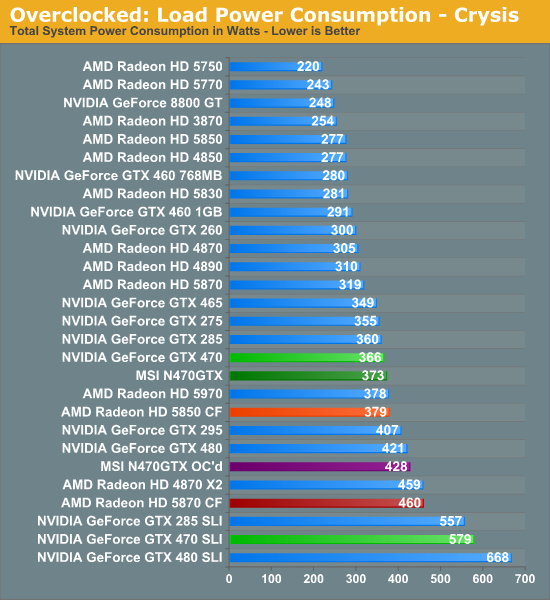

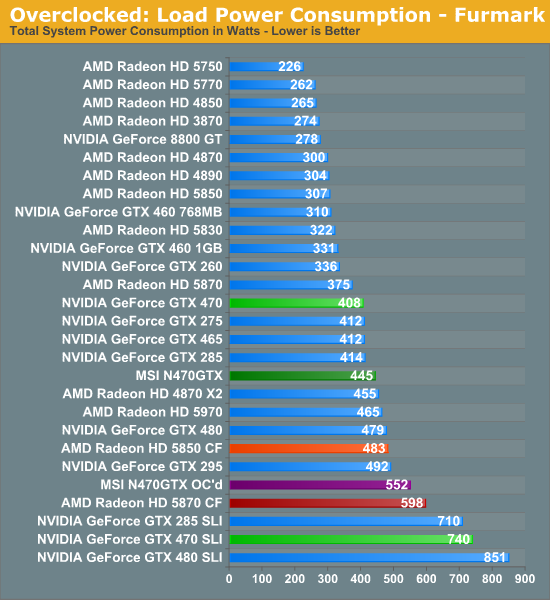

This brings us to power consumption, where the difference in VID makes itself much more apparent. With all other things held equal, under Crysis our N470GTX sample consumes 7W more than our reference GTX 470 sample. Under Furmark, however, this becomes a 37W difference, showcasing just how wide a variance the use of multiple VIDs can lead to within a single product. Ultimately, for most games even a large VID difference isn’t going to result in more than a few watts’ difference in power consumption, but under extreme loads a card with a lower-VID GPU has its advantages.
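A rough way to see why the VID gap matters more under Furmark than under Crysis is the first-order CMOS relation that dynamic (switching) power scales with C·V²·f. The sketch below is a simplification, not part of the review's methodology: it assumes both GPUs run the same clock with the same switched capacitance, ignores leakage (which also rises with voltage), and simply plugs in the two GTX 470 VIDs from the voltage table.

```python
# GPU VIDs (core voltages) from the GTX 400 series voltage table
REF_GTX470_VID = 0.9625
MSI_N470GTX_VID = 1.025


def dynamic_power_ratio_same_clock(v_card, v_ref):
    """First-order estimate of the dynamic (switching) power ratio of two
    otherwise identical GPUs at the same clock. Dynamic power ~ C * V^2 * f;
    with C and f equal, only the V^2 term differs. Leakage is ignored."""
    return (v_card / v_ref) ** 2


vid_delta = MSI_N470GTX_VID - REF_GTX470_VID
ratio = dynamic_power_ratio_same_clock(MSI_N470GTX_VID, REF_GTX470_VID)
print(f"VID delta: {vid_delta:.4f}v")
print(f"dynamic power ratio: {ratio:.3f} (~{(ratio - 1) * 100:.0f}% more)")
```

Under this approximation the 0.0625v gap means roughly 13% more switching power for the same work. A percentage like that turns into far more watts under a load such as Furmark, which keeps the GPU much busier than a game does, than under Crysis; that fits the direction of the 7W versus 37W gap, though the model is far too crude to predict either figure.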

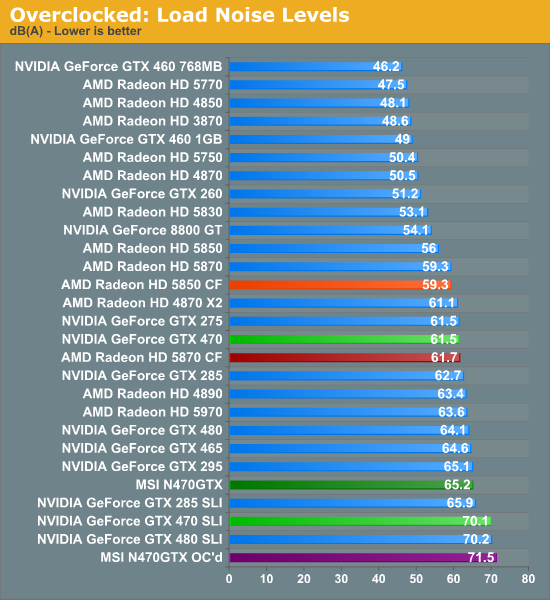

As we stated before, the cooler on the GTX 470 targets a specific temperature, varying the fan speed to maintain it. Because of the higher VID and greater power draw (and hence heat dissipation) of our N470GTX sample, the card ends up a good 3.7dB louder under Furmark than our reference sample. Note that this is a worst-case scenario, though: under most games there’s a much smaller power draw difference between cards of different VIDs, and as a result the difference in load noise is also minimal.
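The temperature-targeting behavior can be illustrated with a toy thermal model: heat flows in from the GPU, heat flows out through a heatsink whose conductance grows with fan speed, and an integral-only controller nudges the fan up while the card is above its target and down while it is below. Everything here is hypothetical (the 80°C target, heat capacity, conductance range, gain, and the two power levels, picked 37W apart only to echo the Furmark gap); it is not NVIDIA's fan firmware, just a sketch of why two cards with different power draw can settle at the same temperature but different fan speeds.

```python
def simulate_fan_control(power_w, target_c=80.0, ambient_c=25.0,
                         seconds=4000.0, dt=0.1):
    """Toy model: return (final_temp_c, final_fan_fraction) for a constant load.

    All constants are hypothetical and chosen only for illustration.
    """
    heat_capacity_j_per_c = 400.0   # thermal mass of GPU + heatsink
    g_idle, g_full = 2.0, 8.0       # heatsink conductance (W/C) at 0% and 100% fan
    ki = 0.0005                     # integral gain: fan fraction per (C * s)

    temp_c = ambient_c
    fan = 0.3                       # starting fan duty, 0.0 to 1.0
    for _ in range(int(seconds / dt)):
        conductance = g_idle + (g_full - g_idle) * fan
        # Euler step of the heat balance: power in minus heat carried away
        temp_c += dt * (power_w - conductance * (temp_c - ambient_c)) / heat_capacity_j_per_c
        # Integral action: raise the fan while above target, lower it while below
        fan += dt * ki * (temp_c - target_c)
        fan = min(max(fan, 0.0), 1.0)  # clamp to the physical 0-100% range
    return temp_c, fan


cooler_card = simulate_fan_control(200.0)
hotter_card = simulate_fan_control(237.0)  # 37W more heat to dissipate
print(f"200W card: {cooler_card[0]:.1f}C at {cooler_card[1]:.0%} fan")
print(f"237W card: {hotter_card[0]:.1f}C at {hotter_card[1]:.0%} fan")
```

An integral term is used because a purely proportional controller would leave the hotter card sitting slightly above target; with integral action both cards converge on the same temperature, and only the fan speed (and hence noise) differs.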

Overclocking

With MSI’s Afterburner software it’s possible to increase the core voltage on the N470GTX up to 1.0875v, 0.0625v above the stock voltage of our sample card. Although by no means a small difference, neither is it more than the reference GTX 470 cooler can handle, so in a well-ventilated case we’ve found that it’s safe to go all the way to 1.0875v.

| | Stock Clock | Max Overclock | Stock Voltage | Overclocked Voltage |
|---|---|---|---|---|
| MSI N470GTX | 607MHz | 790MHz | 1.025v | 1.0875v |

With our N470GTX cranked up to 1.0875v, we were able to increase the core clock to 790MHz (which also gives us a 1580MHz shader clock), a 183MHz (30%) increase in the core clock speed. Anything beyond 790MHz would result in artifacting.
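The overclocking arithmetic is easy to check. This sketch assumes the GTX 470's reference core clock of 607MHz as the baseline (the figure the 183MHz / 30% numbers imply) and that the shader clock runs at twice the core clock, as the 790MHz to 1580MHz pairing indicates.

```python
STOCK_CORE_MHZ = 607      # assumed GTX 470 reference core clock
OC_CORE_MHZ = 790         # max stable overclock reached in the review
SHADER_MULTIPLIER = 2     # shader clock = 2x core clock on this GPU


def overclock_summary(stock_mhz, oc_mhz, shader_multiplier=SHADER_MULTIPLIER):
    """Return (gain in MHz, gain in percent, resulting shader clock in MHz)."""
    gain_mhz = oc_mhz - stock_mhz
    gain_pct = 100.0 * gain_mhz / stock_mhz
    return gain_mhz, gain_pct, oc_mhz * shader_multiplier


gain_mhz, gain_pct, shader_mhz = overclock_summary(STOCK_CORE_MHZ, OC_CORE_MHZ)
print(f"+{gain_mhz}MHz core ({gain_pct:.0f}%), {shader_mhz}MHz shader")
```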

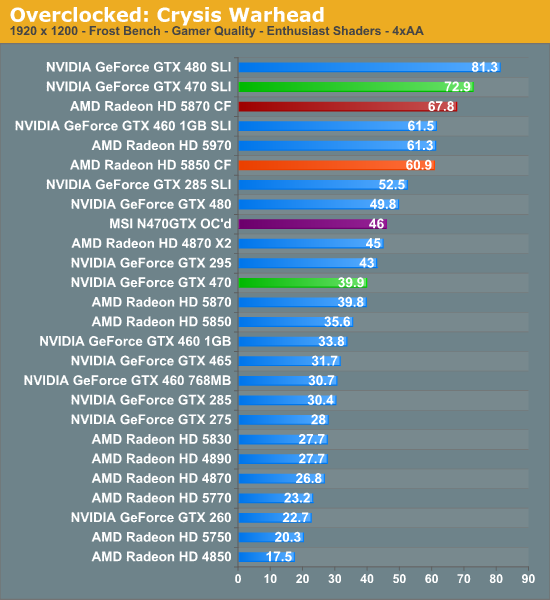

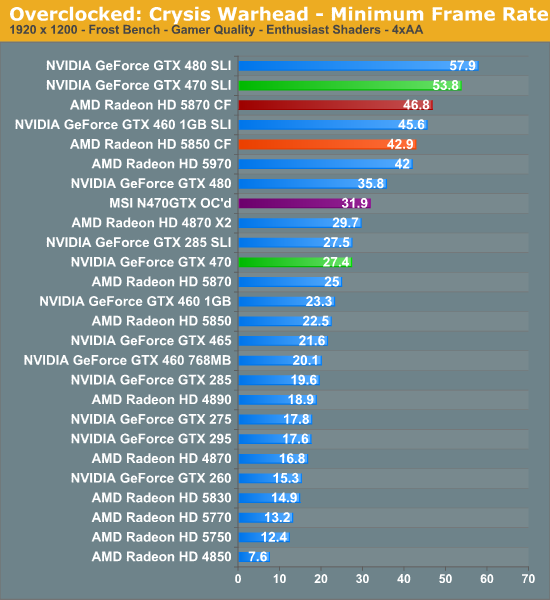

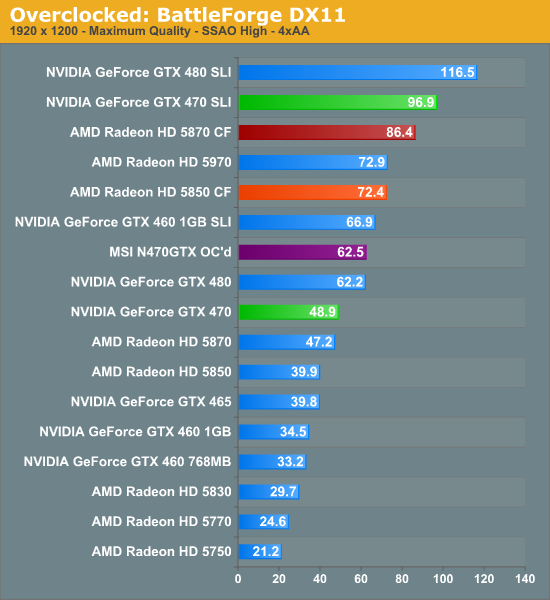

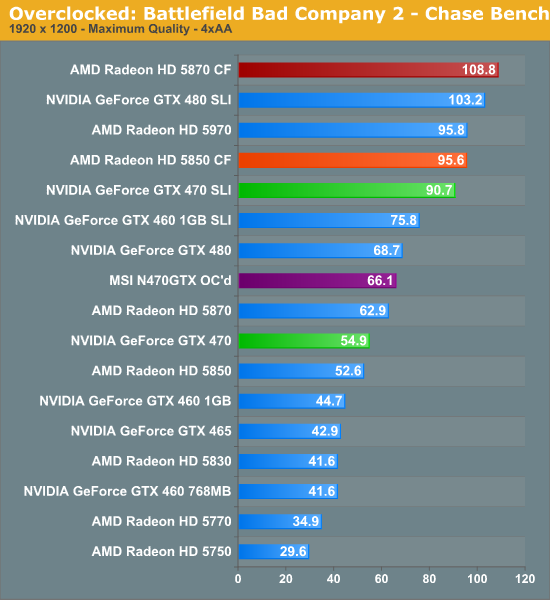

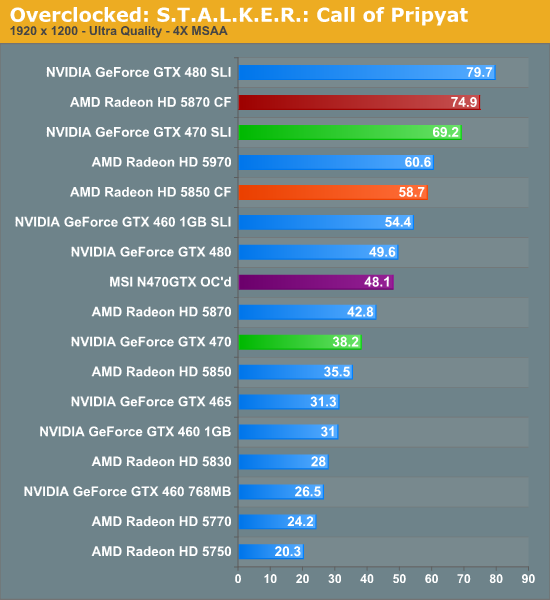

Overclocking alone isn’t enough to push the N470GTX to GTX 480 levels, but it’s enough to come close much of the time. In cases where the GTX 480 already has a solid lead over its competition, the overclocked N470GTX is often right behind it; in practice this means the overclocked N470GTX consistently beats the Radeon 5870 in those cases, something a stock-clocked GTX 470 cannot do.

But because it relies on overvolting, that extra performance comes at a price. While temperatures hold steady, the additional voltage directly leads to additional power draw and higher fan speeds. The overclocked N470GTX can approach GTX 480 performance, but it also exceeds the GTX 480 in power and noise. Under load the overclocked N470GTX is pushing just shy of 100% fan speed, making it the loudest card in our test suite. Similarly, its load power consumption under both Crysis and Furmark exceeds that of any of our stock-clocked single-GPU cards.
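To put a rough number on the extra power draw, the first-order C·V²·f scaling for dynamic power can be applied to the overclock: voltage from 1.025v to 1.0875v and core clock from an assumed 607MHz reference clock to 790MHz. This is an estimate of GPU dynamic power only, not a measurement; it ignores leakage, memory, and the rest of the board, so treat it as an order-of-magnitude sketch.

```python
def relative_dynamic_power(v_new, v_ref, f_new_mhz, f_ref_mhz):
    """First-order estimate: dynamic power ~ C * V^2 * f, with C held constant."""
    return (v_new / v_ref) ** 2 * (f_new_mhz / f_ref_mhz)


voltage_term = (1.0875 / 1.025) ** 2   # overvolt: stock -> max Afterburner voltage
clock_term = 790 / 607                 # overclock: assumed stock -> max stable core
ratio = relative_dynamic_power(1.0875, 1.025, 790, 607)
print(f"voltage x{voltage_term:.3f}, clock x{clock_term:.3f}, "
      f"dynamic power x{ratio:.3f}")
```

Under these assumptions the voltage bump alone accounts for roughly 13% and the clock bump for roughly 30%, and because the two multiply, the estimate lands close to 1.5x the stock card's dynamic power. That compounding is why an overvolted overclock costs so much more in power and noise than either change would on its own.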

Ultimately, overclocking the N470GTX provides a very generous performance boost, but to make use of it you need to put up with an incredible amount of heat and noise, so it’s by no means an easy tradeoff.

41 Comments

tech6 - Friday, July 30, 2010

Is it just me getting old, or have desktop PCs become somewhat boring? There seems to be a lot more sizzle and innovation in mobile, server and even home theater tech.

lunarx3dfx - Friday, July 30, 2010

I have to agree, I really miss the days of overclocking with dip switches and jumpers, when a 100 MHz OC actually meant performance you could feel and see. It's not as much fun as it used to be. The mobile market, smartphones especially, has gotten very interesting in recent years, especially if you have gotten into homebrew and seeing what these devices are really capable of.

araczynski - Saturday, July 31, 2010

i wouldn't say boring, just that each new iteration of cards is becoming less and less important. game developers aren't pushing hardware as hard or as fast as the manufacturers would want them to.

i've had my 4850's in CF since they came out, and i'm still playing everything at 1080p to this day, granted, i don't use AA, but i never have, so don't care.

dragon age, mass effect 2, star craft 2 all smooth as butter, why am i going to waste time/money with a new video solution?

this is still on my E8600(?) 3.16GHz C2D (win7).

people are buying too much into the marketing, so kudos to their marketing departments, of which anandtech is one i suppose.

7Enigma - Monday, August 2, 2010

Hmm... have you checked to see if you are CPU limited in games? I would guess that at 1080p you could very well be CPU limited at stock E8600 speeds. I have the same proc and it is easy as pie to OC to close to 4GHz. I currently run at 3.65GHz at stock voltage and game at 3.85GHz (again stock voltage) with little more tweaking than upping the frequency (9.5X multi, 385MHz bus). And that's just with an OC'd 4870; dual 4850's surely could use the extra CPU power at such a (relatively) low resolution.

HTH

quibbs - Monday, August 2, 2010

In my humble opinion, games drove the PC market into the mainstream. They spurred the development of most of the major components. This includes graphics cards. Especially graphics cards. But it seems that game development for the PC, while still major, isn't what it once was. This has to slow down the video card market, as games for PC are less numerous.

Perhaps a major breakthrough in gaming (3D, holograms, etc.) will continue the card wars, but I think it will eventually head in a different direction. A reversal, if you will: energy-efficient cards in a smaller form factor that are very potent at pushing graphics. Think about it, the cards are getting bigger and more power hungry as they grow in capabilities. At some point this will be unsustainable (in many ways).

Some company will realize that less is more and will produce such a product (when technology permits), and will kick off the new wars.

Just a thought....

piroroadkill - Monday, August 2, 2010

We're getting more and more powerful hardware, but most games are being developed with consoles in mind, so the benefit of having a vastly more powerful machine is very small.

I don't feel like I need to upgrade my 4890 and C2D @ 3.4 for gaming, at all. To be honest, my 2900XT didn't really need upgrading, but it ran really hot and started to balls up.

softdrinkviking - Monday, August 2, 2010

i don't know what a 2900xt is, but i upgraded from a radeon 3870 because i couldn't play butcher bay or a couple of other titles at my screen's native 1920x1200. that was without AA or MSAA or any kind of ambient occlusion or anything, it just couldn't hold the framerates at all.

so i got a 5850, and it's been great for everything under the sun. sometimes i can even max out the AA and stuff and the games play fine. (not in crysis)

anyway, the current gen of console games can usually take advantage of a regular LCD TV's 1080p, so if i can't play a game at that resolution without any extra visual enhancements selected, that sounds like there is room for improvement.

As for the future of gaming, i personally believe that computers will supplant consoles eventually.

maybe as soon as 15 years?

it's just a guess, i can't back it up, but i personally can't see how sony will be able to afford losing so much money on a new generation console.

if the current generation lasts long enough for sony to take advantage of the only recently profitable PS3, then home PCs will have a chance to get much faster and cheaper before a PS4 has time to come to fruition.

it just seems like the consoles will eventually become financially untenable.

damn, my dog just died, gotta go.

afkrotch - Monday, August 2, 2010

All depends on hardware and games. I have to turn down my game settings on Metro 2033. I use a C2D @ 3.3 GHz and a GTS 250. I get way too many framerate drops at 1920x1200 with no AA/AF.

Granted, I can still play any game out there, so long as I'm willing to lower the quality/resolution.

arnavvdesai - Friday, July 30, 2010

I just wanted to ask if the author or the staff at AT feel that desktop graphics cards are a shrinking market. Does the continued investment by ATi and Nvidia into the development of newer cards seem justified? I own a 5870 and, barring a technical fault, I don't plan to upgrade for another 3 years, as I don't see myself upgrading my monitors.

Is the market slowly but surely shrinking, or am I just wrong in assuming that? If so, what should these companies be researching?

nafhan - Friday, July 30, 2010

Well, the tech that goes into making a high end card eventually makes it into mobile, low end, integrated, cell phone, and console parts through a combination of cutting down the high end and successive silicon process improvements. So, ATI and Nvidia don't expect to make all their R&D money back from the initial run of high end GPU's. High end GPU's are basically proof of concepts for new technology. They'd certainly like to sell a bunch of them, but mostly they want to make sure the technology works.