ASRock X99 WS-E/10G Motherboard Review: Dual 10GBase-T for Prosumers

by Ian Cutress on December 15, 2014 10:00 AM EST. Posted in: Motherboards, IT Computing, Intel, ASRock, Enterprise, X99, 10GBase-T

For a number of months I have been wondering when 10GBase-T would be getting some prime time in the consumer market. Aside from add-in cards, there was no onboard solution, until ASRock announced the X99 WS-E/10G. We were lucky enough to get one in for review.

10GBase-T is somewhat of an odd standard. Based on upgraded RJ-45 connections, it pushes the standard of regular wired networking in terms of performance and capability. The controllers required for it are expensive, as the situations that normally require this bandwidth tend to use different standards that afford other benefits such as lower power, lower heat generation and more efficient signaling standards. Put bluntly, 10GBase-T is hot, power hungry, expensive, but ultimately the easiest to integrate into a home, small office or prosumer environment. Users looking into 10GBase-T calculate cost in hundreds of monies per port, rather than pennies, as the cheapest unmanaged switches cost $800 or so. A standard two port X540-T2 PCIe 2.0 x8 card can cost $400-800 depending on your location, meaning a minimum $2000 for a 3 system setup.
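The $2000 figure follows from the low end of the prices quoted above: one unmanaged switch plus one two-port card per machine. A minimal Python sketch of that arithmetic, where the function name and the assumption of exactly one card per system are illustrative choices rather than anything from the review:

```python
def min_10g_setup_cost(systems, switch_usd=800, card_usd=400):
    """Lowest-cost 10GBase-T setup: one switch plus one add-in card per system.

    Defaults use the low end of the prices quoted in the text
    (about $800 for an unmanaged switch, $400 for an X540-T2 card).
    """
    return switch_usd + systems * card_usd


if __name__ == "__main__":
    # Three systems: 800 + 3 * 400
    print(min_10g_setup_cost(3))
```

With cards at the upper end of the quoted range ($800 each), the same three-system setup would come to $3200.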

The benefits of 10GBase-T outside the data center sound somewhat limited. It doesn't increase your internet performance, as that is determined by the line outside the building. For a home network, its best use is in computer to computer data transfer. Normally a prosumer environment might have a server or workstation farm for large dataset analysis and GBit just isn't enough. Or the most likely home scenario is streaming lossless 4K content to several devices at once. For most users this sounds almost a myth, but for a select few it is a reality, or at least something near it. Some users are teaming individual GBit ports for similar connectivity as well.

Moving the 10GBase-T controller and ports onto the motherboard ultimately frees up PCIe slots for other devices and makes integration easier, although you lose the ability to transfer the card to another machine if needed. The X540-BT2 used in the X99 WS-E/10G is fed by eight of the CPU's PCIe 3.0 lanes on a 40-lane CPU, but can also work with four lanes via the 28-lane i7-5820K CPU if required. Using the controller on the motherboard also helps with pricing, providing an integrated system and hopefully shaving $100 or so from the ultimate cost. That being said, as it ends up in the high end model, it is aimed at those where hardware cost is a minimal part of their prosumer activities, where an overclocked i7-5960X system with 4+ PCIe devices is par for the course.

ASRock X99 WS-E/10G Overview

In an ideal testing scenario, we would test motherboards the same way we do medicine, with a double blind randomized test. In this circumstance, there would be no markings to give away who made the device, and during testing there would be no indication of the device either. With CPUs this is relatively easy if someone else sets the system up. With motherboards, it is almost impossible due to the ecosystem of motherboard design that directly impacts expectation and use model. Part of the benefit of a system is in the way it is presented as well as the ease of use of software, to the point where manufacturers will spend time and resources developing the extra tools. Providing the tools is easy enough, but developing them into an experience is an important aspect. So when ASRock presents a motherboard with 10GBase-T, the key points here are ‘10GBase-T functionality’ coming from ‘ASRock’.

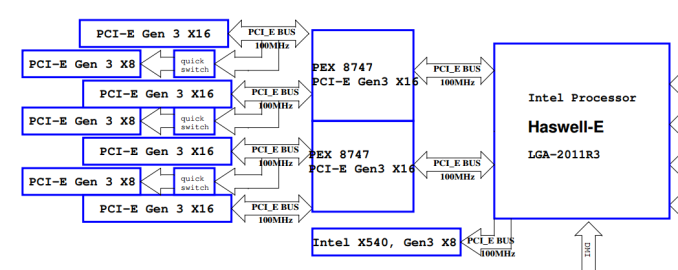

Due to the cost of the 10GBase-T controller, the Intel X540-BT2, ASRock understandably went high-end in their first implementation. This means a full PCIe 3.0 x16/x16/x16/x16 layout due to the use of two PLX 8747 chips that act as FIFO buffer/muxes to increase the lane count. For those new to PLX 8747 chips, we went in-depth on their function when they were first released which you can read here. These PLX chips also are quite expensive, at least adding $40 each to the cost of the board for the consumer, but allow ASRock to implement top inter-GPU bandwidth. This means that from the 40 PCIe lanes of an LGA2011-3 CPU, 8 go to the X540-BT2 and 16 each go to the PLX chips which output 32 each. For users wanting to go all out with single slot PCIe co-processors, the X99 WS-E/10G will allow an x16/x8/x8/x8/x8/x8/x8 arrangement.

If the WS in the name was not a giveaway, with the cost of these extra controllers, ASRock is aiming at the 1P workstation market. As a result the motherboard has shorter screws to allow 1U implementation and full Xeon support with ECC/RDIMM up to 128GB. The power delivery package is ASRock’s 12-phase solution along with the Super Alloy branding indicating XXL heatsinks as well as server grade components. The two PLX chips are cooled by a large heatsink with a small fan, although this can be disabled if the user’s cooling is sufficient. Another nod to the WS market is the pair of Intel I210 network interfaces alongside the dual 10GBase-T, affording a potential teaming rate of 22 Gbps (2 x 1 Gbps plus 2 x 10 Gbps) all in. There is also a USB Type-A port sticking out for license dongles as well as a SATA DOM port. TPM, COM and two BIOS chips are also supported.

On the consumer side of the equation, the chipset IO is split into four lanes for an M.2 x4 port, the two Intel I210 NICs mentioned before and a SATA Express implementation. The M.2 slot has some PCIe sharing duties with a Marvell 9172 SATA controller as well, meaning that using the Marvell SATA ports puts the M.2 into x2 mode. The board has 12 total SATA ports, with six PCH RAID capable, four PCH non-RAID capable and two from the Marvell. Alongside this are eight USB 3.0 ports, four from two onboard headers and four ports on the rear panel from an ASMedia ASM1074 hub. An eSATA port is on the rear panel as well, sharing bandwidth with one of the non-RAID SATA ports. Finally the audio solution is ASRock’s upgraded ALC1150 package under the Purity Sound 2 branding.

Performance wise, ASRock uses an aggressive form of MultiCore Turbo to score highly in our CPU tests. Due to the 10G controller, the power consumption is higher than other motherboards we have tested, and it also impacts the DPC Latency. USB 2.0 speed was a little slow, and the audio had a low THD+N result, but POST times were ballpark for X99. The software and BIOS from ASRock are similar to those covered in our previous ASRock X99 WS review.

The 10GBase-T element of the equation was interesting, given that for PC-to-PC individual transfers from RAMDisk to RAMDisk peaked at 2.5 Gbps. To get the most from the protocol the data transfer requires several streams (more than one transfer function to allow for interleaving), at least four for 6 Gbps+ or eight for 8 Gbps+. One bottleneck in the transfer is the CPU, showing 50% load on an eight-thread VM during transfer using five streams, perhaps indicating that an overclocked CPU (or something like the i7-4790K with a higher threaded speed) might be preferable.
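To put those stream counts in perspective, the sketch below converts the approximate rates quoted above into transfer times. The 100 GB dataset size is a hypothetical figure chosen for illustration, and the 6 and 8 Gbps entries are the lower bounds from the text rather than exact measurements:

```python
# Approximate throughput from the text, keyed by number of parallel streams.
# The 6.0 and 8.0 entries are lower bounds ("6 Gbps+" and "8 Gbps+").
OBSERVED_GBPS = {1: 2.5, 4: 6.0, 8: 8.0}


def transfer_seconds(size_gigabytes, gbps):
    """Seconds to move size_gigabytes (decimal GB) at a sustained rate in Gbps."""
    return size_gigabytes * 8 / gbps


if __name__ == "__main__":
    dataset_gb = 100  # hypothetical dataset size, not taken from the review
    for streams, gbps in sorted(OBSERVED_GBPS.items()):
        secs = transfer_seconds(dataset_gb, gbps)
        print(f"{streams} stream(s) at {gbps} Gbps: about {secs:.0f} s")
```

Under these assumptions a single stream takes a little over five minutes, while eight streams bring the same transfer under two minutes.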

Whenever a motherboard company asks what a user looks for in a motherboard, I always mention that if they have a particular need, they will only look at motherboards that have the functionality. Following this, users would look at choosing the right socket, then filter by price, brand, looks and reviews (one would hope in that vague order). The key point here is that the X99 WS-E/10G caters to the specific crowd that needs a 10GBase-T motherboard. If you do not need it, the motherboard is overly expensive.

Visual Inspection

Motherboards with lots of additions tend to be bigger than usual, and the WS-E/10G sits in the E-ATX form factor. This allows the addition of the X540-BT2 controller and the two PLX 8747 switches with more PCB room for routing. As the 10G controller is rated at 14W at full tilt it comes covered with a large heatsink which is connected via a heatpipe to the heatsink covering the power delivery. The smaller heatsink covering the chipset and two PLX chips is not connected to the others, however it does have a small fan (which can be disconnected) to improve cooling potential.

As this motherboard is oriented towards the workstation market we get features such as COM and TPM headers, with a total of five fan headers around the motherboard. The two CPU fan headers, one four-pin and one three-pin, are at the top right of the board, with a 3-pin CHA header just above the SATA ports and another just below. The final header is on the bottom panel, this time four-pin. The ‘white thing that looks like a fan header’ at the bottom of the board is actually used for SATA DOM power. Note that HDD Saver does not feature on this motherboard.

The DRAM slots are single-sided with the latches due to the close proximity of the first PCIe slot, which means that users should ensure that their DRAM is fully pushed in at both ends. Next to the DRAM is one of the PCIe power connectors, a horrible looking 4-pin molex connector right in the middle of the board. I asked ASRock about these connectors (because I continually request they be replaced) and ASRock’s response was that they would prefer a single connector at the bottom but some users complain that their cases will not allow another connector angled down in that location, so they put one here as well. Users should also note that only one needs to be connected when 3+ PCIe devices are used to help boost power. I quizzed them on SATA power connectors instead, or a 6-pin PCIe, however the response was not enthusiastic.

Next to this power connector is a USB 2.0 type-A port on the motherboard itself, which we normally see on server/workstation motherboards for USB license keys or other forms of not-to-be-removed devices.

On the right hand side of the motherboard is our TPM header followed by the 24-pin ATX power connector and two USB 3.0 headers, where both of these come from the PCH. With the SATA ports there are twelve in total in this segment with the first two being powered by a Marvell controller. The next ten are from the PCH with the first six RAID capable, then the next four are not. As part of this final four there is also a SATA Express port coming from the chipset. For more connectivity we have a black SATA DOM port at the bottom of the board and a PCIe 2.0 x4 M.2 slot from the chipset supporting 2230 to 22110 sized devices. If a device is plugged into the final four SATA ports, the M.2 bandwidth drops to M.2 x2. This suggests that ASRock can partition some of the bandwidth from the second non-RAID AHCI controller in the chipset for M.2 usage, and that the second AHCI controller is in-part based on PCIe. This further supports my prediction that the chipset is just turning into a mass of PCIe lanes / FPGA as required by the motherboard manufacturer.

At the bottom of the motherboard are our power/reset buttons alongside the two-digit debug. The two BIOS chips are also here with a BIOS select switch, two SATA-SGPIO headers, two USB 2.0 headers, a COM header, a Thunderbolt header, two of the fan headers and that ugly molex power connector. As usual the front panel audio and control headers are here too, as well as two other headers designated FRONT_LAN, presumably to allow server builders to route the signals from the network ports to LEDs on the front of the case.

The audio subsystem uses an upgraded Realtek ALC1150 package, meaning an EMI shield, PCB separation and enhanced filter caps. The PCIe layout is relatively easy to follow:

The 40 PCIe lanes from the CPU are split into x16/x16/x8. The final x8 goes to the 10GBase-T controller, whereas each x16 group feeds one PLX controller. Each PLX chip gives the effect of muxing its 16 lanes into 32 (with an extra buffer), allowing it to feed two x16 slots for a total of four PCIe 3.0 x16 (hence x16/x16/x16/x16 support). Three of these x16 slots are quick switched, each splitting into x8/x8 with a second slot.

This means:

Four PCIe devices or fewer: x16/-/x16/-/x16/-/x16

Five to seven PCIe devices: x8/x8/x8/x8/x8/x8/x16

So for anyone that wants to strap on some serious PCIe storage, RAID cards or single slot PCIe co-processors, everyone gets at least PCIe 3.0 x8 bandwidth.
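The two layouts can be captured in a small lookup. This is a simplified sketch that treats the board as having just the two modes listed above (the real quick switches presumably act per slot pair), and the function names are illustrative rather than anything ASRock provides:

```python
PLX_CHIPS = 2
PLX_DOWNSTREAM_LANES = 32  # each PLX 8747 outputs 32 lanes, per the text


def slot_widths(devices):
    """Electrical width of the seven slots in order; 0 marks a slot that gets no lanes."""
    if not 0 <= devices <= 7:
        raise ValueError("the board has seven PCIe slots")
    if devices <= 4:
        return [16, 0, 16, 0, 16, 0, 16]
    return [8, 8, 8, 8, 8, 8, 16]


def format_layout(widths):
    """Render widths in the x16/-/x16 notation used in the text."""
    return "/".join(f"x{w}" if w else "-" for w in widths)


if __name__ == "__main__":
    for n in (4, 7):
        widths = slot_widths(n)
        # Either layout uses every downstream lane of both PLX chips.
        assert sum(widths) == PLX_CHIPS * PLX_DOWNSTREAM_LANES
        print(n, "devices:", format_layout(widths))
```

In both modes the slot widths add up to the 64 downstream lanes the two PLX chips provide, which is why every slot can keep at least x8.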

For users on the i7-5820K, things are a little different but not so much. Due to only having 28 PCIe lanes, the outputs are split x16/x8/x4, with x4 going to the X540. This leaves x16 and x8 going to the PLX controllers, but in both cases each PLX chip will configure to 32 PCIe lanes, still giving an x16/x16/x16/x16 or x8/x8/x8/x8/x8/x8/x16 arrangement. With only four lanes, the two 10GBase-T ports are still designated to work with PCIe 3.0 x4 (given the original requirement of PCIe 2.0 x8 for the controller), but full bandwidth might not be possible according to Intel’s FAQ on the X540 range – check point 2.27 here.
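A rough bandwidth check shows why four lanes may fall short. The sketch below uses the standard per-lane signalling rates and line encodings for PCIe 2.0 and 3.0, and ignores packet and protocol overhead. Whether the X540 link actually runs at PCIe 2.0 or 3.0 speed on this board is treated as an open assumption, so both cases are shown:

```python
# Per-lane raw signalling rate (GT/s) and line-encoding efficiency per PCIe generation.
PCIE_GEN = {
    2: (5.0, 8 / 10),     # PCIe 2.0: 5 GT/s with 8b/10b encoding
    3: (8.0, 128 / 130),  # PCIe 3.0: 8 GT/s with 128b/130b encoding
}


def link_gbps(gen, lanes):
    """Usable link bandwidth in Gbps, per direction, before protocol overhead."""
    rate, efficiency = PCIE_GEN[gen]
    return rate * efficiency * lanes


def can_feed_ports(gen, lanes, ports=2, port_gbps=10.0):
    """True if the link can carry every port at line rate in one direction."""
    return link_gbps(gen, lanes) >= ports * port_gbps


if __name__ == "__main__":
    for gen, lanes in ((2, 8), (2, 4), (3, 4)):
        print(f"PCIe {gen}.0 x{lanes}: {link_gbps(gen, lanes):.1f} Gbps,",
              "enough for dual 10G" if can_feed_ports(gen, lanes) else "short of dual 10G")
```

At PCIe 2.0 rates an x4 link offers about 16 Gbps against the 20 Gbps that two saturated 10GBase-T ports would need, whereas x8 at PCIe 2.0 or x4 at PCIe 3.0 both clear that bar before overhead is considered.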

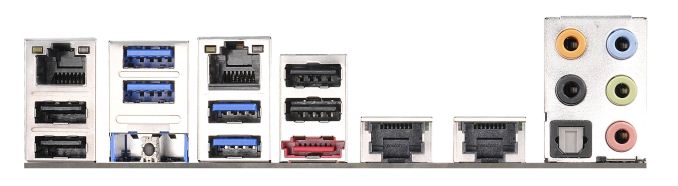

The rear panel removes any PS/2 ports and gives four USB 2.0 alongside four USB 3.0, with the latter coming from an ASMedia hub. The two network ports on the left are from Intel I210 controllers, whereas the two on the right are the 10GBase-T ports from the Intel X540-BT2 controller. There is a Clear_CMOS button, an eSATA port and the audio jacks to round off the set.

Board Features

| ASRock X99 WS-E/10G | |
|---|---|
| Price | US (Newegg) |
| Size | E-ATX |
| CPU Interface | LGA2011-3 |
| Chipset | Intel X99 |
| Memory Slots | Eight DDR4 DIMM slots; supporting up to 64 GB UDIMM, 128 GB RDIMM; up to quad channel, 1066-3200 MHz |
| Video Outputs | None |
| Network Connectivity | 2 x Intel I210 (1Gbit); 2 x Intel X540-BT2 (10GBase-T) |
| Onboard Audio | Realtek ALC1150 |
| Expansion Slots | 4 x PCIe 3.0 x16; 3 x PCIe 3.0 x8 |
| Onboard Storage | 6 x SATA 6 Gbps, RAID 0/1/5/10; 4 x S_SATA 6 Gbps, no RAID; 2 x SATA 6 Gbps, Marvell 9172; 1 x SATA Express; 1 x M.2 PCIe 2.0 x4 / x2 |
| USB 3.0 | 4 x USB 3.0 on Rear Panel (ASMedia ASM1042 Hub); 2 x USB 3.0 Headers onboard (PCH) |
| Onboard | 12 x SATA 6 Gbps; 1 x SATA DOM; 1 x M.2 x4; 2 x USB 2.0 Headers; 2 x USB 3.0 Headers; 5 x Fan Headers; 1 x USB 2.0 Type-A; TPM Header; COM Header; Thunderbolt Header; 2 x FRONT_LAN Headers; 2 x SATA_SGPIO Headers; Power/Reset Switches; Two Digit Debug; BIOS Switch; SATA DOM Power; Front Panel Header; Front Audio Header |
| Power Connectors | 1 x 24-pin ATX; 1 x 8-pin CPU; 2 x VGA Molex |
| Fan Headers | 2 x CPU (4-pin, 3-pin); 3 x CHA (4-pin, 2 x 3-pin) |
| IO Panel | 2 x USB 2.0; 2 x USB 3.0 (ASMedia Hub); 2 x Intel I210 Gbit Network; 2 x Intel X540-BT2 10GBase-T Network; eSATA; Clear_CMOS Button; Audio Jacks |
| Warranty Period | 3 Years |
| Product Page | Link |

45 Comments

gsvelto - Tuesday, December 16, 2014

Where I worked we had extensive 10G SFP+ deployments with ping latency measured in single-digit µs. The latency numbers you gave are for pure-throughput oriented, low CPU overhead transfers and are obviously unacceptable if your applications are latency sensitive. Obtaining those numbers usually requires tweaking your power-scaling/idle governors as well as kernel offloads. The benefits you get are very significant on a number of loads (e.g. lots of small files over NFS) and 10GBase-T can be a lot slower on those workloads. But as I mentioned in my previous post 10GBase-T is not only slower, it's also more expensive, more power hungry and has a minimum physical transfer size of 400 bytes. So if your load is composed of small packets and you don't have the luxury of aggregating them (because latency matters) then your maximum achievable bandwidth is greatly diminished.

shodanshok - Wednesday, December 17, 2014

Sure, packet size plays a far bigger role for 10GBase-T than for optical (or even copper) SFP+ links.

Anyway, the pings tried before were for relatively small IP packets (physical size = 84 bytes), which are way smaller than the typical packet size.

For message-passing workloads SFP+ is surely a better fit, but for MPI it is generally better to use more latency-oriented protocol stacks (if I am not mistaken, Infiniband uses a lightweight protocol stack for this very reason).

Regards.

T2k - Monday, December 15, 2014

Nonsense. CAT6a or even CAT6 would work just fine.

Daniel Egger - Monday, December 15, 2014

You're missing the point. Sure Cat.6a would be sufficient (it's hard to find Cat.7 sockets anyway but the cabling used nowadays is mostly Cat.7 specced, not Cat.6a) but the problem is to end up with a properly balanced wiring that is capable of properly establishing such a link. Also copper cabling deteriorates over time so the measurement protocol might not be worth snitch by the time you try to establish a 10GBase-T connection...

Cat.6 is only usable with special qualification (TIA-155-A) over short distances.

DCide - Tuesday, December 16, 2014

I don't think T2k's missing the point at all. Those cables will work fine - especially for the target market for this board.

You also had a number of other objections a few weeks ago, when this board was announced. Thankfully most of those have already been answered in the excellent posts here. It's indeed quite possible (and practical) to use the full 10GBase-T bandwidth right now, whether making a single transfer between two machines or serving multiple clients. At the time you said this was *very* difficult, implying no one will be able to take advantage of it. Fortunately, ASRock engineers understood the (very attainable) potential better than this. Hopefully now the market will embrace it, and we'll see more boards like this. Then we'll once again see network speeds that can keep up with everyday storage media (at least for a while).

shodanshok - Tuesday, December 16, 2014

You are right, but the familiar RJ45 & cables can be a strong motivation to go with 10GBase-T in some cases. For a quick example: one of our customers bought two Dell 720xd to use as virtualization boxes. The first R720xd is the active one, while the second 720xd is used as hot-standby being constantly synchronized using DRBD. The two boxes are directly connected with a simple Cat 6e cable.

As the final customer was in charge of both the physical installation and the normal hardware maintenance, familiar networking equipment such as RJ45 ports and cables was strongly favored by him.

Moreover, it is expected that within 2 die shrinks 10GBase-T controllers will become cheap/low power enough that they can be integrated pervasively, similar to how 1GBase-T replaced the old 100 Mb standard.

Regards.

DigitalFreak - Monday, December 15, 2014

Don't know why they went with 8 PCI-E lanes for the 10Gig controller. 4 would have been plenty.

1 PCI-E 3.0 lane is 1GB per second (x4 = 4GB). 10Gig max is 1.25 GB per second, dual port = 2.5 GB per second. Even with overhead you'd still never saturate an x4 link. Could have used the extra x4 for something else.

The Melon - Monday, December 15, 2014

I personally think it would be a perfect board if they replaced the Intel X540 controller with a Mellanox ConnectX-3 dual QSFP solution so we could choose between FDR IB and 40/10/1Gb Ethernet per port.

Either that or simply a version with the same slot layout and drop the Intel X540 chip.

Bottom line though is no matter how they lay it out we will find something to complain about.

Ian Cutress - Tuesday, November 1, 2016

The controller is PCIe 2.0, not PCIe 3.0. You need to use a PCIe 3.0 controller to get PCIe 3.0 speeds.

eanazag - Monday, December 15, 2014

I am assuming we are talking about the free ESXi Hypervisor in the test setup.

SR-IOV (IOMMU) is not an enabled feature on ESXi with the free license. What this means is that networking is going to tax the CPU more heavily. Citrix Xenserver does support SR-IOV on the free product, which it is all free now - you just pay for support. This is a consideration to base the results of the testing methodology used here.

Another good way to test 10GbE is using iSCSI where the server side is a NAS and the single client is where the disk is attached. The iSCSI LUN (hard drive) needs to have something going on with an SSD. It can just be 3 spindle HDDs in RAID 5. You can use disk test software to drive the benchmarking. If you opt to use Xenserver with Windows as the iSCSI client, have the VM directly connect to the NAS instead of using Xenserver to the iSCSI LUN, because you will hit a performance cap from VM to host in the typical add disk within Xen. This is in the older 6.2 version. Creedance is not fully out of beta yet. I have done no testing on Creedance and the contained changes are significant to performance.

About two years ago I was working on coming up with the best iSCSI setup for VMs using HDDs in RAID and SSDs as caches. I was using Intel X540-T2's without a switch. I was working with Nexenta Stor and Sun/Oracle Solaris as iSCSI target servers run on physical hardware, Xen, and VMware. I encountered some interesting behavior in all cases. VMware's sub-storage yielded better hard drive performance. I kept running into an artificial performance limit because of the Windows client and how Xen handles the disks it provides. The recommendation was to add the iSCSI disk directly to the VM as the limit wouldn't show up there. VMware still imposed a performance ding on (Hit>10%) my setup. Physical hardware had the best performance for the NAS side.