The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST. Posted in: Storage

The Instruction That Changes (almost) Everything: TRIM

TRIM is an interesting command. It lets the SSD prioritize blocks for cleaning. In the example I used before, a block is cleaned only when the drive runs out of places to write things and has to dip into its spare area. With TRIM, if you delete a file, the OS sends a TRIM command to the drive along with the associated LBAs that are no longer needed. The TRIM command tells the drive that it can schedule those blocks for cleaning and add them to the pool of replacement blocks.

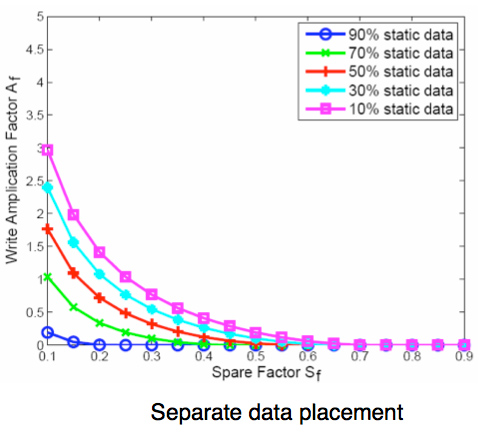
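The effect described above can be sketched with a toy model. This is only an illustration under loud assumptions: the `ToySSD` class and its methods are hypothetical, each LBA is treated as if it mapped to exactly one flash block, and no real controller works this simply.

```python
class ToySSD:
    """Toy model (hypothetical): one LBA == one flash block, no real mapping."""

    def __init__(self, user_blocks, spare_blocks):
        self.user_blocks = user_blocks
        self.spare_blocks = spare_blocks
        self.live_lbas = set()  # LBAs the drive believes still hold valid data

    def write(self, lba):
        if not 0 <= lba < self.user_blocks:
            raise ValueError("LBA out of range")
        self.live_lbas.add(lba)

    def trim(self, lbas):
        # The OS tells the drive these LBAs are no longer needed.
        self.live_lbas.difference_update(lbas)

    def replacement_pool(self):
        # Spare area plus every user block the drive knows is not in use.
        return self.spare_blocks + (self.user_blocks - len(self.live_lbas))


drive = ToySSD(user_blocks=100, spare_blocks=7)
for lba in range(100):
    drive.write(lba)
print(drive.replacement_pool())  # 7: a fully used drive has only its spare area

# Deleting a file without TRIM changes nothing from the drive's point of view.
print(drive.replacement_pool())  # 7

# With TRIM, the OS passes along the LBAs of the deleted file.
drive.trim(range(30))
print(drive.replacement_pool())  # 37
```

In this sketch the only difference between the two delete cases is whether the drive is ever told which LBAs were freed.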

A used SSD will only have its spare area to use as a scratch pad for moving data around; on most consumer drives that’s around 7%. Take a look at this graph from a study IBM did on SSD performance:

Write Amplification vs. Spare Area, courtesy of IBM Zurich Research Laboratory

Note how dramatically write amplification goes down when you increase the percentage of spare area the drive has. In order to get down to a write amplification factor of 1 our spare area needs to be somewhere in the 10 - 30% range, depending on how much of the data on our drive is static.

Remember our pool of replacement blocks? This graph actually assumes that we have multiple pools of replacement blocks: one for frequently changing data (e.g. file tables, pagefile, other random writes) and one for static data (e.g. installed applications, data). If the SSD controller only implements a single pool of replacement blocks, the spare area requirements are much higher:

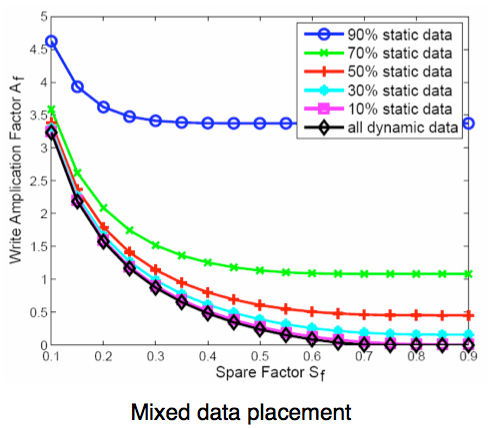

Write Amplification vs. Spare Area, courtesy of IBM Zurich Research Laboratory

We’re looking at a minimum of 30% spare area for this simpler algorithm. Some models don’t even drop down to 1.0x write amplification.

But remember, today’s consumer drives only ship with roughly 6 - 7% spare area on them. That’s under the 10% minimum even from our more sophisticated controller example. By comparison, the enterprise SSDs like Intel’s X25-E ship with more spare area - in this case 20%.

What TRIM does is help give well-architected controllers, like the one in the X25-M, more spare area. Space you’re not using on the drive, space that has been TRIMed, can now be used in the pool of replacement blocks. And as IBM’s study shows, that can go a long way toward improving performance, depending on your workload.
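As a rough illustration of how much TRIMed free space can add, here is a back-of-the-envelope sketch. The function name, the 80 GB capacity, and the choice to express spare area as a fraction of total physical flash are all assumptions made for the example, not figures from any drive's spec sheet.

```python
def effective_spare_fraction(user_gb, factory_spare_gb, trimmed_free_gb):
    """Spare area as a fraction of physical flash, counting TRIMed free space.

    Assumption: every TRIMed gigabyte is fully usable as replacement space.
    """
    if not 0 <= trimmed_free_gb <= user_gb:
        raise ValueError("trimmed free space must fit inside user capacity")
    physical_gb = user_gb + factory_spare_gb
    return (factory_spare_gb + trimmed_free_gb) / physical_gb


# Hypothetical 80 GB drive with 5.6 GB (7% of user capacity) set aside.
full = effective_spare_fraction(80, 5.6, 0)
quarter_free = effective_spare_fraction(80, 5.6, 20)
print(f"no TRIMed space:  {full:.1%}")
print(f"20 GB TRIMed:     {quarter_free:.1%}")
```

Under these assumptions, leaving a quarter of the drive empty and TRIMed moves the effective spare area from the single digits to roughly the range the IBM graphs call for.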

295 Comments


Anand Lal Shimpi - Monday, August 31, 2009 - link

wow I misspelled my own name :) Time to sleep for real this time :)

Take care,

Anand

IntelUser2000 - Monday, August 31, 2009 - link

Looking at pure max TDP and idle power numbers and concluding the power consumption based on those figures is wrong. Look here: http://www.anandtech.com/cpuchipsets...px?i=3403&a...

Modern drives quickly reach idle, even at "load" and at times the user doesn't even notice. Faster drives will reach lower average power because they work faster to get to idle. This is why initial battery life tests showed the X25-M, with much higher active/idle power figures, got better battery life than Samsungs with lower active/idle power.

Max power is important, but unless you are running that app 24/7 it's not realistic at all, especially since the max power benchmarks are designed to get as close to TDP as possible.

Anand Lal Shimpi - Monday, August 31, 2009 - link

I agree, it's more than just max power consumption. I tried to point that out with the last paragraph on the page:

"As I alluded to before, the much higher performance of these drives than a traditional hard drive means that they spend much more time at an idle power state. The Seagate Momentus 5400.6 has roughly the same power characteristics of these two drives, but they outperform the Seagate by a factor of at least 16x. In other words, a good SSD delivers an order of magnitude better performance per watt than even a very efficient hard drive."

I didn't have time to run through some notebook tests to look at impact on battery life but it's something I plan to do in the future.

Take care,

Anand

IntelUser2000 - Monday, August 31, 2009 - link

Thanks, people pay too much attention to just the max TDP and idle power alone. Properly done, no real apps should ever reach max TDP for 100% of the duration its running at.

cristis - Monday, August 31, 2009 - link

page 6: "So we’re at approximately 36 days before I exhaust one out of my ~10,000 write cycles. Multiply that out and it would take 36,000 days" --- wait, isn't that 360,000 days = 986 years?

Anand Lal Shimpi - Monday, August 31, 2009 - link

woops, you're right :) Either way your flash will give out in about 10 years and perfectly wear leveled drives with no write amplification aren't possible regardless.

Take care,

Anand
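For anyone following along, the corrected arithmetic from this exchange can be checked in a few lines. The 36 days per cycle and ~10,000 cycles come from the article text quoted above; the 365-day year is an assumption, and the result still presumes perfect wear leveling with no write amplification.

```python
DAYS_PER_CYCLE = 36      # days to use up one write cycle, per the article
WRITE_CYCLES = 10_000    # approximate rated write cycles, per the article
DAYS_PER_YEAR = 365      # assumption: leap years ignored

total_days = DAYS_PER_CYCLE * WRITE_CYCLES
total_years = total_days / DAYS_PER_YEAR

print(total_days)          # 360000
print(round(total_years))  # 986
```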

cdillon - Monday, August 31, 2009 - link

I gather that you're saying it'll give out after 10 years because a flash cell will lose its stored charge after about 10 years, not because the write-life will be surpassed after 10 years, which doesn't seem to be the case. The 10-year charge life doesn't mean they become useless after 10 years, just that you need to refresh the data before the charge is lost. This makes flash less useful for data archival purposes, but for regular use, who doesn't re-format their system (and thus re-write 100% of the data) at least once every 10 years? :-)

Zheos - Monday, August 31, 2009 - link

"This makes flash less useful for data archival purposes, but for regular use, who doesn't re-format their system (and thus re-write 100% of the data) at least once every 10 years? :-)"

I would like an input on that too, cuz thats a bit confusing.

GourdFreeMan - Tuesday, September 1, 2009 - link

Thermal energy (i.e. heat) allows the electrons trapped in the floating gate to overcome the potential well and escape, causing zeros (represented by a larger concentration of electrons in the floating gate) to eventually become ones (represented by a smaller concentration of electrons in the floating gate). Most SLC flash is rated at about 10 years of data retention at either 20C (68F) or 25C (77F). What Anand doesn't mention is that as a rule of thumb for every 9 degrees C (~16F) that the temperature is raised above that point, data retention lifespan is halved. (This rule of thumb only holds for human habitable temperatures... the exact relation is governed by the Arrhenius equation.)

Wear leveling and error correction codes can be employed to mitigate this problem, which only gets worse as you try to store more bits per cell or use a smaller lithography process without changing materials or design.
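The halving rule of thumb from this comment can be sketched numerically. This is only the rule of thumb as stated, not the Arrhenius equation itself; the function name is hypothetical, and the 25C baseline, 10-year rating, and 9C step are taken from the comment as assumptions rather than from any datasheet.

```python
def retention_years(temp_c, base_years=10.0, base_temp_c=25.0, halving_step_c=9.0):
    """Rule-of-thumb data retention: halves for every 9C above the rated point.

    Assumption: at or below the rated temperature, the rated figure is returned
    unchanged, since the comment only describes temperatures above that point.
    """
    degrees_over = max(0.0, temp_c - base_temp_c)
    return base_years * 0.5 ** (degrees_over / halving_step_c)


for temp in (25, 34, 43, 52):
    print(f"{temp}C: about {retention_years(temp):.2f} years")
```

As the comment notes, this approximation is only meant for ordinary operating temperatures.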

Zheos - Tuesday, September 1, 2009 - link

Thank you GourdFreeMan for the additional input,

But, if we format like every year or so , doesnt the countdown on data retention restart from 0 ? or after ~10 year (seems too be less if like you said temperature affect it) the SSD will not only fail at times but become unusable ? Or if we come to that point a format/reinstall would resolve the problem ?

I dont care about losing data stored after 10 years, what i do care about is if the drive becomes ASSUREDLY unusable after 10 years maximum. For drives that come at a premium price, i don't like this if its the case.