The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST. Posted in Storage

Intel's X25-M 34nm vs 50nm: Not as Straightforward as You'd Think

It took me a while to understand exactly what Intel did with its latest drive, mostly because there are no docs publicly available on either the flash used in the drives or on the controller itself. Intel is always purposefully vague about important details, leaving everything up to clever phrasing of questions and guesswork with tests and numbers before you truly uncover what's going on. But after weeks with the drive, I think I've got it.

| | X25-M Gen 1 | X25-M Gen 2 |
|---|---|---|
| Flash Manufacturing Process | 50nm | 34nm |
| Flash Read Latency | 85 µs | 65 µs |
| Flash Write Latency | 115 µs | 85 µs |
| Random 4KB Reads | Up to 35K IOPS | Up to 35K IOPS |
| Random 4KB Writes | Up to 3.3K IOPS | Up to 6.6K IOPS (80GB), up to 8.6K IOPS (160GB) |
| Sequential Read | Up to 250MB/s | Up to 250MB/s |
| Sequential Write | Up to 70MB/s | Up to 70MB/s |
| Halogen-free | No | Yes |
| Introductory Price | $345 (80GB), $600 - $700 (160GB) | $225 (80GB), $440 (160GB) |
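To put the random I/O ratings on the same scale as the sequential ones, the IOPS figures can be converted to throughput. This is a rough sketch, assuming "4KB" means 4096 bytes and "MB" means 10^6 bytes; the function name is only for illustration.

```python
# Convert the table's "Up to" 4KB random IOPS ratings into throughput.
# Assumptions: 4KB = 4096 bytes, 1MB = 10**6 bytes.

BLOCK_BYTES = 4096

def iops_to_mb_per_s(iops, block_bytes=BLOCK_BYTES):
    """Return throughput in MB/s for an IOPS rating and transfer size."""
    return iops * block_bytes / 1_000_000

ratings = {
    "G1 random write": 3_300,
    "G2 80GB random write": 6_600,
    "G2 160GB random write": 8_600,
    "G1/G2 random read": 35_000,
}

for name, iops in ratings.items():
    print(f"{name}: {iops_to_mb_per_s(iops):.1f} MB/s")
```

Under those assumptions the 160GB G2's 8.6K IOPS rating works out to roughly 35MB/s of 4KB random writes, about half of the 70MB/s sequential write rating.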

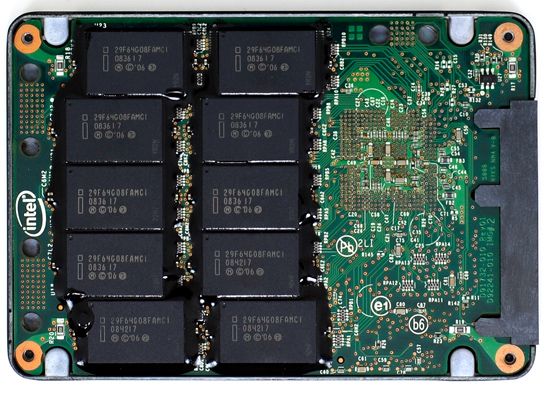

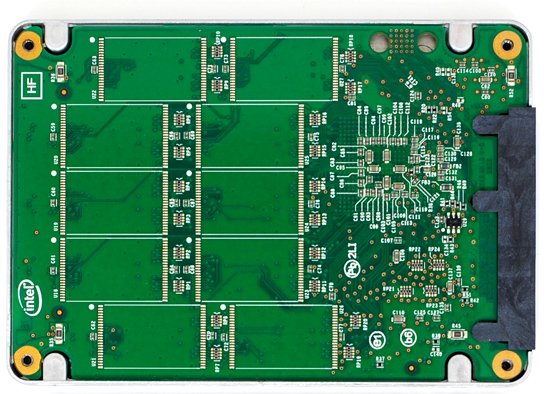

The old X25-M G1

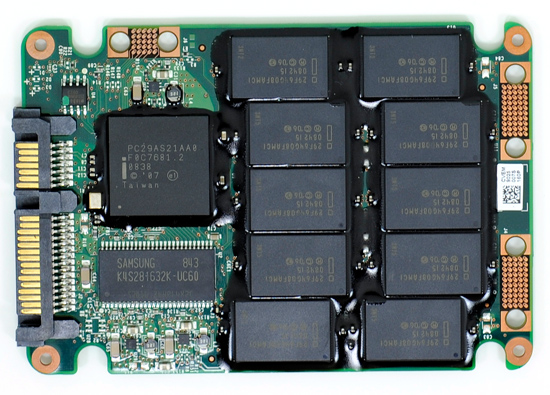

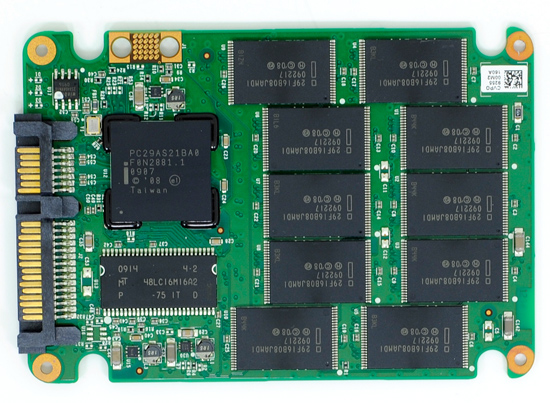

The new X25-M G2

Moving to 34nm flash let Intel drive the price of the X25-M to ultra competitive levels. It also gave Intel the opportunity to tune controller performance a bit. The architecture of the controller hasn't changed, but it is technically a different piece of silicon (that happens to be Halogen-free). What has changed is the firmware itself.

The old controller

The new controller

The new X25-M G2 has twice as much DRAM on-board as the previous drive. The old 160GB drive used a 16MB Samsung 166MHz SDRAM (CAS3):

Goodbye Samsung

The new 160GB G2 drive uses a 32MB Micron 133MHz SDRAM (CAS3):

Hello Micron

More memory means that the drive can track more data and do a better job of keeping itself defragmented and well organized. We see this reflected in the "used" 4KB random write performance, which is around 50% higher than the previous drive.

Intel is now using 16GB flash packages instead of 8GB packages from the original drive. Once 34nm production really ramps up, Intel could outfit the back of the PCB with 10 more chips and deliver a 320GB drive. I wouldn't expect that anytime soon though.
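The capacity arithmetic behind that 320GB figure is simple enough to sketch. The package counts follow from the numbers in the paragraph above (10 more 16GB packages taking a 160GB drive to 320GB); the function name is made up for illustration.

```python
def drive_capacity_gb(packages, gb_per_package):
    """Raw flash capacity for a given number of equal-sized packages."""
    return packages * gb_per_package

# 160GB G2: ten 16GB packages on one side of the PCB
current_g2 = drive_capacity_gb(10, 16)

# Hypothetical drive with ten more packages on the back of the PCB
both_sides = drive_capacity_gb(20, 16)

# The same 160GB built from 8GB packages needs twice as many of them
packages_needed_8gb = 160 // 8

print(current_g2, both_sides, packages_needed_8gb)
```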

The old X25-M G1

The new X25-M G2

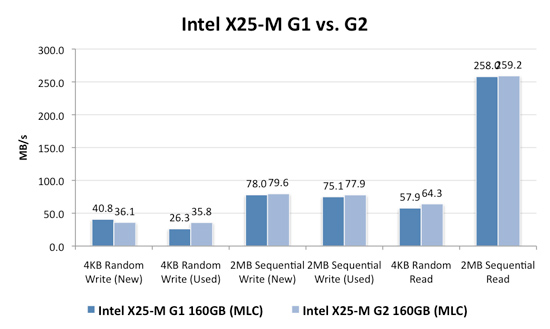

Low level performance of the new drive ranges from no improvement to significant depending on the test:

Note that these results are a bit different than my initial preview. I'm using the latest build of Iometer this time around, instead of the latest version from iometer.org. It does a better job filling the drives and produces more reliable test data in general.

The trend however is clear: the new G2 drive isn't that much faster. In fact, the G2 is slower than the G1 in my 4KB random write test when the drive is brand new. The benefit however is that the G2 doesn't drop in performance when used...at all. Yep, you read that right. In the most strenuous case for any SSD, the new G2 doesn't even break a sweat. That's...just...awesome.

The rest of the numbers are pretty much even, with the exception of 4KB random reads where the G2 is roughly 11% faster.

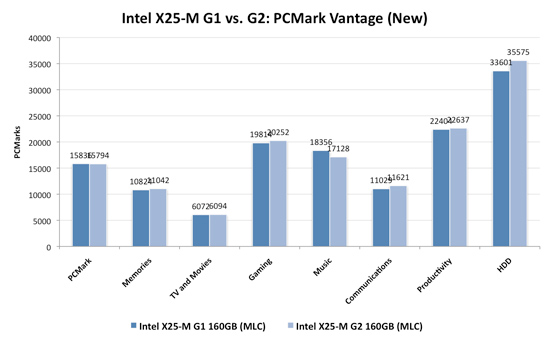

I continue to turn to PCMark Vantage as the closest indication to real world performance I can get for these SSDs, and it echoes my earlier sentiments:

When brand new, the G1 and the G2 are very close in performance. There are some tests where the G2 is faster, others where the G1 is faster. The HDD suite shows the true potential of the G2 and even there we're only looking at a 5.6% performance gain.

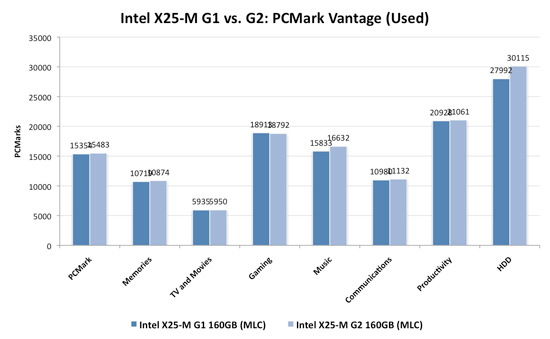

It's in the used state that we see the G2 pull ahead a bit more, but the gap still isn't drastic. The advantage in the HDD suite is around 7.5%, but the rest of the tests are very close. Obviously the major draw to the 34nm drives is their price, but that can't be all there is to it...can it?

The new drives come with TRIM support, albeit not out of the box. Sometime in Q4 of this year, Intel will offer a downloadable firmware that enables TRIM on only the 34nm drives. TRIM on these drives will perform much like TRIM does on the OCZ drives using Indilinx' manual TRIM tool - in other words, restoring performance to almost new.
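Why TRIM restores performance can be shown with a toy model of garbage-collecting a single flash block. This is only a sketch: the 128-page block size is an illustrative assumption, the function is hypothetical, and it ignores the fact that a drive without TRIM eventually learns a page is stale when the same logical address is overwritten.

```python
# Toy model: cleaning one flash block with and without TRIM.
# Assumption: a block holds 128 pages (illustrative number only).

PAGES_PER_BLOCK = 128

def pages_to_relocate(live_pages, deleted_pages, trim_supported):
    """Pages the controller must copy elsewhere before erasing the block.

    live_pages:    pages holding data the file system still uses
    deleted_pages: pages belonging to files the OS has deleted
    """
    if live_pages + deleted_pages > PAGES_PER_BLOCK:
        raise ValueError("block cannot hold that many pages")
    if trim_supported:
        # The OS told the drive the deleted pages are invalid,
        # so only live data has to be preserved.
        return live_pages
    # Without TRIM the drive cannot tell deleted data from live data.
    return live_pages + deleted_pages

print(pages_to_relocate(32, 64, trim_supported=True))   # 32
print(pages_to_relocate(32, 64, trim_supported=False))  # 96
```

Fewer pages copied per erase means less background work competing with new writes, which is the intuition behind TRIM keeping a used drive close to its new performance.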

Because it can more or less rely on being able to TRIM invalid data, the G2 firmware is noticeably different from what's used in the G1. In fact, if we slightly modify the way I tested in the Anthology I can actually get the G1 to outperform the G2 even in PCMark Vantage. In the Anthology, to test the used state of a drive I would first fill the drive then restore my test image onto it. The restore process helped to fragment the drive and make sure the spare-area got some use as well. If we take the same approach but instead of imaging the drive we perform a clean Windows install on it, we end up with a much more fragmented state; it's not a situation you should ever encounter since a fresh install of Windows should be performed on a clean, secure erased drive, but it does give me an excellent way to show exactly what I'm talking about with the G2:

| | PCMark Vantage (New) | PCMark Vantage HDD (New) | PCMark Vantage (Fragmented + Used) | PCMark Vantage HDD (Fragmented + Used) |
|---|---|---|---|---|
| Intel X25-M G1 | 15496 | 32365 | 14921 | 26271 |
| Intel X25-M G2 | 15925 | 33166 | 14622 | 24567 |
| G2 Advantage | 2.8% | 2.5% | -2.0% | -6.5% |
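For anyone who wants to check the "G2 Advantage" row, it is the relative difference between the two scores, taken against the G1. A short sketch using the scores from the table (the function name is just for illustration):

```python
# PCMark Vantage scores from the table: (G1, G2)
scores = {
    "PCMark Vantage (New)": (15496, 15925),
    "PCMark Vantage HDD (New)": (32365, 33166),
    "PCMark Vantage (Fragmented + Used)": (14921, 14622),
    "PCMark Vantage HDD (Fragmented + Used)": (26271, 24567),
}

def advantage_pct(g1_score, g2_score):
    """Percent by which the G2 score exceeds (or trails) the G1 score."""
    return (g2_score - g1_score) / g1_score * 100

for test, (g1, g2) in scores.items():
    print(f"{test}: {advantage_pct(g1, g2):+.1f}%")
# Prints +2.8%, +2.5%, -2.0% and -6.5%, matching the table.
```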

Something definitely changed with the way the G2 handles fragmentation; it doesn't deal with it as elegantly as the G1 did. I don't believe this is a step backwards though. Intel is clearly counting on TRIM to keep the drive from ever getting to the point that the G1 could get to. The tradeoff is most definitely a performance one, and it's probably what gives the G2 its ability to maintain very high random write speeds even while used. I should mention that even without TRIM it's unlikely that the G2 will get to this performance state where it's actually slower than the G1; the test just helps to highlight that there are significant differences between the drives.

Overall the G2 is the better drive, but it's support for TRIM that will ultimately ensure that. The G1 will degrade in performance over time, while the G2 will only lose performance as you fill it with real data. I wonder what else Intel has decided to add to the new firmware...

I hate to say it but this is another example of Intel only delivering what it needs to in order to succeed. There's nothing that keeps the G1 from also having TRIM other than Intel being unwilling to invest the development time to make it happen. I'd be willing to assume that Intel already has TRIM working on the G1 internally and it simply chose not to validate the firmware for public release (an admittedly long process). But from Intel's perspective, why bother?

Even the G1, in its used state, is faster than the fastest Indilinx drive. In 4KB random writes the G1 is even faster than an SLC Indilinx drive. Intel doesn't need to touch the G1, the only thing faster than it is the G2. Still, I do wish that Intel would be generous to its loyal customers that shelled out $600 for the first X25-M. It just seems like the right thing to do. Sigh.

295 Comments


Ardax - Tuesday, September 1, 2009

Installing a non-OEM drive is not going to void the warranty on the rest of the system. And as the other commenter posted, your problem isn't reliability, it's performance. Anand's excellent article shows the performance dropoff of the Samsung drives.

Finally, if you do get another SSD (or still have one currently), definitely disable Prefetching. SuperFetch and ReadyBoost are read-only as far as the SSD is concerned, but Prefetch optimizations do write to the drive. It selectively fragments files so that booting the system and launching the profiled applications do as much sequential reading of the HD as possible. Letting prefetch reorganize all those files is bad on any SSD, and extra bad on one where you're seeing write penalties.

...

And "One More Thing" (apologies to Steve Jobs)! Check out FlashFire (http://flashfire.org/). It's a program designed to help out with low end SSDs. At a very basic view, what it does is use some of your RAM as a massive write-coalescing cache and puts that between the OS and your SSD. It collects a series of small random writes from the OS and applications and tries to turn them into a large sequential write for your SSD. It's beta, and I've never attempted to use it, but if it works for you it might be a life-saver.
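The general idea of a write-coalescing cache can be sketched in a few lines. This is not FlashFire's code, and nothing here describes how FlashFire actually lays data out on the drive; the class, its names, and the flush threshold are all hypothetical. It only shows small writes being buffered in RAM, duplicates being absorbed, and the result going out as one ordered batch.

```python
class CoalescingWriteCache:
    """Buffer small writes in RAM and flush them as one sorted batch."""

    def __init__(self, flush_threshold=8):
        self.flush_threshold = flush_threshold
        self.pending = {}   # block address -> most recent data
        self.flushes = []   # batches that would be sent to the drive

    def write(self, address, data):
        # Rewriting an address replaces the buffered copy, so the
        # drive only ever sees the final version of that block.
        self.pending[address] = data
        if len(self.pending) >= self.flush_threshold:
            self.flush()

    def flush(self):
        if not self.pending:
            return
        # One batch, ordered by address, instead of many scattered writes
        self.flushes.append(sorted(self.pending.items()))
        self.pending.clear()


cache = CoalescingWriteCache(flush_threshold=8)
for addr in [90, 3, 57, 3, 12, 41, 8, 77, 25]:
    cache.write(addr, f"data@{addr}")

print(len(cache.flushes))                    # 1
print([addr for addr, _ in cache.flushes[0]])
```

Nine small writes, one of them a repeat, reach the drive as a single batch of eight blocks. The obvious cost is that anything still sitting in RAM is lost if the machine loses power before a flush.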

heulenwolf - Friday, September 4, 2009

Thanks again for the feedback, Ardax. Duly noted about the Dell warranty. They will continue to warrant the rest of the laptop, AFAIK, even if we install a 3rd party drive.

Can you point to your source on the statements about how prefetch fragments files on the drive? Nothing I've read about it describes it as write intensive.

I'd like to point out that this SSD is not a low-performance unit, the kind Flashfire is supposed to help with. It was one of the fastest drives available last year, before Intel's drives came out and set the curve. When it's performing normally, this system boots Vista in ~30 seconds. It uses SLC flash with an order of magnitude more write cycles than comparable MLC-based drives. Were standard Windows installs the cause of these failures, we would have heard about MLC drives failing similarly within the first month.

It's also a business machine so loading alpha rev software on it for performance optimization isn't really an option. The known issues on Flashfire's site make it not worth the risk until it's more mature.

ggathagan - Wednesday, September 16, 2009

One possible work-around:

I know that, for instance, if I buy a drive from Dell for one of my servers, that drive is covered under the Dell warranty for that server.

Dell sells a Kingston re-branded Intel X25-E drive and a Corsair Extreme SSD drive.

The Corsair Extreme series is an Indilinx SSD drive:

http://www.corsair.com/products/ssd_extreme/defaul...

I don't know if this applies to non Dell-branded products that Dell sells, but it might be worth looking into.

TGressus - Wednesday, September 2, 2009

"Disable Defrag, SuperFetch, ReadyBoost, and Application and Boot Prefetching"

This is keen advice, especially for the OP's laptop SSD usage scenario. Definitely disable these services.

I'd also suggest disabling Automatic Restore Points on the SSD Volume(s) from the System Protection task in the "System" Control Panel. When enabled this setting can generate a lot of file I/O and will add to block fragmentation and eventual garbage collection. http://en.wikipedia.org/wiki/System_Restore

Regular external backup and disk imaging should be just as effective, without the resource penalties.

heulenwolf - Friday, September 4, 2009

TGressus, thanks for the feedback. With the exception of defrag, however, I can't find any real-world data about these services that leads me to believe they place undue wear on the SSD. Sure, disabling them may free up marginal system resources for performance operation, but I haven't heard anything leading me to believe they're the cause of the repeated failures I saw. Since none of those features are check-box-disable-able items (again, beyond defrag) - they seem to require custom registry hacks - I'm not comfortable performing them on my business machine purely for performance optimization.

I guess I've narrowed it down to options A or C from my original question:

A) Am I that 1 in a bazillion case of having gotten a bad system followed by a bad drive followed by another bad drive?

B) Is there something about Vista - beyond auto defrag - that accelerates the wear and tear on these drives

C) Is there something about Samsung's early SSD controllers that drops them to a lower speed under certain conditions (e.g. poorly implemented SMART diagnostics)

D) Is my IT department right and all SSDs are evil ;)?

gstrickler - Monday, August 31, 2009

Drive reliability does not appear to be a problem in your case. There is nothing in your description that indicates the drives failed, only the performance dropped to unacceptable levels. That is exactly the situation described by Anand's earlier tests of SSDs, especially with earlier firmware revisions (any brand) or with heavily used Samsung drives.

I presume your swap space (paging file) is on the SSD? The symptoms you describe would occur if writing to the swap space (which Windows will do during boot up/login) is slow. You might be able to regain some performance and/or delay the reappearance of symptoms by simply moving your swap space off the SSD.

For your purposes, it sounds like your best solution would be to switch to an Intel or Indilinx drive, probably an SLC drive, but a larger MLC drive might work well also. Dell won't warranty the new drive, but it won't "void" your warranty either. You'll still have the remainder of the warranty from Dell on everything except the new SSD, which will be under warranty from the company making the drive. If you have a support contract with Dell, they might try to point to the non-Dell SSD as an issue, but at least with the Gold/Enterprise support group, I have not found Dell to do that type of finger pointing.

The Intel drives are now good at automatically cleaning up with repeated writing, while with an Indilinx drive, you may need to occasionally (perhaps every 6 months) run the "Wiper" utility to restore performance.

Also, you indicate your drive is about 3/4 full; if you can reduce that, you may see less of a performance hit. You can do that by removing some data, moving data to a secondary drive (HD or SSD), or buying a larger SSD.

If you're working with large data files that you're not accessing and updating randomly (e.g. you're not primarily working with a large database), then you might benefit from having your OS and applications on the SSD, but use a HD for your data files and/or temp/swap space. Of course, make sure you have sufficient RAM to minimize any swapping regardless of whether you're using a HD or an SSD.

heulenwolf - Tuesday, September 1, 2009

gstrickler - duly noted about the Dell warranty.

I have to disagree that drive reliability is not the issue for two reasons, only the first of which I'd mentioned before:

1) Dell's diagnostics failed on the SSD

2) Anand's test results show major slowdowns, but not from 100 MB/s to 5 MB/s for both read and write operations. No matter what I did, even writing my own scripts to just read files as fast as it could, I couldn't get read access over 10 MB/s peak with average around 5. It's like the drive switched to an old PIO interface or something. The kinds of slowdowns in Anand's results do not lead to 15 minute boot times.

It's a laptop with only one drive bay so, yes, page file is on the SSD and a second drive isn't really an option. According to the Windows 7 engineering blog, linked by Ardax above, SSDs are a great place to store pagefiles. Since the system has 4 GB of RAM, it's not like the system has undue swap writing going on.

I can't imagine Samsung or Dell selling a drive with a 3 year warranty that would have to be replaced every 6 months under relatively normal OS wear and tear (swapping, prefetch). Vista was well past SP1 at the time the system was bought so they'd had plenty of time to qualify the drive for such uses. They'd both be out of business were this the case.

Agree that the best bet would be to switch brands but I'm kinda stuck on what's wrong with this one. Thanks for the feedback.

gstrickler - Thursday, September 3, 2009

That it failed Dell's diagnostics might indicate a problem, but do you know for certain that Dell's diagnostics pass on a properly functioning Samsung SSD? I don't know the answer to that, but it needs to be answered.

While Anand's tests don't show 90% drops in performance, his tests didn't continue to fragment the drive for months, so performance might continue to drop with increasing fragmentation.

More importantly, I've experienced problems with the Windows page file before, it does slow the system dramatically. Furthermore, the Windows page file does get written to as part of the boot process, so any performance problems with the page file will notably slow the boot, 15 minutes with Vista is not difficult to believe. While I haven't verified this on Vista, with NT4/W2k/XP the caching algorithm will allow reads to trigger paging other items out to disk, so even a simple read test can cause writes to the page file if the amount of data read approaches "free RAM". Again, performance problems with the page file could dramatically affect your results, even for a "read-only" test. Don't be so certain of your diagnosis unless you've eliminated these factors.

You should try removing the page file (or setting it to 2MB), then see what happens to performance.

heulenwolf - Friday, September 4, 2009

I had the same thought about Dell's diagnostics. I've run them again on the latest refurb drive and found that it passes all drive-related tests. Unfortunately, Dell's diagnostics simply spit out an error code which only they hold the secret decoder-ring to so I have no idea what the diagnosis was. This result isn't conclusive but it's another data point.

To more fully describe the testing I did, I wrote a script that generated a random data matrix and timed writing it to file. I then read the data back in, timing only the read, and compared the two datasets to ensure no read/write errors. I looped through this process hundreds to thousands of times with file sizes from 4k up to 50 MB. Since I was using an interpreted language, I don't put much stock in the performance times, however, I was using the lowest level read and write functions available. Additionally, my 5-10 MB/s numbers come from watching Vista's Resource Monitor while the test was running, not from the program. No other measured component of the system was taxed so I don't think the CPU or being near the physical memory limit, for example, was holding it up.
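A test along the lines described above might look roughly like the following. The commenter's actual script and language aren't shown, so this is a reconstruction under assumptions: the function name, the use of a temporary file, and the file sizes in the loop are all illustrative (the comment mentions sizes from 4k up to 50 MB).

```python
import os
import tempfile
import time

def time_write_read(size_bytes, directory=None):
    """Write random data, read it back, return (write_s, read_s, ok)."""
    payload = os.urandom(size_bytes)
    fd, path = tempfile.mkstemp(dir=directory)
    try:
        start = time.perf_counter()
        with os.fdopen(fd, "wb") as f:
            f.write(payload)
            f.flush()
            os.fsync(f.fileno())   # ask the OS to push data to the device
        write_s = time.perf_counter() - start

        start = time.perf_counter()
        with open(path, "rb") as f:
            readback = f.read()
        read_s = time.perf_counter() - start
    finally:
        os.remove(path)
    # Compare the two datasets to catch read/write errors
    return write_s, read_s, readback == payload

# Small sizes keep this example quick; extend the list toward 50 MB
# to mirror the range described in the comment.
for size in (4 * 1024, 64 * 1024, 1024 * 1024):
    w, r, ok = time_write_read(size)
    print(f"{size:>8} bytes  write {w:.6f}s  read {r:.6f}s  match={ok}")
```

One caveat with any script like this: a read that immediately follows the write is likely to be served from the operating system's file cache rather than the drive, so the read timings can look far better than the hardware really is.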

donjuancarlos - Monday, August 31, 2009

I have not found many articles on the net about SSDs and this one is even easy to understand.

The only negative part about this article is the Lenovo T400 I am typing on (it has a Samsung drive :( ). And I have to agree, startup times are nothing special.