The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST - Posted in Storage

A Quick Flash Refresher

DRAM is very fast. Writes happen in nanoseconds, as do CPU clock cycles, so those two get along very well. The problem with DRAM is that it's volatile storage; if the charge stored in each DRAM cell isn't refreshed, it's lost. Pull the plug and whatever you stored in DRAM will eventually disappear (and here, unlike in most cases, "eventually" means fractions of a second).

Magnetic storage, on the other hand, is not very fast. It's faster than writing trillions of numbers down on paper, but compared to DRAM it plain sucks. For starters, magnetic disk storage is mechanical - things have to physically move to read and write. Now it's impressive how fast these things can move and how accurate and relatively reliable they are given their complexity, but to a CPU, they are slow.

The fastest consumer hard drives take 7 milliseconds to read data off of a platter. The fastest consumer CPUs can do something with that data in one hundred thousandth that time.
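To put that ratio in concrete terms, here is a quick back-of-the-envelope calculation. The 7 ms access time and the one-hundred-thousandth ratio come from the text above; the 3 GHz clock speed is an assumption chosen only for illustration.

```python
# Figures from the text: ~7 ms to read data off a platter,
# and a CPU that needs about 1/100,000 of that time.
HDD_ACCESS_NS = 7 * 1_000_000   # 7 ms expressed in nanoseconds
SPEED_RATIO = 100_000           # "one hundred thousandth that time"

# Assumption (not from the article): a 3 GHz CPU, i.e. 3 cycles per nanosecond.
ASSUMED_CYCLES_PER_NS = 3

cpu_time_ns = HDD_ACCESS_NS // SPEED_RATIO
cycles_spent_waiting = HDD_ACCESS_NS * ASSUMED_CYCLES_PER_NS

print(f"CPU-side time budget: {cpu_time_ns} ns")
print(f"Cycles an assumed 3 GHz core waits per disk access: {cycles_spent_waiting:,}")
```

Under that assumed clock speed, a single disk access costs the CPU tens of millions of cycles of waiting.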

The only reason we put up with mechanical storage (HDDs) is that they are cheap, store tons of data and are non-volatile: the data is still there even when you turn 'em off.

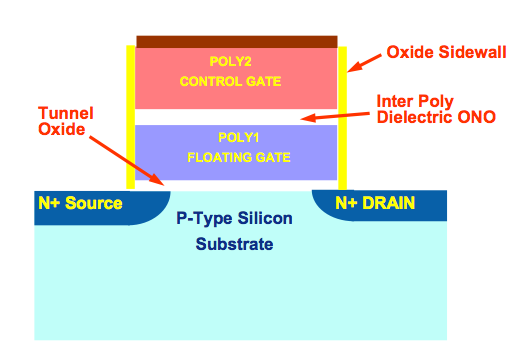

NAND flash gives us the best of both worlds. It is effectively non-volatile (flash cells can lose their charge, but only after about a decade) and relatively fast (data accesses take microseconds, not milliseconds). Through electron tunneling a charge is inserted into an N-channel MOSFET. Once the charge is in there, it's there for good - no refreshing necessary.

N-Channel MOSFET. One per bit in a NAND flash chip.

One MOSFET is good for one bit. Group billions of these MOSFETs together, in silicon, and you've got a multi-gigabyte NAND flash chip.

The MOSFETs are organized into lines, and the lines into groups called pages. These days a page is usually 4KB in size. NAND flash can't be written to one bit at a time; it's written at the page level, so 4KB at a time. Once you write the data though, it's there for good. Erasing is a bit more complicated.

To coax the charge out of the MOSFETs requires a bit more effort, and the way NAND flash works is that you can't discharge a single MOSFET; you have to erase in larger groups called blocks. NAND blocks are commonly 128 pages, which means that if you want to rewrite a page in flash you first have to erase it and the 127 other pages in its block. And allow me to repeat myself: if you want to overwrite 4KB of data in a full block, you need to erase and rewrite 512KB of data.
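The arithmetic behind that 512KB figure can be sketched in a few lines. The page and block sizes are the ones quoted above; the function names are made up for this illustration, and the model assumes the naive case where the controller erases and rewrites the whole block in place.

```python
PAGE_SIZE_KB = 4        # page size quoted in the text
PAGES_PER_BLOCK = 128   # pages per block quoted in the text


def block_size_kb(page_kb=PAGE_SIZE_KB, pages=PAGES_PER_BLOCK):
    """Size of one erase block in KB."""
    return page_kb * pages


def naive_write_amplification(pages_changed, page_kb=PAGE_SIZE_KB,
                              pages=PAGES_PER_BLOCK):
    """Ratio of data physically rewritten to data the host asked to change,
    assuming the whole (full) block is erased and rewritten in place."""
    if not 1 <= pages_changed <= pages:
        raise ValueError("pages_changed must be between 1 and pages per block")
    requested_kb = pages_changed * page_kb
    return block_size_kb(page_kb, pages) / requested_kb


print(block_size_kb())               # KB erased and rewritten per block
print(naive_write_amplification(1))  # cost multiplier for a single 4KB overwrite
```

In this worst case, changing one page costs as much flash activity as changing all 128, which is why controllers try hard to avoid in-place rewrites.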

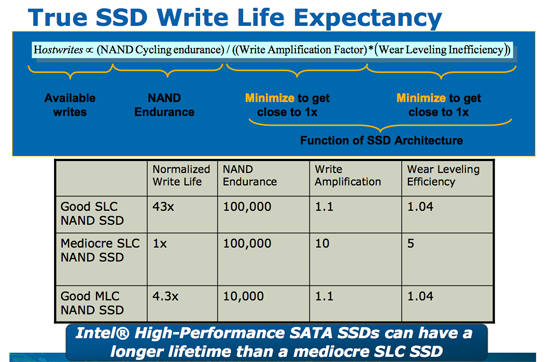

To make matters worse, every time you write to a flash page you reduce its lifespan. The JEDEC spec for MLC (multi-level cell) flash is 10,000 writes before the flash can start to fail.
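For a rough sense of what a 10,000-write limit means, the sketch below estimates an idealized drive lifetime. Only the 10,000 figure comes from the text; the drive capacity, the daily write volume and the write amplification values are hypothetical inputs, and the model assumes writes are spread perfectly evenly across all cells, which real drives only approximate.

```python
def estimated_lifetime_years(capacity_gb, host_writes_gb_per_day,
                             write_cycles=10_000, write_amplification=1.0):
    """Idealized upper bound on drive life, assuming perfectly even wear.

    write_cycles: per-cell write limit (10,000 for MLC, per the text).
    write_amplification: physical writes per host write (hypothetical input).
    """
    total_writable_gb = capacity_gb * write_cycles
    physical_gb_per_day = host_writes_gb_per_day * write_amplification
    return total_writable_gb / physical_gb_per_day / 365


# Hypothetical example: an 80 GB drive with 20 GB of host writes per day.
best_case = estimated_lifetime_years(80, 20)
amplified = estimated_lifetime_years(80, 20, write_amplification=10.0)
print(f"ideal: {best_case:.1f} years, with 10x amplification: {amplified:.1f} years")
```

The point of the comparison is that the cycle limit alone is rarely the problem; how much extra writing the controller causes matters just as much.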

Dealing with all of these issues requires that controllers get very crafty with how they manage writes. A good controller must split writes up among as many flash channels as possible, while avoiding writing to the same pages over and over again. It must also deal with the fact that some data is going to get frequently updated while other data will remain stagnant for days, weeks, months or even years. It has to detect all of this and organize the drive in real time without knowing anything about how it is you're using your computer.

It's a tough job.

But not impossible.
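One way to avoid writing to the same pages over and over is to always direct new writes to the least-worn free block. The toy model below illustrates that idea only; it is not how any particular controller works, and the class and function names are invented for this sketch.

```python
import heapq


class ToyWearLeveler:
    """Toy model: hand out the free block with the fewest erases so far."""

    def __init__(self, num_blocks):
        self.erase_counts = [0] * num_blocks
        # Min-heap of (erase_count, block_id) for blocks that are free.
        self.free = [(0, block) for block in range(num_blocks)]
        heapq.heapify(self.free)

    def allocate(self):
        """Return the least-erased free block."""
        if not self.free:
            raise RuntimeError("no free blocks")
        _, block = heapq.heappop(self.free)
        return block

    def release(self, block):
        """Erase a block and put it back in the free pool."""
        self.erase_counts[block] += 1
        heapq.heappush(self.free, (self.erase_counts[block], block))


def simulate(num_blocks=8, rewrites=800):
    """Rewrite the same logical data repeatedly and report per-block erases."""
    leveler = ToyWearLeveler(num_blocks)
    for _ in range(rewrites):
        block = leveler.allocate()   # new copy goes to the least-worn block
        leveler.release(block)       # old copy is erased and recycled
    return leveler.erase_counts


counts = simulate()
print(counts, "spread:", max(counts) - min(counts))
```

Without leveling, all 800 rewrites would land on one block; here the erases are spread across every block. A real controller also has to handle the hot-versus-stagnant data problem described above, which this sketch ignores.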

295 Comments

GourdFreeMan - Tuesday, September 1, 2009 - link

You would, in fact, be incorrect. I refer you to ANSI/IEEE Std 1084-1986, which defines kilo, mega, etc. as powers of two when used to refer to sizes of computer storage. It was common practice to use such definitions in Computer Science from the 1970s until standards were changed in 1991. As many people reading Anandtech received their formal education during this time period, it is understandable that the usage is still commonplace.

Undersea - Monday, August 31, 2009 - link

Where was this article two weeks ago before I bought my OCZ Summit? I hope this little article will jump start Samsung.

Thanks for all the hard work :)

FrancoisD - Monday, August 31, 2009 - link

Hi Anand,

Great article, as always. I've been following your site since the beginning and it's still the best one out there today!

I mainly use Macs these days and was wondering if you knew anything about Apple's plans for TRIM??

Thanks for all the fantastic work, very technical yet easy to understand.

François

Anand Lal Shimpi - Monday, August 31, 2009 - link

Thanks for your support over the years :)

No word on Apple's plans for TRIM yet, I am digging though...

Take care,

Anand

Dynotaku - Monday, August 31, 2009 - link

Amazing article as always, now I just need one that shows me how to install just Win 7 and my Steam folder to the SSD and move Program Files and "My Documents" or whatever it's called in Win7 to a mechanical disk.

GullLars - Monday, August 31, 2009 - link

A really great article with loads of data.

I only have one complaint. The 4KB random read/write tests in IOmeter were done with QD=3; this simulates a really light workload, and does not allow the controllers to make use of the potential of all their flash channels. I've seen Intel's X25-M scale up to 130-140 MB/s of 4KB random read @ QD=64 (medium load) with AHCI activated. I have not yet tested my Vertex SSDs or Mtron Pros, but I suspect they also scale well beyond QD=3.

It would also be useful to compare the different tests in the HDD suite in PCMark Vantage instead of only the total score.

Anand Lal Shimpi - Monday, August 31, 2009 - link

The reason I chose a queue depth of 3 is because that's, on average, what I found when I tried heavily (but realistically) loading some Windows desktop machines. I rarely found a queue depth over 5. The super high QDs are great for enterprise workloads but I don't believe they do a good job at showcasing single user desktop/notebook performance.

I agree about the individual HDD suite tests, I was just trying to cut down on the number of graphs everyone had to mow through :)

Take care,

Anand

heulenwolf - Monday, August 31, 2009 - link

Anand,

I'd like to add my thanks to the many in the comments. Your articles really do stand out in their completeness and clarity. Well done.

I'm hoping you or someone else in the forums can shed some light on a problem I'm having. I got talked into getting a Dell "Ultraperformance" SSD for my new work system last year. It's a Samsung-branded SLC SSD with 64 GB capacity. As your results predict, it's really snappy when it's first loaded and performance degrades after a few months with the drive ~3/4 full. One thing I haven't seen predicted, though, is that the drives have only lasted 6 months. The first system I received was so unstable without explanation that we convinced Dell to replace the entire machine. Since then, I've moved on to my second SSD refurb replacement under warranty. In both SSD failures, the drive worked normally for ~6 months, then performance dropped to 5-10 MB/sec, Vista boot times went up to ~15 minutes, and I paid dearly in time for every single click and keypress. Once everything finally loaded, the system behaved almost normally. Dell's own diagnostics pointed to bad drives, yet, in each case, the bad SSD continued to work, just at super slow speeds. I was careful to disable Vista's automatic defrag with every install.

My IT staff has blamestormed first Vista (we're still mostly an XP shop) and now SSDs in general as the culprit. They want me to turn in the SSD and replace it with a magnetic hard drive. So, my question is how to explain this:

A) Am I that 1 in a bazillion case of having gotten a bad system followed by a bad drive followed by another bad drive?

B) Is there something about Vista - beyond auto defrag - that accelerates the wear and tear on these drives?

C) Is there something about Samsung's early SSD controllers that drops them to a lower speed under certain conditions (e.g. poorly implemented SMART diagnostics)?

D) Is my IT department right and all SSDs are evil ;)?

Ardax - Monday, August 31, 2009 - link

Well, first you could point them to this article to point out how bad the Samsung SSDs are. Replace it with an Intel or Indilinx-based drive and you should be fine. Anecdotes so far indicate that people have been beating on them for months.

As far as configuring Vista for SSD usage, MS posted in the Engineering Windows 7 Blog about what they're doing for SSDs. Article Link: http://blogs.msdn.com/e7/archive/2009/05/05/suppor...

The short version of it is this: Disable Defrag, SuperFetch, ReadyBoost, and Application and Boot Prefetching. All these technologies were created to work around the low random read/write performance of traditional HDs and are unnecessary (or unhealthy, in the case of defrag) with SSDs.

heulenwolf - Monday, August 31, 2009 - link

Thanks for the reply, Ardax. Unfortunately, the choice of SSD brand was Dell's. As Anand points out, OEM sales is where Samsung seems to have a corner on the market. The choices are: Samsung "Ultraperformance" SSD, Samsung not-so-ultraperformance SSD, Magnetic HDD, or void the warranty by installing a non-Dell part. I could ask that we buy a non-Dell SSD but since installing it would preclude further warranty support from Dell and all SSDs have become the scapegoat, I doubt my request would be accepted. Additionally, the article doesn't say much about drive reliability, which is the fundamental problem in my case.

I'll look into the linked recommendations on Win 7 and SSDs. I had already done some research on these features and found the general consensus to be that leaving any of them enabled (with the exception of defrag) should do no harm.