The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST - Posted in Storage

The Cleaning Lady and Write Amplification

Imagine you’re running a cafeteria. This is the real world, so your cafeteria has a finite number of plates, say 200 for the entire cafeteria. Your cafeteria is open for dinner, and over the course of the night you may serve a total of 1000 people. Guests outnumber plates 5-to-1, but thankfully they don’t all eat at once.

You’ve got a dishwasher who cleans the dirty dishes as the tables are bussed and then puts them in a pile of clean dishes for the servers to use as new diners arrive.

Pretty basic, right? That’s how an SSD works.

Remember the rules: you can read from and write to pages, but you must erase entire blocks at a time. If a block is full of invalid pages (files that have been overwritten at the file system level for example), it must be erased before it can be written to.

All SSDs have a dishwasher of sorts, except instead of cleaning dishes, its job is to clean NAND blocks and prep them for use. The cleaning algorithms don’t really kick in when the drive is new, but put a few days, weeks or months of use on the drive and cleaning will become a regular part of its routine.

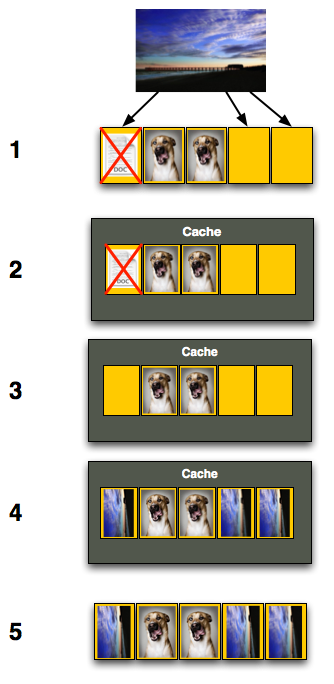

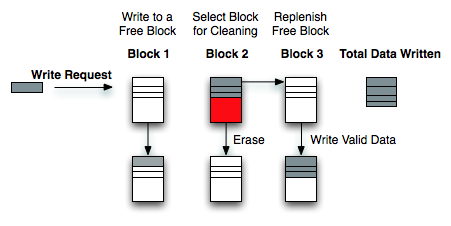

Remember this picture?

It (roughly) describes what happens when you go to write a page of data to a block that’s full of both valid and invalid pages.

In actuality the write happens more like this: a new block is allocated, the valid data is copied to the new block (along with the data you wish to write), and the old block is sent for cleaning and emerges completely wiped. The old block is then added to the pool of empty blocks. As the controller needs them, blocks are pulled from this pool and used, and the old blocks they replace are recycled back into it.
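To make that sequence concrete, here is a minimal Python sketch of a single write landing on a block with no erased pages left. It's a toy model, not any controller's actual firmware: blocks are plain lists, `None` marks an invalid page, and the names `rewrite_block` and `free_pool` are made up for illustration.

```python
from collections import deque

def rewrite_block(old_block, new_page, free_pool):
    """Write new_page when old_block has no erased pages left.

    old_block is a list of page contents; None marks an invalid page.
    Returns (new_block, pages_programmed).
    """
    new_block = free_pool.popleft()              # allocate an erased block
    valid = [p for p in old_block if p is not None]
    new_block.extend(valid)                      # copy the still-valid data
    new_block.append(new_page)                   # plus the page the host asked for
    old_block.clear()                            # erase the old block as a whole
    free_pool.append(old_block)                  # and recycle it into the pool
    return new_block, len(valid) + 1

free_pool = deque([[], []])                      # two erased blocks (assumed)
old = ["A", None, "C", None]                     # 2 valid pages, 2 invalid
block, programmed = rewrite_block(old, "E", free_pool)
print(block, programmed)                         # ['A', 'C', 'E'] 3
```

The host asked for one page, but the model programs three: the two surviving pages plus the new one. The free pool ends up the same size it started, because the erased old block replaces the one that was pulled.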

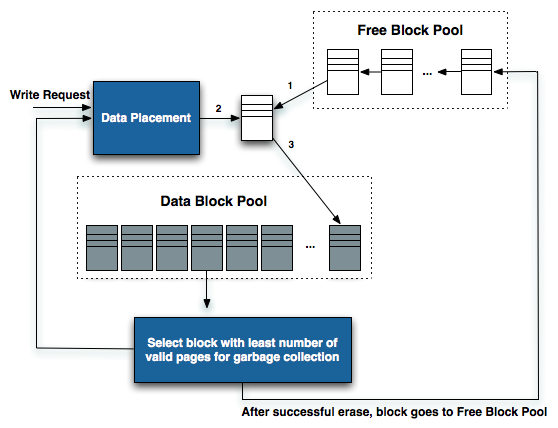

IBM's Zurich Research Laboratory actually made a wonderful diagram of how this works, but it's a bit more complicated than I need it to be for my example here today so I've remade the diagram and simplified it a bit:

The diagram explains what I just outlined above. A write request comes in, a new block is allocated and used then added to the list of used blocks. The blocks with the least amount of valid data (or the most invalid data) are scheduled for garbage collection, cleaned and added to the free block pool.
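The "least valid data first" policy described above can be sketched in a few lines of Python. This is an assumption-laden illustration of a greedy victim pick, not a description of how any shipping drive chooses blocks; `valid_count` and `collect_one` are hypothetical helper names, and `None` again stands in for an invalid page.

```python
def valid_count(block):
    """Number of pages in the block that still hold live data."""
    return sum(page is not None for page in block)

def collect_one(used_blocks, free_pool):
    """Clean the used block with the fewest valid pages.

    Returns the valid pages that have to be rewritten somewhere else.
    """
    idx = min(range(len(used_blocks)),
              key=lambda i: valid_count(used_blocks[i]))
    victim = used_blocks.pop(idx)                # cheapest block to clean
    survivors = [p for p in victim if p is not None]
    victim.clear()                               # erase the whole block
    free_pool.append(victim)                     # back into the free pool
    return survivors

used = [["A", "B", None, "D"],
        [None, None, "G", None],
        ["I", None, None, "L"]]
free = []
moved = collect_one(used, free)
print(moved, len(used), len(free))               # ['G'] 2 1
```

Picking the block with the fewest valid pages means the fewest pages have to be copied before the erase, which is exactly why this kind of policy keeps the extra writes down.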

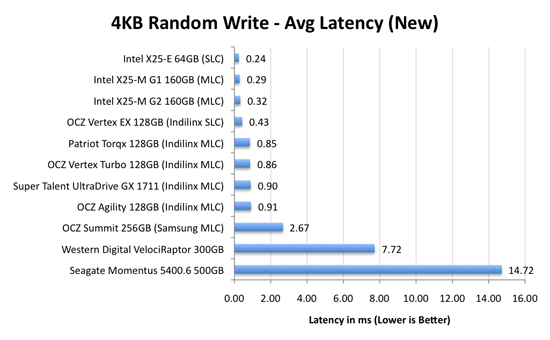

We can actually see this in action if we look at write latencies:

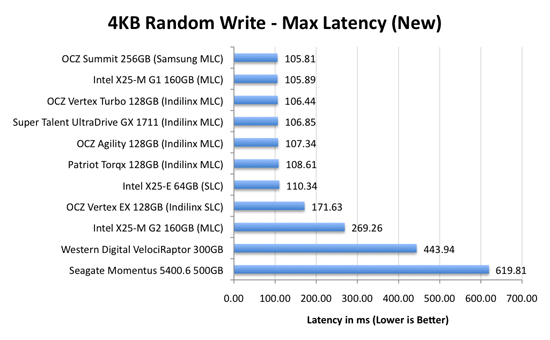

Average write latencies for writing to an SSD, even with random data, are extremely low. But take a look at the max latencies:

While average latencies are very low, the max latencies are around 350x higher. They are still low compared to a mechanical hard disk, but what's going on to make the max latency so high? All of the cleaning and reorganization I've been talking about. It rarely makes a noticeable impact on performance (hence the ultra low average latencies), but this is an example of it happening.

And this is where write amplification comes in.

In the diagram above we see another angle on what happens when a write comes in. A free block is used (when available) for the incoming write. That's not the only write that happens, however; eventually you have to perform some garbage collection so you don't run out of free blocks. The block with the most invalid data is selected for cleaning; its valid data is copied to another block, after which the previous block is erased and added to the free block pool. In the diagram above you'll see the size of our write request on the left, but on the very right you'll see how much data was actually written when you take into account garbage collection. This inequality is called write amplification.

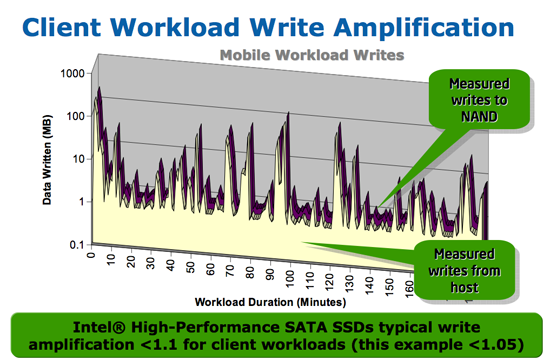

Intel claims very low write amplification on its drives, although over the lifespan of your drive a < 1.1 factor seems highly unlikely.

The write amplification factor is the amount of data the SSD controller has to write in relation to the amount of data that the host controller wants to write. A write amplification factor of 1 is perfect: it means you wanted to write 1MB and the SSD’s controller wrote 1MB. A write amplification factor greater than 1 isn't desirable, but it's an unfortunate fact of life. The higher your write amplification, the quicker your drive will die and the lower its performance will be. Write amplification, bad.
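The ratio itself is simple arithmetic. Here is a small Python sketch of it; the function name `write_amplification` and the byte counts are hypothetical, chosen only to illustrate the definition above, and are not measurements from any drive.

```python
def write_amplification(host_bytes, nand_bytes):
    """Data the controller wrote to NAND divided by data the host asked to write."""
    if host_bytes <= 0:
        raise ValueError("host_bytes must be positive")
    return nand_bytes / host_bytes

MB = 1024 * 1024

# The perfect case: the host wants 1MB written and the controller writes 1MB.
perfect = write_amplification(1 * MB, 1 * MB)

# An illustrative bad case: garbage collection had to move 2MB of valid
# data to make room, so 3MB hit the NAND for a 1MB host write.
amplified = write_amplification(1 * MB, 3 * MB)

print(perfect, amplified)                        # 1.0 3.0
```

In the second case the flash wears three times as fast as the host's write traffic alone would suggest.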

295 Comments

nemitech - Monday, August 31, 2009 - link

oops - not ebay - it was NEWEGG.

Loki726 - Monday, August 31, 2009 - link

Thanks a ton for including the pidgin compiler benchmarks. I didn't think that HD performance would make much of a difference (linking large builds might be a different story), but it is great to have numbers to back up that intuition. Keep it up.

torsteinowich - Monday, August 31, 2009 - link

Hi

You write that the Indilinx wiper tool collects a free page list from the OS, then wipes the pages. This sounds like a dangerous operation to me since the OS might allocate some of these blocks after the tool collects the list, but before they are wiped.

Have you received a good explanation from Indilinx about how they ensure file system integrity? As far as i know Windows cannot temporarily switch to read-only mode on an active file system (at least not the system drive). The only way i could see this tool working safely would be by booting off a different media and accessing the file system to be trimmed offline with a tool that correctly identifies the unused pages for the particular file system being used. I could be wrong of course, maybe windows 7 has a system call to temporarily freeze FS writes, but i doubt it.

has407 - Monday, August 31, 2009 - link

It: (1) creates a large temporary file (wiper.dat) which gobbles up all (or most) of the free space; (2) determines the LBA's occupied by that file; (3) tells the SSD to TRIM those LBA's; and then (4) deletes the temporary file (wiper.dat).

From the OS/filesystem perspective, it's just another app and another file. (A similar technique is used by, e.g., sysinternals Windows SDelete app to zero free space. For Windows you could also probably use the hooks used by defrag utilities to accomplish it, but that would be a lot more work.)

cghebert - Monday, August 31, 2009 - link

Anand,

Great article. Once again you have outclassed pretty much every other site out there with the depth of content in this review. You should start marketing t-shirts that say "Everything I learned about SSDs I learned from AnandTech"

I did have a question about gaming benchmarks, since you made this statement:

" but as you'll see later on in my gaming tests the benefits of an SSD really vary depending on the game"

But I never saw any gaming benchmarks. Did I miss something?

nafhan - Monday, August 31, 2009 - link

Just wanted to say awesome review.

I've been reading Anandtech since 2000, and while other sites have gone downhill or (apparently) succumbed to pressure from advertisers, you guys have continued to give in depth, critical reviews.

I also appreciate that you do some real analysis instead of just throwing 10 pages of charts online.

Thanks, and keep up the good work!

zysurge - Monday, August 31, 2009 - link

Awesome amazing article. So much information, presented clearly.

Question, though? I have an Intel G2 160GB drive coming in the next few days for my Dell D830 laptop, which will be running Windows 7 x64.

Do I set the controller to ATA and use the Intel Matrix driver, or set it to AHCI and use Microsoft's driver? Will either provide an advantage? I realize neither will provide TRIM until Q4, but after the firmware update, both should, right?

Thanks in advance!

ggathagan - Wednesday, September 16, 2009 - link

From page 15 (Early Trim support...):

Under Windows 7 that means you have to use a Microsoft made IDE or AHCI driver (you can't install chipset drivers from anyone else).

Mumrik - Monday, August 31, 2009 - link

but I can't live with less than 300GB on that drive, and SSDs in usable sizes still cost more than high end video cards :-(

I really hope I'll be able to pick up a 300GB drive for 100-200 bucks in a year or so, but it is probably a bit too optimistic.

Simen1 - Monday, August 31, 2009 - link

This is simply wrong. Ask anyone over 10 years old if they think this mathematical statement is true or false. 80 can never equal 74,5.

Now, someone claims that 1 GB = 10^9 B and others claim that 1 GB is 2^30 B. Who is really right? What does the G and the B mean? Who defines that?

The answer is easy to find and document. B means Byte. G stands for Giga and means 10^9, not 2^30. Giga is defined in the international system of units, SI.

No standardization organization has _ever_ defined Giga to be 2^30. But the IEC, the International Electrotechnical Commission, has defined "Gi" as 2^30. This is supposed to be used for digital storage so people won't be confused by all the misunderstandings around this. Misunderstandings that mainly come from Microsoft and quite a few other big software vendors. Companies that ignore the mathematical errors in their software when they claim that 80GB = 74,5 GB, and ignore both international standards on how to shorten large numbers.