The SSD Relapse: Understanding and Choosing the Best SSD

by Anand Lal Shimpi on August 30, 2009 12:00 AM EST. Posted in: Storage

The Cleaning Lady and Write Amplification

Imagine you’re running a cafeteria. This is the real world and your cafeteria has a finite number of plates, say 200 for the entire cafeteria. Your cafeteria is open for dinner and over the course of the night you may serve a total of 1000 people. The guests outnumber the plates 5-to-1; thankfully, they don’t all eat at once.

You’ve got a dishwasher who cleans the dirty dishes as the tables are bussed and then puts them in a pile of clean dishes for the servers to use as new diners arrive.

Pretty basic, right? That’s how an SSD works.

Remember the rules: you can read from and write to pages, but you must erase entire blocks at a time. If a block is full of invalid pages (files that have been overwritten at the file system level for example), it must be erased before it can be written to.

All SSDs have a dishwasher of sorts, except instead of cleaning dishes, its job is to clean NAND blocks and prep them for use. The cleaning algorithms don’t really kick in when the drive is new, but put a few days, weeks or months of use on the drive and cleaning will become a regular part of its routine.

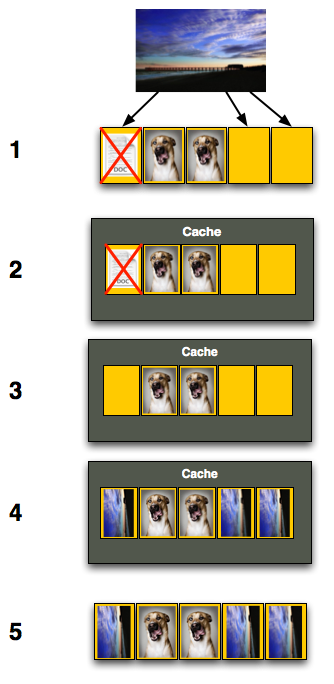

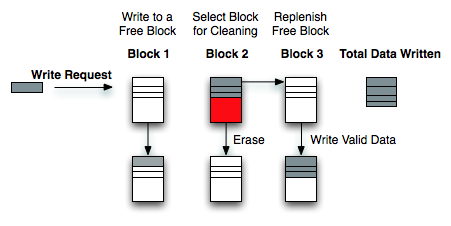

Remember this picture?

It (roughly) describes what happens when you go to write a page of data to a block that’s full of both valid and invalid pages.

In actuality the write happens more like this: a new block is allocated, valid data is copied to the new block (including the data you wish to write), and the old block is sent for cleaning and emerges completely wiped. The old block is then added to the pool of empty blocks. As the controller needs them, blocks are pulled from this pool and used, and old blocks are recycled back into it once they have been cleaned.
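To make that sequence concrete, here is a minimal Python sketch of it. It is an illustration under loud assumptions, not how any particular controller is implemented: a block is modeled as a plain list of pages, the free pool is a `deque`, and the names `rewrite_page`, `INVALID` and `ERASED` are hypothetical ones invented for this example.

```python
from collections import deque

INVALID = object()  # hypothetical marker: page whose data was overwritten elsewhere
ERASED = None       # hypothetical marker: erased page, ready to be written


def rewrite_page(old_block, page_index, new_data, free_pool):
    """Write one page by moving the block's valid data into a fresh block."""
    # Allocate an empty block from the pool of clean blocks.
    new_block = free_pool.popleft()

    # Copy the valid pages across, substituting the page being written.
    for i, page in enumerate(old_block):
        if i == page_index:
            new_block[i] = new_data
        elif page is not INVALID and page is not ERASED:
            new_block[i] = page

    # "Cleaning": the old block is erased as a whole, never page by page.
    for i in range(len(old_block)):
        old_block[i] = ERASED

    # The wiped block goes back into the pool for later reuse.
    free_pool.append(old_block)
    return new_block
```

With a pool of two empty four-page blocks, rewriting page 1 of `["A", INVALID, "C", INVALID]` yields a new block holding `"A"`, the new data and `"C"`, while the old block comes back wiped and sits at the end of the pool.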

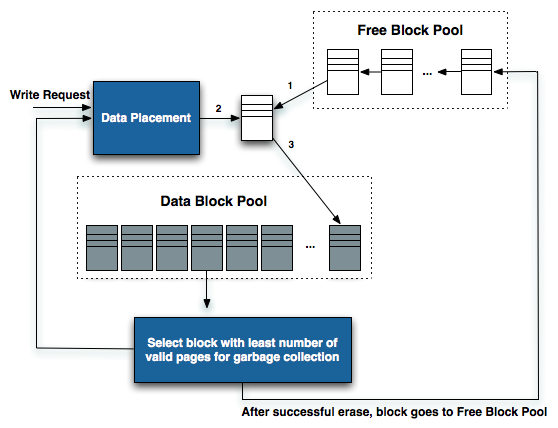

IBM's Zurich Research Laboratory actually made a wonderful diagram of how this works, but it's a bit more complicated than I need it to be for my example here today so I've remade the diagram and simplified it a bit:

The diagram explains what I just outlined above. A write request comes in, a new block is allocated and used then added to the list of used blocks. The blocks with the least amount of valid data (or the most invalid data) are scheduled for garbage collection, cleaned and added to the free block pool.
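The selection rule described here (clean the block with the least valid data first) can be sketched in a few lines of Python. This is a simplified model and an assumption on my part: pages are just the strings `"valid"`, `"invalid"` or `"erased"`, and the function names are hypothetical. Real controllers weigh other factors that this sketch ignores.

```python
def valid_pages(block):
    """Count the pages in a block that still hold live data."""
    return sum(1 for state in block if state == "valid")


def collect_one_block(used_blocks, free_blocks):
    """Clean the used block with the least valid data.

    Returns how many valid pages had to be copied elsewhere before the erase.
    """
    # The block with the fewest valid pages is the cheapest one to clean.
    victim = min(used_blocks, key=valid_pages)
    used_blocks.remove(victim)

    # Those valid pages must be rewritten to another block (not modeled here).
    relocated = valid_pages(victim)

    # After the erase, the block rejoins the free block pool.
    free_blocks.append(["erased"] * len(victim))
    return relocated
```

The return value is the interesting part: every relocated page is a write the host never asked for.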

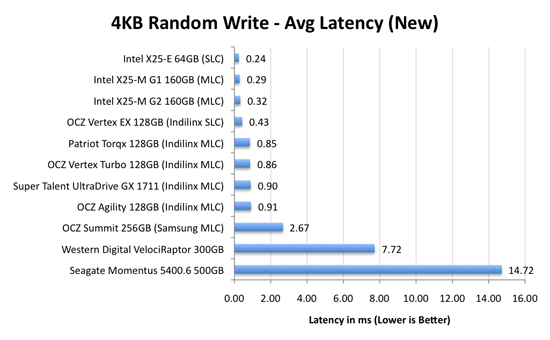

We can actually see this in action if we look at write latencies:

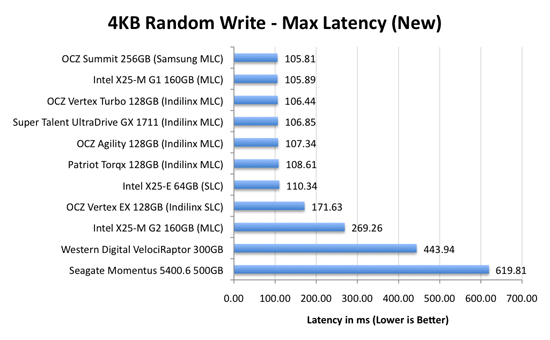

Average write latencies for writing to an SSD, even with random data, are extremely low. But take a look at the max latencies:

While average latencies are very low, the max latencies are around 350x higher. They are still low compared to a mechanical hard disk, but what's going on to make the max latency so high? All of the cleaning and reorganization I've been talking about. It rarely makes a noticeable impact on performance (hence the ultra low average latencies), but this is an example of it happening.

And this is where write amplification comes in.

In the diagram above we see another angle on what happens when a write comes in. A free block is used (when available) for the incoming write. That's not the only write that happens, however. Eventually you have to perform some garbage collection so you don't run out of free blocks. The block with the most invalid data is selected for cleaning; its valid data is copied to another block, after which the previous block is erased and added to the free block pool. In the diagram above you'll see the size of our write request on the left, but on the very right you'll see how much data was actually written when you take into account garbage collection. This inequality is called write amplification.

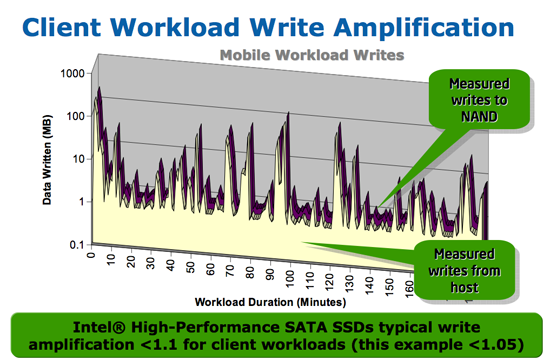

Intel claims very low write amplification on its drives, although over the lifespan of your drive a < 1.1 factor seems highly unlikely.

The write amplification factor is the amount of data the SSD controller has to write in relation to the amount of data that the host controller wants to write. A write amplification factor of 1 is perfect: it means you wanted to write 1MB and the SSD’s controller wrote 1MB. A write amplification factor greater than 1 isn't desirable, but it is an unfortunate fact of life. The higher your write amplification, the quicker your drive will die and the lower its performance will be. Write amplification, bad.
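As a quick worked example, the ratio can be computed directly. The function name and the scenario below are illustrative assumptions: I'm assuming 4KB pages and a case where garbage collection had to relocate three valid pages in order to service a single-page host write.

```python
def write_amplification(host_bytes, nand_bytes):
    """Ratio of data actually written to NAND to data the host asked to write."""
    if host_bytes <= 0:
        raise ValueError("host_bytes must be positive")
    return nand_bytes / host_bytes


MB = 1024 * 1024
PAGE = 4 * 1024  # assumed page size for this example

# The perfect case: the host wanted 1MB written and the controller wrote 1MB.
ideal = write_amplification(1 * MB, 1 * MB)

# Hypothetical bad case: a one-page host write plus three pages relocated
# by garbage collection means four pages hit the NAND.
amplified = write_amplification(1 * PAGE, 4 * PAGE)
```

Here `ideal` works out to 1.0 and `amplified` to 4.0, meaning the NAND absorbed four times the writes the host requested.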

295 Comments

shabby - Monday, August 31, 2009 - link

The 80gig g2 is $399 now!

gfody - Tuesday, September 1, 2009 - link

The gen2 80gb is at $499 as of 12:00AM PST

maxfisher05 - Monday, August 31, 2009 - link

As of right now (8/31) newegg has the 160GB Intel G2 listed at $899!!!!!!!!!!!!!!!!!!! To quote Anand "lolqtfbbq!"

siliq - Monday, August 31, 2009 - link

Great article! Love reading this. Thanks Anand.

We gather from this article that all the pain-in-@$$ about SSDs come from the inconsistency between the size of the read-write page and the erase block. When SSDs are reading/writing a page it's 4K, but the minimum size of erasing operation is 512K. Just wondering is there any possibility that manufacturers can come up with NAND chips that allows controllers to directly erase a 4K page without all the extra hassles. What are the obstacles that prevent manufacturers from achieving this today?

bji - Tuesday, September 1, 2009 - link

It is my understanding that flash memory has already been pushed to its limit of efficiency in terms of silicon usage in order to allow for the lowest possible per-GB price. It is much cheaper to implement sophisticated controllers that hide the erase penalty as much as possible than it is to "fix" the issue in the flash memory itself.

It is absolutely possible to make flash memory that has the characteristics you describe - 4K erase blocks - but it would require a very large number of extra gates in silicon and this would push the cost up per GB quite a bit. Just pulling numbers out of the air, let's say it would cost 2x as much per GB for flash with 4K erase blocks. People already complain about the high cost per GB of SSD drives (well I don't - because I don't steal software/music/movies so I have trouble filling even a 60 GB drive), I can't imagine that it would make market sense for any company to release an SSD based on flash memory that costs $7 per GB, especially when incredible performance can be achieved using standard flash, which is already highly optimized for price/performance/size as much as possible, as long as a sufficiently smart controller is used.

Also - you should read up on NOR flash. This is a different technology that already exists, that has small erase blocks and is probably just what you're asking for. However, it uses 66% more silicon area than equivalent NAND flash (the flash used in SSD drives), so it is at least 66% more expensive. And no one uses it in SSDs (or other types of flash drives AFAIK) for this reason.

bji - Tuesday, September 1, 2009 - link

Oh I just noticed in the Wikipedia article about NOR flash, that typical NOR flash erase block sizes are also 64, 128, or 256 KB. So the eraseblocks are just as problematic there as in NAND flash. However, NOR flash is more easily bit-addressable so would avoid some of the other penalties associated with NAND that the smart controllers have to work around.

So to make a NAND or NOR flash with 4K eraseblocks would probably make them both 2X - 4X more expensive. No one is going to do that - it would push the price back out to where SSDs were not viable, just as they were a few years ago.

siliq - Tuesday, September 1, 2009 - link

Amazing answers! Thank you very much

morrie - Monday, August 31, 2009 - link

My laptop is limited to 4 GB swap. While that's enough for 99% of Linux users, I don't shut down my laptop, it's used as a desktop with dozens of apps running and hundreds of browser tabs. Therefore, after a few months of uptime, memory usage climbs above 4 GB. I have two hard drives in the laptop, and set up a software raid0 1GB swap partition, but I went with software raid1 for the other swap partition. So once the ram is used up for swap, the laptop slows noticeably, but after the raid0 swap partition fills up, the raid1 partition really slows it down. Once that fills up, it hits swap files (non raid) which slow it down more. But thanks to the kernel and the way swappiness works, once about 4 GB of Ram plus about 3 GB of physical swap is used, it really slows. I can gain a bit of speed by adding some physical swap files to increase the ratio of physical swap to ram swap (thus changing swappiness through other means), but this only works for another 1 GB of ram.

No lectures or advice please, on how I'm using up memory or about how 4GB is more than sufficient, my uptimes are in the hundreds of days on this laptop and thanks to ADD/limited attention span, intermittent printer availability for printing out saved browser tabs and other reasons (old habits dying hard being one), my memory usage is what it is.

So, the big question is, since the laptop has an eSATA port, can I install one of these ssd drives in an external SATA tray, connected via eSATA to the laptop and move physical swap partitions to the ssd? I believe that swap on the ssd would be a lot faster even on the eSATA wire, than swap on the drives in the laptop (they're 7200 rpm drives btw). I'm aware that using the ssd for swap would shorten its life, but if it lasts a year till faster laptops with more memory are available (and I get used to virtual machines and saving state so I can limit open browser windows), I'll be happy.

Buying two of the drives and using them raided in the laptop is too costly right now, when prices drop that'll be a solution for this current laptop.

External SSD over eSATA for Linux swap on a laptop? Faster than my current setup?

hpr - Monday, August 31, 2009 - link

Sounds like you have some very small memory leak going on there.

Have you tried that Firefox plugin that enables you to have your tabs but it doesn't really have a tab open in memory.

TooManyTabs

https://addons.mozilla.org/en-US/firefox/addon/942...

Have fun filling up thousands of tabs and having low memory usage.

gstrickler - Monday, August 31, 2009 - link

You should be able to use an SSD in an eSATA case, and yes, it should be faster than using your internal 7200 RPM drives. You probably want to use an Intel SSD for that (see page 19 of the article and note that the Intel drives don't drop off dramatically with usage).

If you don't need the storage of your two internal 7200 RPM drives (or if you can get a sufficiently large SSD), you might be better off replacing one of them with an SSD and reconsider how you're allocating all your storage.

As for printer availability, seems to me it would make more sense to use a CUPS based setup to create PDFs rather than having jobs sit in a print queue indefinitely. Then, print the PDFs at your convenience when you have a printer available. I don't know how your printing setup currently works, but it sounds like doing so would reduce your swap space usage.