The Apple A15 SoC Performance Review: Faster & More Efficient

by Andrei Frumusanu on October 4, 2021 9:30 AM EST

Posted in: Mobile, Apple, Smartphones, Apple A15

A few weeks ago, we saw Apple announce their newest iPhone 13 series devices, a set of phones powered by the newest Apple A15 SoC. Today, in advance of the full device review which we’ll cover in the near future, we’re taking a closer look at the new generation chipset, looking at what exactly Apple has changed in the new silicon, and whether it lives up to the hype.

This year’s announcement of the A15 was a bit odder on Apple’s PR side of things, notably because the company generally avoided making any generational comparisons between the new design and Apple’s own A14. Particularly notable was the fact that Apple preferred to describe the SoC in the context of the competition; while that’s not unusual on the Mac side of things, it stood out more than usual for this year’s iPhone announcement.

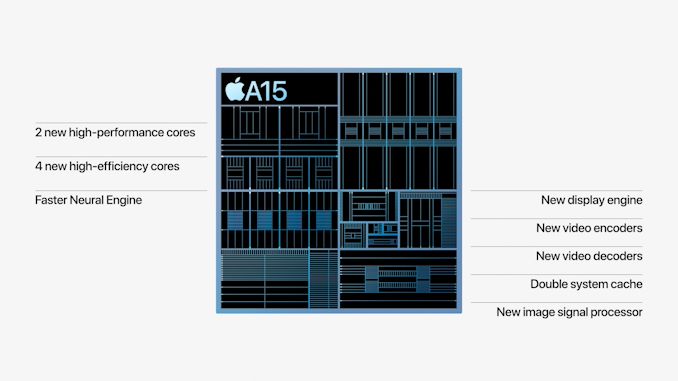

The few concrete factoids about the A15 were that Apple is using new designs for their CPUs, a faster Neural engine, a new 4- or 5-core GPU depending on the iPhone variant, and a whole new display pipeline and media hardware block for video encoding and decoding, alongside new ISP improvements for camera quality advancements.

On the CPU side of things, the improvement claims were very vague: Apple quoted the chip as being 50% faster than the competition. The GPU performance metrics were presented in the same manner, with the 4-core GPU A15 described as +30% faster than the competition and the 5-core variant as +50% faster. We’ve put the SoC through its initial paces, and in today’s article we’ll be focusing on the exact performance and efficiency metrics of the new chip.

Frequency Boosts; 3.24GHz Performance & 2.0GHz Efficiency Cores

Starting off with the CPU side of things, the new A15 is said to feature two new CPU microarchitectures, both for the performance cores as well as the efficiency cores. The first few reports about the performance of the new cores were focused around the frequencies, which we can now confirm in our measurements:

Maximum Frequency vs Loaded Threads (Per-Core Maximum MHz)

| Apple A15 | 1 thread | 2 threads | 3 threads | 4 threads |
|---|---|---|---|---|
| Performance 1 | 3240 | 3180 | | |
| Performance 2 | | 3180 | | |
| Efficiency 1 | 2016 | 2016 | 2016 | 2016 |
| Efficiency 2 | | 2016 | 2016 | 2016 |
| Efficiency 3 | | | 2016 | 2016 |
| Efficiency 4 | | | | 2016 |

Maximum Frequency vs Loaded Threads (Per-Core Maximum MHz)

| Apple A14 | 1 thread | 2 threads | 3 threads | 4 threads |
|---|---|---|---|---|
| Performance 1 | 2998 | 2890 | | |
| Performance 2 | | 2890 | | |
| Efficiency 1 | 1823 | 1823 | 1823 | 1823 |
| Efficiency 2 | | 1823 | 1823 | 1823 |
| Efficiency 3 | | | 1823 | 1823 |
| Efficiency 4 | | | | 1823 |

Compared to the A14, the new A15 increases the peak single-core frequency of the two-core performance cluster by 8%, now reaching up to 3240MHz compared to the 2998MHz of the previous generation. When both performance cores are active, their operating frequency actually goes up by 10%, with both now running at an aggressive 3180MHz compared to the previous generation’s 2890MHz.

In general, Apple’s frequency increases here are quite aggressive given that it’s quite hard to push this performance aspect of a design, especially when we’re not expecting major performance gains from the new process node. The A15 should be made on an N5P node variant from TSMC, although neither company really discloses the exact details of the design. TSMC claims a +5% frequency increase over N5, so for Apple to have gone beyond this would indicate an increase in power consumption, something to keep in mind when we dive deeper into the power characteristics of the CPUs.

The E-cores of the A15 are now able to clock up to 2016MHz, a roughly 10.6% increase over the A14’s cores. The frequency here is independent of the performance cores: the number of active threads in one cluster doesn’t affect the clocks of the other cluster, or vice versa. Apple has made some more interesting changes to the little cores this generation, which we’ll come to in a bit.
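The uplift figures discussed here can be reproduced directly from the two frequency tables. The following is a minimal Python sketch, not part of the original measurements: the helper name `uplift_pct` is made up for illustration, and the 5% figure is simply TSMC's claimed N5P gain as quoted above.

```python
# Peak clocks in MHz, taken from the A15 and A14 frequency tables.
A15 = {"P-core, 1 thread": 3240, "P-core, 2 threads": 3180, "E-core": 2016}
A14 = {"P-core, 1 thread": 2998, "P-core, 2 threads": 2890, "E-core": 1823}

# TSMC's claimed frequency gain of N5P over N5, in percent.
N5P_CLAIMED_GAIN_PCT = 5.0


def uplift_pct(new_mhz, old_mhz):
    """Generational frequency increase in percent (hypothetical helper)."""
    return (new_mhz / old_mhz - 1.0) * 100.0


uplifts = {name: uplift_pct(A15[name], A14[name]) for name in A15}

for name, pct in uplifts.items():
    beyond_node = pct - N5P_CLAIMED_GAIN_PCT
    print(f"{name}: +{pct:.1f}% "
          f"({beyond_node:+.1f} points relative to the claimed N5P gain)")
```

Every entry lands above the claimed process gain, which is the basis for expecting some of the extra frequency to be paid for in power.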

Giant Caches: Performance CPU L2 to 12MB, SLC to Massive 32MB

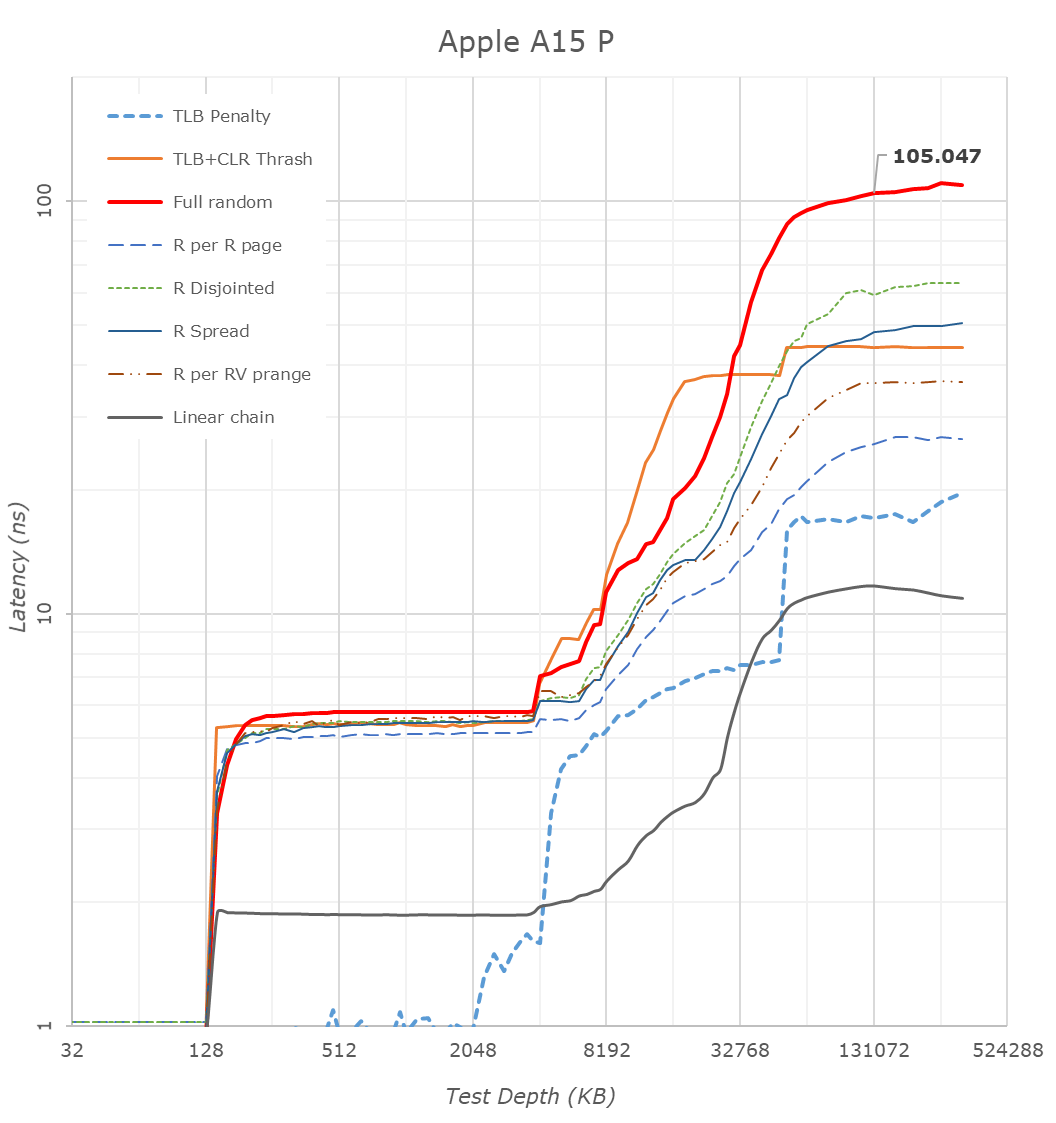

One more straightforward technical detail Apple revealed during its launch was that the A15 now features double the system cache compared to the A14. Two years ago we detailed the A13’s new SLC, which had grown from 8MB in the A12 to 16MB, a size that was kept constant in the A14 generation. Apple claiming they’ve doubled this would consequently mean it’s now 32MB in the A15.

Looking at our latency tests on the new A15, we can indeed confirm that the SLC has doubled to 32MB, further pushing out the memory depth at which accesses reach DRAM. Apple’s SLC is likely to be a key factor in the power efficiency of the chip, being able to keep memory accesses on the same silicon rather than going out to slower and less power-efficient DRAM. We’ve seen these types of last-level caches being employed by more SoC vendors, but at 32MB, the new A15 dwarfs the competition’s implementations, such as the 3MB SLC on the Snapdragon 888 or the estimated 6-8MB SLC on the Exynos 2100.

What Apple didn’t divulge are the changes to the L2 cache of the performance cores, which has now grown by 50% from 8MB to 12MB. This is actually the same L2 size as on the Apple M1, only this time around it’s serving two performance cores rather than four. The access latency appears to have risen from 16 cycles on the A14 to 18 cycles on the A15.
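Because the clock also rose between generations, the cycle counts alone don't show how the L2 latency changed in absolute time. The sketch below converts them to nanoseconds. It assumes (this is not stated in the measurements) that the performance core runs at its peak single-thread frequency during the latency test; `cycles_to_ns` is an illustrative helper.

```python
def cycles_to_ns(cycles, freq_mhz):
    """Convert a latency in core cycles to nanoseconds at a given clock."""
    return cycles / freq_mhz * 1000.0


# Assumption: peak single-thread P-core clocks from the frequency tables.
a14_l2_ns = cycles_to_ns(16, 2998)
a15_l2_ns = cycles_to_ns(18, 3240)

change_pct = (a15_l2_ns / a14_l2_ns - 1.0) * 100.0

print(f"A14 L2: {a14_l2_ns:.2f} ns, A15 L2: {a15_l2_ns:.2f} ns "
      f"({change_pct:+.1f}%)")
```

Under that assumption the higher clock absorbs part of the two extra cycles, so the larger L2 costs only a few percent more in wall-clock latency.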

A 12MB L2 is again humongous, nearly double the combined L3+L2 (4+1+3x0.5 = 6.5MB) of other designs such as the Snapdragon 888. It very much appears Apple has invested a lot of SRAM into this year’s SoC generation.
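To put the SRAM investment into numbers, the following Python sketch tallies the on-chip cache capacity available to the performance cluster (L2 plus SLC) for both generations, using only the sizes given in this section. The dictionary layout and names are illustrative.

```python
# Cache sizes in MB, as described in this section.
caches_mb = {
    "A14": {"P-core L2": 8, "SLC": 16},
    "A15": {"P-core L2": 12, "SLC": 32},
}

# Total capacity reachable by the performance cluster before DRAM.
totals_mb = {soc: sum(levels.values()) for soc, levels in caches_mb.items()}

growth_pct = (totals_mb["A15"] / totals_mb["A14"] - 1.0) * 100.0

for soc, total in totals_mb.items():
    print(f"{soc}: {total} MB of L2 + SLC")
print(f"Generational growth: +{growth_pct:.0f}%")
```

This simple sum treats L2 and SLC as if their contents never overlapped, which is an assumption; the effective capacity depends on the inclusion policy, which Apple doesn't document.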

The efficiency cores this year don’t seem to have changed their cache sizes, remaining at 64KB L1Ds and a 4MB shared L2. However, we see Apple has increased the L2 TLB to 2048 entries, now covering up to 32MB, likely to facilitate better SLC access latencies. Interestingly, Apple this year allows the efficiency cores to have faster DRAM access, with latencies now at around 130ns versus the 215ns or more on the A14, again something to keep in mind in the next performance section of the article.
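The 32MB coverage figure follows from the TLB entry count multiplied by the page size. The sketch below works that out and also expresses the E-core DRAM latencies in core cycles. The 16KB page size is an assumption (it is the page size used by 64-bit iOS, but it isn't stated in this section), and the cycle counts assume the E-cores run at their peak clocks.

```python
# Assumption: 16KB pages, as used on 64-bit iOS.
PAGE_SIZE_KB = 16
L2_TLB_ENTRIES = 2048

# Memory reachable without an L2 TLB miss.
tlb_coverage_mb = L2_TLB_ENTRIES * PAGE_SIZE_KB / 1024


def ns_to_cycles(latency_ns, freq_mhz):
    """Convert a latency in nanoseconds to core cycles at a given clock."""
    return latency_ns * freq_mhz / 1000.0


# E-core DRAM latencies from this section, at peak E-core clocks (assumed).
a15_dram_cycles = ns_to_cycles(130, 2016)
a14_dram_cycles = ns_to_cycles(215, 1823)  # 215ns is a lower bound

print(f"L2 TLB coverage: {tlb_coverage_mb:.0f} MB")
print(f"E-core DRAM latency: A15 ~{a15_dram_cycles:.0f} cycles, "
      f"A14 ~{a14_dram_cycles:.0f} cycles or more")
```

The TLB coverage matching the 32MB SLC size is consistent with the idea that the change is aimed at SLC access latency.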

CPU Microarchitecture Changes: A Slow(er) Year?

This year’s CPU microarchitectures were a bit of a wildcard. Earlier this year, Arm announced the new Armv9 ISA, predominantly defined by the new SVE2 SIMD instruction set, as well as the company’s new Cortex series CPU IP which employs the new architecture. Back in 2013, Apple was notorious for being the first on the market with an Armv8 CPU, the first 64-bit capable mobile design. Given that context, I had generally expected this year’s generation to introduce v9 as well; however, that doesn’t seem to be the case for the A15.

Microarchitecturally, the new performance cores on the A15 don’t seem to differ much from last year’s designs. I haven’t invested the time yet to look at every nook and cranny of the design, but at least the back-end of the processor is identical in throughput and latencies compared to the A14 performance cores.

The efficiency cores have had more changes. Alongside some of the memory subsystem TLB changes, the new E-core gains an extra integer ALU, bringing the total to 4, up from the previous 3. For some time now the core could no longer be called “little” by any means, and it seems to have grown even more this year, again something we’ll showcase in the performance section.

The reason for Apple’s more moderate microarchitectural changes this year might be a perfect storm of a few factors: Apple notably lost the lead architect of their big performance cores, as well as parts of the design teams, to Nuvia back in 2019 (Nuvia was in turn acquired by Qualcomm earlier this year). The shift towards Armv9 might also imply some more work done on the design, and the pandemic situation might also have contributed to some non-ideal execution. We’ll have to examine next year’s A16 to really determine whether Apple’s design cadence has slowed down, whether this was merely a slippage, or whether it is simply a lull before a much larger change in the next microarchitecture.

Of course, the tone here paints a picture of rather conservative improvements to the A15’s CPUs, which, when looking at performance and efficiency, are anything but that.

204 Comments


name99 - Monday, October 4, 2021 - link

You see it for GPU compute, e.g. https://browser.geekbench.com/v5/compute/compare/3...

Unclear why you get even BETTER than 25% in that case (these were not cherry picked results)

Are there more differences than Apple has told us (like the Pro, ie 6GB, models, are using two DIMMs and have twice the bandwidth?)

As for whether game results or Compute results better reflect the SoC, well...

Obviously Apple is using all this GPU/NPU stuff in some places like computational photography, where people like it. The Siri image recognition stuff is definitely getting more valuable (I tried plant recognition this week and was pleasantly surprised, though the UI remains clumsy and sub-optimal). Likewise translation advances by fits and starts, though again hampered by lousy UI; likewise we'll see how well the Live Text stuff works (so far the one time I tried it, I was not impressed, but that was a very complex image so maybe I was hoping for too much).

All these smarts are definitely valuable and, for many users, probably more valuable than a CPU 50% faster.

On the other hand so many NPU-hooked up functions still seem so freaking dumb! Everyone hates the keyboard error correction stuff, things like choosing the appropriate contact when you have two with the same name seem to have zero intelligence behind them, I've even heard Maps Siri call a succession of streets of the form S Oak Ave "Sangrida Oak Ave". (N, W, E were correct. First time I had no idea what I heard so I listened carefully from that point on. All S were pronounced as something like Sangrida!)

It's unclear (to me anyway) where this NPU-adjacent dumbness comes from. Poorly trained models? Not enough NPU on my hardware, so I should go out and get new hardware? Different Apple groups (especially teams like Contacts and Reminders) using the NPU APIs incorrectly because they have no in-team AI experience and are just guessing at what they are doing?

cha0z_ - Tuesday, October 5, 2021 - link

Check the results again: it does provide a decent uplift in peak performance, but Apple decided to keep it at lower power figures in sustained performance, and while doing so they achieve slightly higher performance vs the 4-core GPU. Instead of faster performance, they decided to use the 5th GPU core for lower power draw in thermally limited scenarios (sustained performance).

name99 - Monday, October 4, 2021 - link

It's worth comparing the SPEC2017 results with https://www.anandtech.com/show/16252/mac-mini-appl... which gives the M1 results; the simple summary comparison hides a lot.

In particular we can see that most of the int benchmarks are much the same; in other words not much apparent change in IPC, and now A15 matching M1's frequency. We do see a few minor M1 wins because it has a wider path to DRAM.

The interesting cases are the massive jumps -- omnetpp and xalanc. What's with those?

I'm not wild about the methodology in this paper:

https://dl.acm.org/doi/pdf/10.1145/3446200

but it does have a few interesting plots. Of particular relevance is Fig 4, which (look at the red triangles) gives us the working set size of the SPEC2017 programs.

Omnetpp is characterized as 64MB, but with enough locality (or the SoC doing a good job of detecting streaming data and not caching it) the difference between the previous cache space available and the current cache space may explain most of the boost.

The other big change is xalanc, and we see that its working set is right at 8MB. You could try to make an argument about caches, but I don't think that's right. Instead I'd urge you to compare the A15 result, the A14 result (which I am guessing, Andrei can confirm, was measured this run, using XCode 13), and the M1 result.

The value for A14 xalanc (and the rather less interesting x264) are notably higher, like ~10..15% higher. This suggests a compiler (or, harder to imagine, an OS) change -- most likely something like one apparently small tweak in a loop that now allows a scalar loop to be vectorized, or (less likely, but not impossible) that restructures the direction of memory traversal.

So I'd conclude that, in a way, we are ultimately back to where we were after the announcement and the first GB5 values!

- performance essentially tracking the frequency improvement

- for very particular pieces of code, which just happen to be larger than the previous L2+SLC could capture, but which now fit into L2+SLC, a better than expected boost (only really relevant to omnetpp)

- for other very particular pieces of code which just happen to match the pattern, a nice boost from latest XCode (but not limited to just this CPU/SoC)

But no evidence of anything but the most minor IPC-relevant modifications to the P core. Energy mods, of course, always desirable, and probably necessary to make that frequency boost useful rather than a gimmick, but not IPC boosts.

It would be interesting if those who track these things were to report anything significant in code gen by the newest XCode. Last time I looked at this stuff (not quite a year ago)

- complex support was still in progress, with lousy use of the ARMv8 complex instructions (Some use, but far from optimal). I'd like to hope that's all fixed, but it seems unlikely to be relevant to xalanc.

- there was ongoing talk of compiler level support for matrices (not just AMX, but support for various TPUs, and for various matrix instructions being added across ISA's). Again, interesting and hopefully having made progress, but not relevant here.

- the usual never-ending "better support, clean up and restructure nested loops" and "better vectorized code", and those two seem the most likely candidates?

Andrei Frumusanu - Tuesday, October 5, 2021 - link

Please avoid using the M1 numbers here, those were on macOS and on a different compiler version.

Xalanc is memory allocator sensitive and that's the major contributor to the M1 and A14 differences, as iOS is running some sort of aggregator allocator similar to jemalloc.

The x264 differences are due to Xcode13 using a new LLVM 12 toolchain, Android NDKr23 had the same improvements, see : https://community.arm.com/developer/tools-software...

name99 - Tuesday, October 5, 2021 - link

Thanks for the memory allocator detail!

But basically the point remains -- everything converges on essentially the same IPC (modulo larger L2 and SLC); just substantially improved energy.

Reason I went down this path was the *apparent* substantial jump between the M1 SPEC2017 numbers and the A15 numbers, which I wanted to resolve.

name99 - Monday, October 4, 2021 - link

"This year’s CPU microarchitectures were a bit of a wildcard. Earlier this year, Arm had announced the new Armv9 ISA, predominantly defined by the new SVE2 SIMD instruction set, as well as the company’s new Cortex series CPU IP which employs the new architecture. Back in 2013, Apple was notorious for being the first on the market with an Armv8 CPU, the first 64-bit capable mobile design. Given that context, I had generally expected this year’s generation to introduce v9 as well, but however that doesn’t seem to be the case for the A15."

One thing we all forgot, or overlooked, was the announcement earlier this year of SME (Scalable Matrix Extension) which along with the other stuff it does, adds a wrinkle to SVE via the addition of SVE/2 Streaming Mode.

Is it possible that Apple has decided to (for the second time) delay implementing because these changes (addition of Streaming Mode and SME) change things sufficiently that you might as well design for them from the start?

There's obviously value in learning-by-doing, even if you can't ship the final product you want.

But there's also obvious value in trying to avoid fragmenting the ISA base as much as possible.

Is it possible that Apple have concluded (having fixed the immediate problems with v8 aggressively every year) that going forward a better model is more something like an ISA update every 4 or so years (and so fairly clearly differentiated classes of compiler target) rather than annual updates? Starting with delivering an SVE/SME that's fully featured (at least as of mid 2021) rather than two successive versions of SVE, the first without SME and SVE streaming?

ARM seems to have decided to hell with it, they're going to accept this ISA incompatibility and ship V1 with SVE, and N2 with SVE2-lite (ie no SME/streaming). Probably an acceptable choice given those are data center designs.

In Apple's world, ideally finalization of code within the App Store down to the precise CPU of each customer would solve this issue. But Apple may have concluded some combination of the legal fights around the App Store and perhaps real-world difficulty of debugging by devs under these circumstances where they can never be sure quite what binary each user has installed, have rendered this infeasible?

(Honestly I'd hope that the legal issues force things the other way, including forcing the App Store to provide more developer value by doing a much better job of constant app improvement -- both per-CPU finalization, and constant recompilation of older code with newer compilers, along with much better support for debugging help. Well, we'll see. Maybe, with the current rickety state of compiler trustworthiness, that vision is still too much to hope for?)

OreoCookie - Tuesday, October 5, 2021 - link

I think you are spot-on: I don’t think there would have been a similarly large payoff as compared with going from 32 bit to 64 bit. Given all the external parameters, pandemic, staff leaving, going with a tock cycle is a prudent choice. Especially if Apple not only undersold the improvements, but could have genuinely made more of a deal about them focussing on efficiency with this release. Given how much faster they are than their competition, I think focussing on efficiency is a good thing.

Further, *if* Apple had decided on adopting a new instruction set, I would have expected to see traces of that in the toolchain, e.g. in LLVM.

name99 - Tuesday, October 5, 2021 - link

Yeah, the one thing one sees in the toolchain (eg Andrei's link above) https://community.arm.com/developer/tools-software... is just how immature SVE compiling still is.

I don't want to complain about that -- compilers are HARD! But releasing HW on the hope that the compiler will get there is a tough sell.

On the one hand, yes, it is harder for compiler devs (and everyone else, like those who write specialized optimized assembly) to make progress without HW.

On the other hand, you only get one chance to make a first impression, and if you blow it with a fragmented ISA, a poor implementation, or unimpressive performance (*cough* AVX512 *cough*) it's hard to recover from that.

I guess Apple see little downside in having ARM bear the costs of being the pioneer this time round.

OreoCookie - Thursday, October 7, 2021 - link

Yes, the maturity of the toolchain is another major factor: part of Apple’s secret sauce is the tight integration of software and hardware. Its SoCs are designed to accelerate e.g. JavaScript and reference counting (https://twitter.com/Catfish_Man/status/13262384342...).

Another thing is that at least some of the new capabilities that SVE brings are probably, at least in part, covered by other specialized hardware on Apple’s SoCs.

PS AVX512 pipelines are also massively power hungry, so that’s another trade-off to consider.

williwgtr - Tuesday, October 5, 2021 - link

It may be faster, but what good is that if you can't play for 20 minutes without the FPS dropping? The CPU throttling is aggressive to prevent it from getting hot.