The NVIDIA GeForce RTX 2060 6GB Founders Edition Review: Not Quite Mainstream

by Nate Oh on January 7, 2019 9:00 AM EST

In the closing months of 2018, NVIDIA finally released the long-awaited successor to the Pascal-based GeForce GTX 10 series: the GeForce RTX 20 series of video cards. Built on their new Turing architecture, these GPUs were the biggest update to NVIDIA's GPU architecture in at least half a decade, leaving almost no part of NVIDIA's architecture untouched.

So far we’ve looked at the GeForce RTX 2080 Ti, RTX 2080, and RTX 2070 – and along with the highlights of Turing, we’ve seen that the GeForce RTX 20 series is designed on a hardware and software level to enable realtime raytracing and other new specialized features for games. While the RTX 2070 is traditionally the value-oriented enthusiast offering, NVIDIA's higher price tags this time around meant that even this part was $500 and not especially value-oriented. Instead, it would seem that the role of the enthusiast value offering is going to fall to the next member in line of the GeForce RTX 20 family. And that part is coming next week.

Launching next Tuesday, January 15th is the 4th member of the GeForce RTX family: the GeForce RTX 2060 (6GB). Based on a cut-down version of the same TU106 GPU that's in the RTX 2070, this new part shaves off some of RTX 2070's performance, but also a good deal of its price tag in the process. And for this launch, like the other RTX cards last year, NVIDIA is taking part by releasing their own GeForce RTX 2060 Founders Edition card, which we are taking a look at today.

NVIDIA GeForce Specification Comparison

| | RTX 2060 Founders Edition | GTX 1060 6GB (GDDR5) | GTX 1070 (GDDR5) | RTX 2070 |
|---|---|---|---|---|
| CUDA Cores | 1920 | 1280 | 1920 | 2304 |
| ROPs | 48 | 48 | 64 | 64 |
| Core Clock | 1365MHz | 1506MHz | 1506MHz | 1410MHz |
| Boost Clock | 1680MHz | 1709MHz | 1683MHz | 1620MHz (FE: 1710MHz) |
| Memory Clock | 14Gbps GDDR6 | 8Gbps GDDR5 | 8Gbps GDDR5 | 14Gbps GDDR6 |
| Memory Bus Width | 192-bit | 192-bit | 256-bit | 256-bit |
| VRAM | 6GB | 6GB | 8GB | 8GB |
| Single Precision Perf. | 6.5 TFLOPS | 4.4 TFLOPS | 6.5 TFLOPS | 7.5 TFLOPS (FE: 7.9 TFLOPS) |
| "RTX-OPS" | 37T | N/A | N/A | 45T |
| SLI Support | No | No | Yes | No |
| TDP | 160W | 120W | 150W | 175W (FE: 185W) |
| GPU | TU106 | GP106 | GP104 | TU106 |
| Transistor Count | 10.8B | 4.4B | 7.2B | 10.8B |
| Architecture | Turing | Pascal | Pascal | Turing |
| Manufacturing Process | TSMC 12nm "FFN" | TSMC 16nm | TSMC 16nm | TSMC 12nm "FFN" |
| Launch Date | 1/15/2019 | 7/19/2016 | 6/10/2016 | 10/17/2018 |
| Launch Price | $349 | MSRP: $249, FE: $299 | MSRP: $379, FE: $449 | MSRP: $499, FE: $599 |

Like its older siblings, the GeForce RTX 2060 (6GB) comes in at a higher price-point relative to previous generations, and at $349 the cost is quite unlike the GeForce GTX 1060 6GB’s $299 Founders Edition and $249 MSRP split, let alone the GeForce GTX 960’s $199. At the same time, it still features Turing RT cores and tensor cores, bringing a new entry point for those interested in utilizing GeForce RTX platform features such as realtime raytracing.

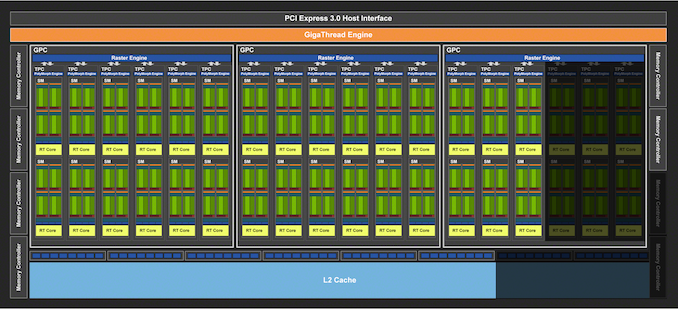

Diving into the specs and numbers, the GeForce RTX 2060 sports 1920 CUDA cores, meaning we’re looking at a 30 SM configuration, versus RTX 2070’s 36 SMs. As the core architecture of Turing is designed to scale with the number of SMs, this means that all of the core compute features are being scaled down similarly, so the 17% drop in SMs means a 17% drop in the RT Core count, a 17% drop in the tensor core count, a 17% drop in the texture unit count, a 17% drop in L0/L1 caches, etc.
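As a quick check on that SM arithmetic, here is a minimal sketch. It assumes 64 FP32 CUDA cores per Turing SM, a figure taken from NVIDIA's Turing architecture material rather than from the table above, and the function name is purely illustrative.

```python
# Assumption: each Turing SM contains 64 FP32 CUDA cores.
CORES_PER_SM = 64


def sm_count(cuda_cores: int) -> int:
    """Return the number of SMs implied by a CUDA core count."""
    sms, remainder = divmod(cuda_cores, CORES_PER_SM)
    if remainder:
        raise ValueError("core count is not a whole number of SMs")
    return sms


rtx_2060_sms = sm_count(1920)  # CUDA core counts from the spec table
rtx_2070_sms = sm_count(2304)
sm_reduction = 1 - rtx_2060_sms / rtx_2070_sms

print(f"RTX 2060: {rtx_2060_sms} SMs, RTX 2070: {rtx_2070_sms} SMs")
print(f"SM reduction: {sm_reduction:.1%}")
```

Since RT cores, tensor cores, and texture units are all per-SM resources, the same ratio should carry over to each of them.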

Unsurprisingly, clockspeeds are going to be very close to NVIDIA’s other TU106 card, RTX 2070. The base clockspeed is down a bit to 1365MHz, but the boost clock is up a bit to 1680MHz. So on the whole, RTX 2060 is poised to deliver around 87% of the RTX 2070’s compute/RT/texture performance, which is an uncharacteristically small gap between an xx70 card and an xx60 card. In other words, the RTX 2060 is in a good position to punch above its weight in compute/shading performance.
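The throughput figures can be roughly reproduced from core counts and boost clocks. The sketch below assumes the usual peak-rate convention of one fused multiply-add (2 FLOPs) per CUDA core per clock at the reference boost clock; the helper name is hypothetical, and real-world clocks will vary with GPU Boost.

```python
def fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Peak FP32 TFLOPS, assuming one FMA (2 FLOPs) per core per clock."""
    return cuda_cores * 2 * boost_mhz * 1e6 / 1e12


# Core counts and reference boost clocks from the spec table
rtx_2060 = fp32_tflops(1920, 1680)
rtx_2070 = fp32_tflops(2304, 1620)
ratio = rtx_2060 / rtx_2070

print(f"RTX 2060: {rtx_2060:.2f} TFLOPS")
print(f"RTX 2070: {rtx_2070:.2f} TFLOPS")
print(f"RTX 2060 / RTX 2070: {ratio:.1%}")
```

With unrounded inputs the ratio lands a little over 86%. Dividing the table's rounded 6.5 and 7.5 TFLOPS figures gives just under 87%.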

However, TU106 has taken a bigger trim on the backend, and in workloads that aren’t pure compute, the drop will be a bit harder. The card is shipping with just 6GB of GDDR6 VRAM, as opposed to 8GB on its bigger brother. The result of this is that NVIDIA is not populating 2 of TU106’s 8 memory controllers, resulting in a 192-bit memory bus and meaning that with the use of 14Gbps GDDR6, RTX 2060 only offers 75% of the memory bandwidth of the RTX 2070. Or to put this in numbers, the RTX 2060 will offer 336GB/sec of bandwidth to the RTX 2070’s 448GB/sec.
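The bandwidth numbers follow from bus width and per-pin data rate. This is a minimal sketch: the 32-bit controller width is inferred from 8 controllers making up TU106's 256-bit bus, and the function and constant names are illustrative only.

```python
# Inferred: 256-bit bus / 8 memory controllers = 32 bits per controller.
CONTROLLER_WIDTH_BITS = 32


def memory_bandwidth_gbs(controllers: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/sec: bus width (bits) x per-pin rate / 8."""
    bus_width_bits = controllers * CONTROLLER_WIDTH_BITS
    return bus_width_bits * data_rate_gbps / 8


rtx_2060_bw = memory_bandwidth_gbs(controllers=6, data_rate_gbps=14)
rtx_2070_bw = memory_bandwidth_gbs(controllers=8, data_rate_gbps=14)
bw_ratio = rtx_2060_bw / rtx_2070_bw

print(f"RTX 2060: {rtx_2060_bw:.0f} GB/sec")
print(f"RTX 2070: {rtx_2070_bw:.0f} GB/sec")
print(f"Ratio: {bw_ratio:.0%}")
```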

And since the memory controllers, ROPs, and L2 cache are all tied together very closely in NVIDIA’s architecture, this means that ROP throughput and the amount of L2 cache are also being shaved by 25%. So for graphics workloads the practical performance drop is going to be greater than the 13% mark for compute throughput, but also generally less than the 25% mark for ROP/memory throughput.

Speaking of video memory, NVIDIA has called this card the RTX 2060, but early indications are that there will be other RTX 2060 configurations with less VRAM and possibly fewer CUDA cores and other hardware resources. Hence, it seems forward-looking to refer to the product in this article as the RTX 2060 (6GB). As you might recall, the GTX 1060 6GB was launched as the ‘GTX 1060’ and appeared as such in our launch review, up until the release a month later of the ‘GTX 1060 3GB’, a name that gives no indication of its cut-down GPU configuration, a difference that has nothing to do with frame buffer size. Combined with ongoing GTX 1060 naming shenanigans, as well as those of the GTX 1050 variants (and AMD’s own Polaris naming shenanigans), it seems prudent to make this clarification now in the interest of future accuracy and consumer awareness.

NVIDIA GTX 1060 Variants Specification Comparison

| | GTX 1060 6GB | GTX 1060 6GB (9 Gbps) | GTX 1060 6GB (GDDR5X) | GTX 1060 5GB (Regional) | GTX 1060 3GB |
|---|---|---|---|---|---|
| CUDA Cores | 1280 | 1280 | 1280 | 1280 | 1152 |
| Texture Units | 80 | 80 | 80 | 80 | 72 |
| ROPs | 48 | 48 | 48 | 40 | 48 |
| Core Clock | 1506MHz | 1506MHz | 1506MHz | 1506MHz | 1506MHz |
| Boost Clock | 1708MHz | 1708MHz | 1708MHz | 1708MHz | 1708MHz |
| Memory Clock | 8Gbps GDDR5 | 9Gbps GDDR5 | 8Gbps GDDR5X | 8Gbps GDDR5 | 8Gbps GDDR5 |
| Memory Bus Width | 192-bit | 192-bit | 192-bit | 160-bit | 192-bit |
| VRAM | 6GB | 6GB | 6GB | 5GB | 3GB |
| TDP | 120W | 120W | 120W | 120W | 120W |
| GPU | GP106 | GP106 | GP104* | GP106 | GP106 |
| Launch Date | 7/19/2016 | Q2 2017 | Q3 2018 | Q3 2018 | 8/18/2016 |

Moving on, NVIDIA is rating the RTX 2060 for a TDP of 160W. This is down from the RTX 2070, but only slightly, as those cards are rated for 175W. Cut-down GPUs have limited options for reducing their power consumption, so it’s not unusual to see a card like this rated to draw almost as much power as its full-fledged counterpart.

All-in-all, the GeForce RTX 2060 (6GB) is quite the interesting card, as the value-enthusiast segment tends to be more attuned to price and power consumption than the performance-enthusiast segment. Additionally, as a value-enthusiast card and potential upgrade option it will also need to perform well on a wide range of older and newer games – in other words, traditional rasterization performance rather than hybrid rendering performance.

As for evaluating the RTX 2060 itself, it remains unclear how to measure hybrid rendering performance in a generalizable way. DXR, which is tied to the Windows 10 October 2018 Update (1809), has only been rolled out fairly recently. 3DMark’s DXR benchmark, Port Royal, is due on January 8th, while Battlefield V is the sole title with realtime raytracing for the moment, and optimization efforts are ongoing, as seen in recent driver efforts. Meanwhile, it seems that some of Turing's other advanced shader features (Variable Rate Shading) are currently only available in Wolfenstein II.

Of course, RTX support for a number of titles has been announced and many are due this year, but there is no centralized resource to keep track of availability. It’s true that developers are ultimately responsible for this information and their games, but on the flipside, this has required very close cooperation between NVIDIA and developers for quite some time. In the end, RTX is a technology platform spearheaded by NVIDIA and inextricably linked to their hardware, so it is to the detriment of potential RTX 20 series owners that they are left to research and collate for themselves which current games can make use of the specialized hardware features they purchased.

| Planned NVIDIA Turing Feature Support for Games | |||||

| Game | Real Time Raytracing | Deep Learning Supersampling (DLSS) | Turing Advanced Shading | ||

| Anthem | Yes | ||||

| Ark: Survival Evolved | Yes | ||||

| Assetto Corsa Competizione | Yes | ||||

| Atomic Heart | Yes | Yes | |||

| Battlefield V | Yes (available) | Yes | |||

| Control | Yes | ||||

| Dauntless | Yes | ||||

| Darksiders III | Yes | ||||

| Deliver Us The Moon: Fortuna | Yes | ||||

| Enlisted | Yes | ||||

| Fear The Wolves | Yes | ||||

| Final Fantasy XV | Yes (available in standalone benchmark) | ||||

| Fractured Lands | Yes | ||||

| Hellblade: Senua's Sacrifice | Yes | ||||

| Hitman 2 | Yes | ||||

| In Death | Yes | ||||

| Islands of Nyne | Yes | ||||

| Justice | Yes | Yes | |||

| JX3 | Yes | Yes | |||

| KINETIK | Yes | ||||

| MechWarrior 5: Mercenaries | Yes | Yes | |||

| Metro Exodus | Yes | ||||

| Outpost Zero | Yes | ||||

| Overkill's The Walking Dead | Yes | ||||

| PlayerUnknown Battlegrounds | Yes | ||||

| ProjectDH | Yes | ||||

| Remnant: From the Ashes | Yes | ||||

| SCUM | Yes | ||||

| Serious Sam 4: Planet Badass | Yes | ||||

| Shadow of the Tomb Raider | Yes | ||||

| Stormdivers | Yes | ||||

| The Forge Arena | Yes | ||||

| We Happy Few | Yes | ||||

| Wolfenstein II | Yes, Variable Shading (available) | ||||

So the RTX 2060 (6GB) is in a better situation than the RTX 2070. With comparable GTX 10 series products either very low on stock (GTX 1080, GTX 1070) or at higher prices (GTX 1070 Ti), there’s less potential for sales cannibalization. And as Ryan mentioned in the AnandTech 2018 retrospective on GPUs, with leftover Pascal inventory due to the cryptocurrency bubble, there’s much less pressure to sell Turing GPUs at lower prices. So the RTX 2060 leaves the existing GTX 1060 6GB (1280 cores) and 3GB (1152 cores) with breathing room. That being said, $350 is far from the usual ‘mainstream’ price-point, and is even more expensive than the popular $329 enthusiast-class GTX 970.

Across the aisle, the recent Radeon RX 590 is in the mix, though its direct competition is the GTX 1060 6GB. Otherwise, the Radeon RX Vega 56 is likely the closer matchup in terms of performance. Even then, AMD and its partners are going to have little choice here: either they're going to have to drop prices to accommodate the introduction of the RTX 2060, or essentially wind down Vega sales.

Unfortunately we've not had the card in for testing as long as we would've liked, but regardless the RTX platform performance testing is in the same situation as during the RTX 2070 launch. Because the technology is still in the early days, we can’t accurately determine the performance suitability of RTX 2060 (6GB) as an entry point for the RTX platform. So the same caveats apply to gamers considering making the plunge.

Q1 2019 GPU Pricing Comparison

| AMD | Price | NVIDIA |
|---|---|---|
| Radeon RX Vega 56 | $499 | GeForce RTX 2070 |
| | $449 | GeForce GTX 1070 Ti |
| | $349 | GeForce RTX 2060 (6GB) |
| | $335 | GeForce GTX 1070 |
| Radeon RX 590 | $279 | |
| | $249 | GeForce GTX 1060 6GB (1280 cores) |
| Radeon RX 580 (8GB) | $200/$209 | GeForce GTX 1060 3GB (1152 cores) |

134 Comments


B3an - Monday, January 7, 2019 - link

More overpriced useless shit. These reviews are very rarely harsh enough on this kind of crap either, and i mean tech media in general. This shit isn't close to being acceptable.

PeachNCream - Monday, January 7, 2019 - link

Professionalism doesn't demand harshness. The charts and the pricing are reliable facts that speak for themselves and let a reader reach conclusions about the value proposition or the acceptability of the product as worthy of purchase. Since opinions between readers can differ significantly, its better to exercise restraint. These GPUs are given out as media samples for free and, if I'm not mistaken, other journalists have been denied pre-NDA-lift samples by blasting the company or the product. With GPU shortages all around and the need to have a day one release in order to get search engine placement that drives traffic, there is incentive to tenderfoot around criticism when possible.

CiccioB - Monday, January 7, 2019 - link

It all depends on what is your definition of "shit".

Shit may be something that for you costs too much (so shit is Porche, Lamborghini and Ferrari, but for some else, also Audi, BMW and Mercedes and for some one else also all C cars) or may be something that does not work as expected or under perform with respect to the resources it has.

So for someone else it may be shit a chip that with 230mm^2, 256GB/s of bandwidth and 240W perform like a chip that is 200mm^2, 192GB/s of bandwidth and uses half the power.

Or it may be a chip that with 480mm^2, 8GB of latest HBM technology and more than 250W perform just a bit better than a 314mm^2 chip with GDDR5X and that uses 120W less.

On each one its definition of "shit" and what should be bought to incentive real technological progress.

saiga6360 - Tuesday, January 8, 2019 - link

It's shit when your Porsche slows down when you turn on its fancy new features.

Retycint - Tuesday, January 8, 2019 - link

The new feature doesn't subtract from its normal functions though - there is still an appreciable performance increase despite the focus on RTS and whatnot. Plus, you can simply turn RTS off and use it like a normal GPU? I don't see the issue here

saiga6360 - Tuesday, January 8, 2019 - link

If you feel compelled to turn off the feature, then perhaps it is better to buy the alternative without it at a lower price. It comes down to how much the eye candy is worth to you at performance levels that you can get from a sub $200 card.

CiccioB - Tuesday, January 8, 2019 - link

It's shit when these fancy new features are kept back by the console market that has difficult at handling less than half the polygons that Pascal can, let alone the new Turing CPUs.

The problem is not the technology that is put at disposal, but it is the market that is held back by obsolete "standards".

saiga6360 - Tuesday, January 8, 2019 - link

You mean held back by economics? If Nvidia feels compelled to sell ray tracing in its infancy for thousands of dollars, what do you expect of console makers who are selling the hardware for a loss? Consoles sell games, and if the games are compelling without the massive polygons and ray tracing then the hardware limitations can be justified. Besides, this hardly can be said of modern consoles that can push some form of 4K gaming at 30fps of AAA games not even being sold on PC. Ray tracing is nice to look at but it hardly justifies the performance penalties at the price point.

CiccioB - Wednesday, January 9, 2019 - link

The same may be said for 4K: fancy to see but 4x the performance vs FulllHD is too much.

But as you can se, there are more and more people looking for 4K benchmarks to decide which card to buy.

I would trade better graphics vs resolution any day.

Raytraced films on bluray (so in FullHD) are way much better than any rasterized graphics at 4K.

The path for graphics quality has been traced. Bear with it.

saiga6360 - Wednesday, January 9, 2019 - link

4K vs ray tracing seems like an obvious choice to you but people vote with their money and right now, 4K is far less cost prohibitive for the eye-candy choice you can get. One company doing it alone will not solve this, especially at such cost vs performance. We got to 4K and adaptive sync because it is an affordable solution, it wasn't always but we are here now and ray tracing is still just a fancy gimmick too expensive for most. Like it or not, it will take AMD and Intel to get on board for ray tracing on hardware across platforms, but before that, a game that truly shows the benefits of ray tracing. Preferably one that doesn't suck.