The Samsung Galaxy Note7 (S820) Review

by Joshua Ho on August 16, 2016 9:00 AM EST

Display

Moving past the design, the next point of interest is the display, which is one of the major elements of any smartphone and pretty much the first thing you’re going to notice when you power on the phone. It’s easy to look at a display and provide some fluff about how the colors pop and how the high contrast leads to dark, inky blacks, but relying solely on subjective observation fails to capture the full extent of what a display is really like. If you put two displays side by side, you can tell that one is more visible outdoors, but there’s no way of telling by eye whether this is because of differences in display reflectance or display luminance. Other factors like gamut and gamma can also affect perceived visibility, which is the basis for technologies like Apical’s Assertive Display system.
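To make the reflectance-versus-luminance point concrete, here is a minimal sketch in Python. It assumes an idealized, purely diffuse (Lambertian) screen surface, which real cover glass is not, and every number in it (ambient illuminance, reflectance, peak luminance) is a hypothetical value chosen for illustration rather than a measurement from this review.

```python
import math


def reflected_luminance(ambient_lux: float, reflectance: float) -> float:
    """Luminance (nits) added by ambient light bouncing off the screen.

    Assumes an ideal diffuse reflector: L = E * R / pi.
    """
    return ambient_lux * reflectance / math.pi


def effective_contrast(white_nits: float, black_nits: float,
                       ambient_lux: float, reflectance: float) -> float:
    """Contrast ratio once reflected ambient light is added to white and black."""
    glare = reflected_luminance(ambient_lux, reflectance)
    return (white_nits + glare) / (black_nits + glare)


AMBIENT_LUX = 10_000.0  # hypothetical outdoor illuminance

# Two hypothetical AMOLED-style panels (true black, so black_nits = 0).
dim_low_glare = effective_contrast(400.0, 0.0, AMBIENT_LUX, reflectance=0.04)
bright_high_glare = effective_contrast(600.0, 0.0, AMBIENT_LUX, reflectance=0.06)

print(f"400 nits, 4% reflectance: {dim_low_glare:.2f}:1")
print(f"600 nits, 6% reflectance: {bright_high_glare:.2f}:1")
```

Under these assumptions the two hypothetical panels end up with the same effective contrast outdoors even though one is 50% brighter, which is why a side-by-side glance cannot separate the two factors and absolute measurements are needed.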

In order to separate out these various effects and reduce the need for relative testing, we use test equipment that measures absolute values, which allows us to draw conclusions about how well a display performs to a given specification. While in some cases more is generally better, this isn’t always true. Gamut and gamma are examples of this. Although from an engineering perspective the ability to display extremely wide color gamuts is a good thing, we’re faced with the issue of standards compliance. For the most part we aren’t creating content solely for our own consumption, so a display needs to accurately reproduce content as the content creator intended. On the content creation side, it’s hard to know how to edit a photo to be shared if you don’t know how it will actually look on other people’s hardware. This can lead to monetary costs as well if you print photos from your phone that look nothing like the on-device preview.

To test all relevant aspects of a mobile display, our current workflow uses X-Rite’s i1Display Pro for cases where contrast and luminance accuracy are important, and the i1Pro2 spectrophotometer for cases where color accuracy is the main metric of interest. To put this hardware to use, we rely on SpectraCal/Portrait Display’s CalMAN 5 Ultimate for its highly customizable UI and powerful workflow.

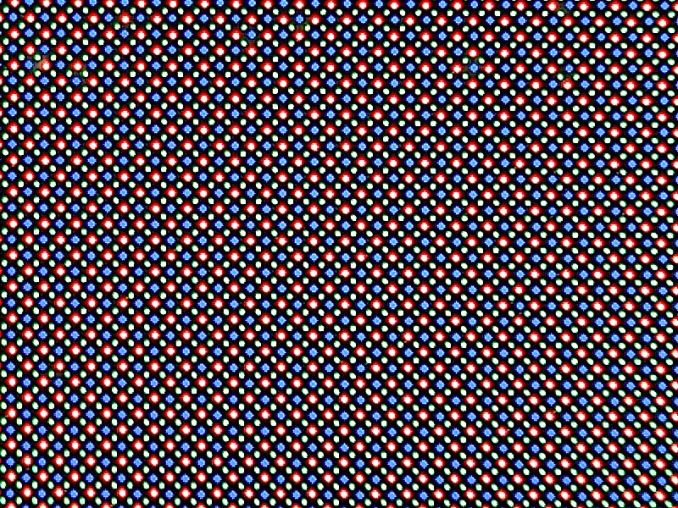

Before we get into the results, though, I want to discuss a few choice aspects of the Galaxy Note7’s display. At a high level this is a 5.7-inch 1440p Super AMOLED display made by Samsung, with a PenTile subpixel matrix that uses two subpixels per logical pixel in a diamond arrangement. The display driver supports panel self-refresh as a MIPI DSI command panel rather than a video panel. In the Snapdragon 820 variant of this device it looks like there isn’t a dynamic FPS system, and a two-lane system is used so the display is rendered in halves. The panel identifies itself as S6E3HA5_AMB567MK01, which I’ve never actually seen anywhere else, but if we take the leap of guessing that the first half is the DDIC, this uses a slightly newer revision of DDIC than the S6E3HA3 used in the Galaxy S7. I’m guessing this allows for the HDR mode that Samsung is advertising, but the panel is likely to be fairly comparable to the Galaxy Note5, given that the Galaxy S7 panel is fairly comparable to what we saw in the Galaxy S6.
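As a rough sanity check on what those panel specifications imply, the arithmetic can be sketched in Python. This assumes that “1440p” means a 2560x1440 grid and compares the PenTile layout against a hypothetical RGB-stripe panel of the same resolution with three subpixels per pixel; none of these figures are measured values.

```python
import math


def pixels_per_inch(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density from the resolution and the diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


# Assumed panel geometry: 2560x1440 on a 5.7-inch diagonal.
WIDTH_PX, HEIGHT_PX, DIAGONAL_IN = 2560, 1440, 5.7

ppi = pixels_per_inch(WIDTH_PX, HEIGHT_PX, DIAGONAL_IN)
logical_pixels = WIDTH_PX * HEIGHT_PX

# PenTile (diamond): two subpixels per logical pixel, as described above.
pentile_subpixels = logical_pixels * 2
# Hypothetical RGB-stripe panel at the same resolution: three per pixel.
rgb_stripe_subpixels = logical_pixels * 3

print(f"Logical pixel density: {ppi:.1f} PPI")
print(f"PenTile subpixels:     {pentile_subpixels:,}")
print(f"RGB-stripe subpixels:  {rgb_stripe_subpixels:,}")
```

The logical density works out to roughly 515 pixels per inch, while the PenTile layout carries two thirds of the subpixels an RGB-stripe panel would need at the same resolution.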

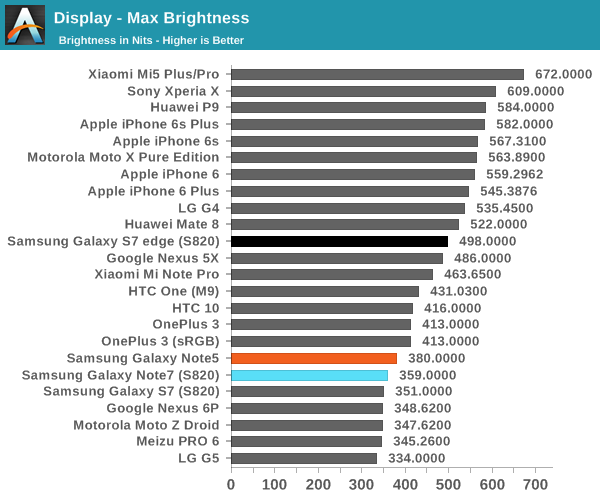

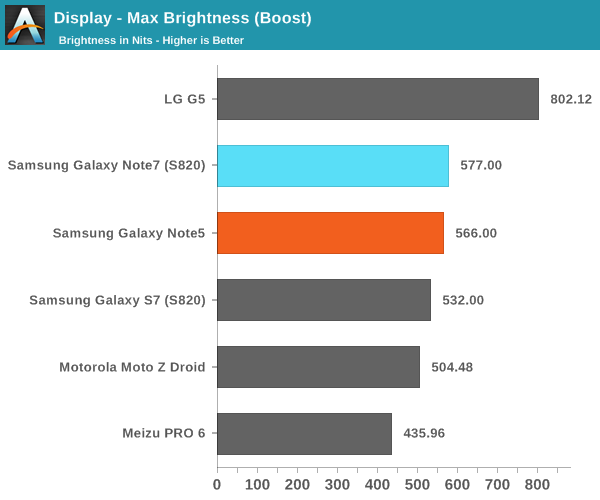

Looking at the brightness of the display, it’s pretty evident that the Galaxy Note7 has a bright panel, especially when compared to things like the HTC 10 and LG G5. The G5 does reach “800 nits” with its auto brightness boost, but its true steady state is nowhere near that point. The Galaxy Note7’s display, by contrast, can actually stay at its boost brightness for a reasonable amount of time, and I’ve never really noticed a case where the boost brightness couldn’t be sustained if the environment dictated it.

Before we get into the calibration of the display, it’s probably also worth discussing the viewing angles. As you might have guessed, PenTile and AMOLED both have noticeable effects on viewing angles, but in different ways. As AMOLED places light emitters closer to the surface of the glass and doesn’t have a liquid crystal array to affect light emission, contrast and luminance are maintained significantly better than on a traditional LCD. However, due to the use of PenTile, it is still very obvious that there is a lot of color shifting as viewing angles vary. There are still some interference effects when you vary viewing angles as well. In this regard, LCDs in phones like the iPhone 6s are still better. You could argue that one is more important than the other so I’d call this a wash, but AMOLED could stand to improve here.

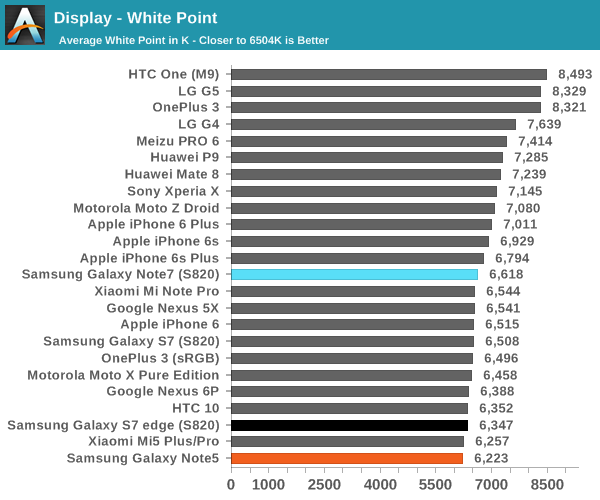

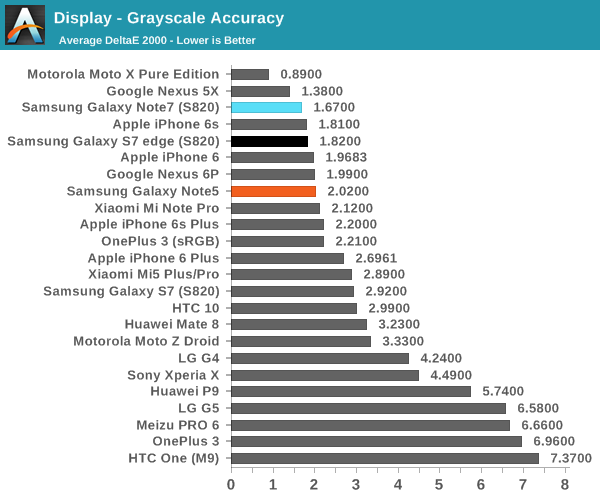

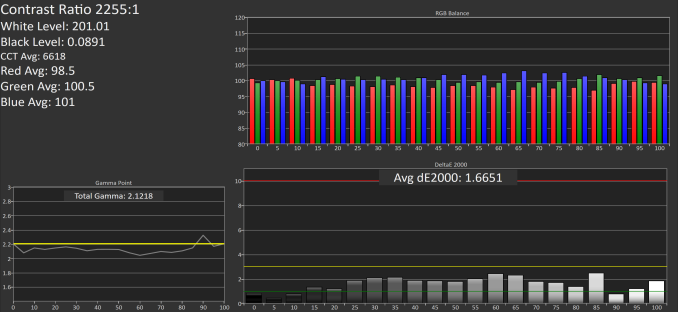

Moving on to grayscale and the other parts of the display calibration testing, it’s worth mentioning that all of these tests are done in Basic mode. This is the mode I would suggest using on these AMOLED devices in order to improve both calibration accuracy and battery life: brightness is generally controlled by PWM while hue is controlled by voltage, so constraining the gamut actually reduces the power draw of the display. Putting this comment aside, the grayscale calibration is really absurdly good here. Samsung could afford to slightly increase the target gamma from 2.1 to 2.2, but the difference is basically indistinguishable even if you had a perfectly calibrated monitor to compare to the Note7 we were sampled. Color temperature is also neutral, with none of the green push that often plagues Samsung AMOLEDs. There’s basically no room for improvement to discuss here, because the calibration is going to be almost impossible to distinguish from perfect.
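For readers curious how a gamma figure like 2.1 falls out of a grayscale sweep, the following Python sketch fits a simple power law to luminance readings. It assumes a black level of zero (reasonable for AMOLED, less so for LCD) and uses synthetic readings generated from a known gamma; the function name and the white luminance are hypothetical, and this is not necessarily how CalMAN computes its gamma values.

```python
import math


def estimate_gamma(levels, luminances, white_luminance):
    """Fit Y / Y_white = level ** gamma by least squares in log-log space.

    `levels` are normalized drive levels strictly between 0 and 1, and
    `luminances` are the matching readings in nits.
    """
    xs = [math.log(v) for v in levels]
    ys = [math.log(y / white_luminance) for y in luminances]
    # Regression through the origin: gamma = sum(x * y) / sum(x * x).
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)


WHITE_NITS = 350.0  # hypothetical 100% white reading

# Synthetic 10% to 90% grayscale sweep generated from a gamma of 2.1.
levels = [i / 10 for i in range(1, 10)]
readings = [WHITE_NITS * v ** 2.1 for v in levels]

fitted = estimate_gamma(levels, readings, WHITE_NITS)
print(f"Fitted gamma: {fitted:.3f}")

# Relative luminance of 50% gray under each target gamma.
mid_gray_21 = 0.5 ** 2.1
mid_gray_22 = 0.5 ** 2.2
print(f"50% gray at gamma 2.1: {mid_gray_21:.4f}")
print(f"50% gray at gamma 2.2: {mid_gray_22:.4f}")
```

At 50% gray the two targets differ by under two percentage points of relative luminance in this model, which is consistent with the point above that moving from 2.1 to 2.2 would be hard to see.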

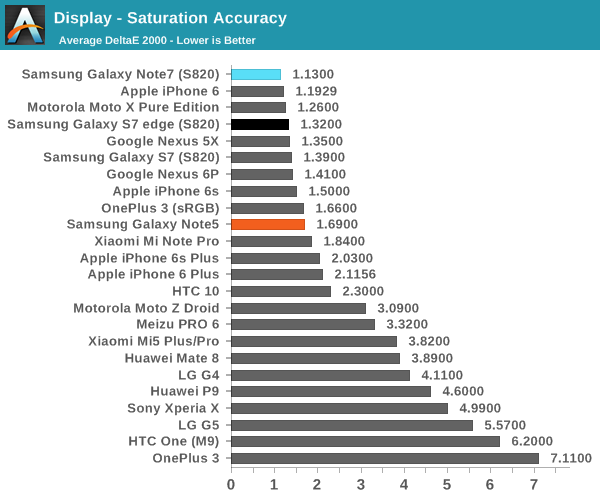

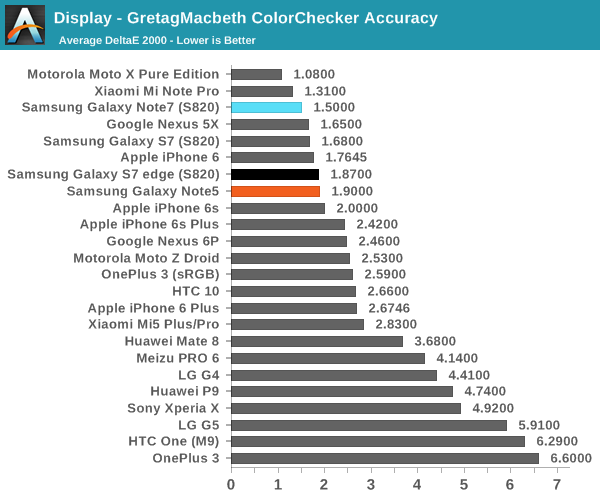

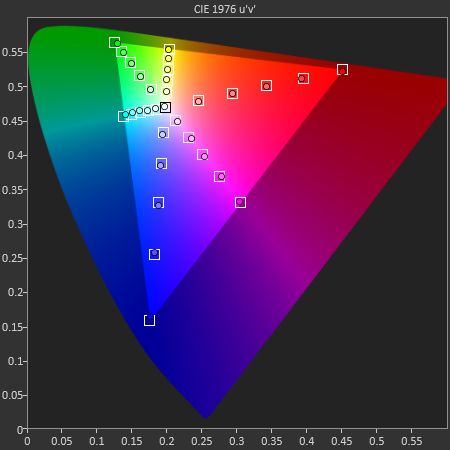

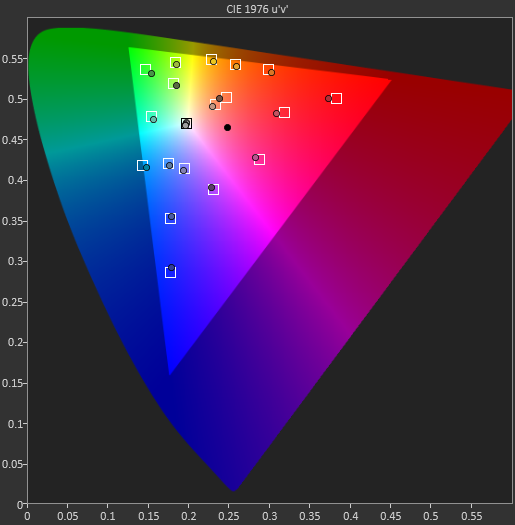

In the saturations test Samsung has again basically nailed the sRGB gamut, to the extent that it’s going to be basically impossible to distinguish it from a reference monitor. I really have nothing else to say because Samsung has no room to improve here. Of course, saturation sweeps are just one part of the whole story, so we can look at the GMB ColorChecker to see how well the Note7’s display can reproduce common hues.

In the GretagMacbeth ColorChecker test, a number of common tones including skin, sky, and foliage are represented, as well as other common colors. Again, Samsung is basically perfect here. They might need to push the saturation of reds slightly higher, but it’s basically impossible to tell this apart from a reference monitor. If you want to use your phone for photo editing, online shopping, watching videos, sharing photos, or pretty much anything where images are reproduced on more than one device, the Galaxy Note7 is going to be a great display. It may not be much of a step up from the Galaxy Note5, but at this point the only areas where Samsung really needs to improve are maximum brightness at realistic APLs above 50% and power efficiency. It would also be good to see wider color gamuts in general, but I suspect the value of such things is going to continue to be limited until Google and Microsoft actually make a serious effort at building color management into the OS. It might also make sense to try and improve color stability with changes in viewing angle, but I suspect that AMOLED faces greater constraints here relative to LCD due to the need to improve the aging characteristics of the display. Regardless, it’s truly amazing just how well Samsung can execute when they make something a priority.
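The error figures behind saturation and ColorChecker charts are color differences between a target color and the measured one. As a minimal sketch in Python, the block below converts an sRGB target into CIELAB and computes the simple CIE76 distance. Calibration software often reports the more involved CIEDE2000 formula, so CIE76 is swapped in here purely for brevity, and the “measured” Lab value is hypothetical rather than a reading from the Note7.

```python
import math

D65_WHITE = (0.95047, 1.0, 1.08883)  # reference white used by sRGB


def srgb_to_linear(c8: int) -> float:
    """Undo the sRGB transfer curve for one 8-bit channel."""
    c = c8 / 255.0
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def srgb_to_lab(r8: int, g8: int, b8: int):
    """Convert an 8-bit sRGB triple to CIELAB (D65 white point)."""
    r, g, b = (srgb_to_linear(c) for c in (r8, g8, b8))
    x = 0.4124 * r + 0.3576 * g + 0.1805 * b
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    z = 0.0193 * r + 0.1192 * g + 0.9505 * b

    def f(t: float) -> float:
        delta = 6 / 29
        return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29

    fx, fy, fz = (f(v / n) for v, n in zip((x, y, z), D65_WHITE))
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def delta_e_76(lab_a, lab_b) -> float:
    """CIE76 color difference: Euclidean distance in CIELAB."""
    return math.dist(lab_a, lab_b)


target = srgb_to_lab(255, 0, 0)  # fully saturated sRGB red
# Hypothetical instrument reading, slightly off the target.
measured = (target[0] - 1.0, target[1] - 2.0, target[2] + 2.0)

print(f"Target Lab:   {tuple(round(v, 2) for v in target)}")
print(f"Delta E (76): {delta_e_76(target, measured):.2f}")
```

The smaller this distance is across the whole set of test patches, the closer the panel tracks its target, which is the sense in which the results above are hard to tell apart from a reference monitor.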

202 Comments

name99 - Friday, August 19, 2016 - link

For crying out loud. Read the damn comments to that article.

Bottom line is it doesn't prove what you and Andrei seem to think it proves.

CloudWiz - Sunday, August 21, 2016 - link

Simply because the scheduler is able to schedule a workload across multiple threads does not mean it is taking "full advantage of 4 or more cores". Browser performance is still heavily single-threaded whether you like it or not. Read the comments on Andrei's article.

I'm going by Anandtech results here, and the E7420 suffers incredibly on battery life when on LTE compared to Wifi. Without results for the E8890 I can't say for sure, but with not even Qualcomm having found a way to get LTE battery life to even EQUAL Wifi (sure they're getting close, but there's still a small delta) I severely doubt that Samsung can do it, given their E7420 difficulties. Also, personal experience is highly unreliable and unless you have some time-lapse video to prove it, I won't believe you when all the data available says otherwise.

Sure Safari is more efficient. (It's also far more performant, but that's another story.) And yes I will concede that the 6s renders at a fairly low resolution. However you must keep in mind that the 6s Plus actually renders at 2208 x 1242 which is 75% the pixels of 1440p, much closer than you would think. And render resolution on GS6/7 might not even be the display resolution - most Android apps don't bother to render at such a high resolution because most phones don't have 1440p displays. And given screen technologies in 2016, if Apple switched to 1440p LCD I doubt there would be a 2 hour+ impact to battery life. Phones like the HTC 10 with 1440p LCD displays with the same chip as the GS7 can achieve better battery life than it with the same size battery. This is not only a testament to S820's inefficiencies but also Samsung's implementation inefficiencies. And no, the GS6/7 are not able to "keep up". I've already stated how the GS6 absolutely cannot compare with the 6s or 6s Plus, and even with the GS7 the S820 version barely edges out the 6s with a battery nearly twice the size, with even the more optimized E8890 version being unable to top the 6s Plus with a larger battery. These differences can't be attributed solely to browser inefficiencies or screen densities. Samsung's implementations are simply not efficient compared to Apple or even HTC with their S820 implementation and Huawei with their Kirin 950.

jlabelle2 - Monday, August 22, 2016 - link

- if Apple switched to 1440p LCD I doubt there would be a 2 hour+ impact to battery life

How do you explain then the huge drop of battery life between the MacBook and the MacBook Air?

- And no, the GS6/7 are not able to "keep up". I've already stated how the GS6 absolutely cannot compare with the 6s or 6s Plu

The iPhone 6S did not exist when the GS6 was released. The S6 had (slightly) better battery life than the iPhone 6, despite a screen bigger, with 3 times more pixels to push.

The facts do not back up your claims.

CloudWiz - Thursday, August 25, 2016 - link

You have to consider what you're comparing here. On one side you have smartphones which have screens not even 6 inches diagonally. On the other side you have full-blown computers which have screens more than twice the size diagonally. There's a reason most computers haven't moved far past the 1440p-1600p mark. The screens are so big that the power consumption gets unreasonable. The subpixels on computers have to be much larger than on a phone. A 1440p LCD on the HTC 10, for example, will not consume the same power as a 1440p Macbook screen. In fact, it consumes far less, allowing the phone to have basically the same web browsing battery life with a much smaller battery. In addition, the Macbook has a 25% smaller battery compared to the Air, further giving it the disadvantage.

I stated that the 6s and 6s Plus destroy the GS6, correct? I never mentioned the 6 at all. Don't try to twist my words. The facts do indeed back up my claims and if you can't see that maybe you should take a look at those charts again. I do concede that the 6 series were terrible phones all around (terrible SoC, terrible display, terrible modem, terrible design, terrible Touch ID) but with the 6s Apple fixed almost all of these issues.

Finally, the S6 actually had worse battery life than the 6 in web browsing and in gaming (taking into account frame rates). What are you basing your (non-existent) claims off of?

sonicmerlin - Sunday, August 28, 2016 - link

You're hugely exaggerating the iPhone 6's deficiencies. Touch ID was a bit slow but it was far more accurate than any other fingerprint scanner implemented on a mobile device up to that point.

The design was the exact same as the 6S. The modem had more LTE bands than had ever been integrated into a single device. The screen wasn't vastly different than the 6S screen either. And the SoC had great single core performance. The battery even lasted longer than the 6S.

lilmoe - Saturday, August 27, 2016 - link

Please don't be offended, but I cannot take you, or any other biased user, seriously when you're claiming that someone's argument is unreliable, then go on and prove the opposite using the same (and/or worse) approach they did. Yes, I've read the comments on that article (all of them actually), and contributed/replied to lots.

-- Simply because the scheduler is able to schedule a workload across multiple threads does not mean it is taking "full advantage of 4 or more cores"

A good example of what I mean above. Your statement may (and I say: may) be correct if there WASN'T any DIRECTLY related data to prove it wrong. When 4 or more cores are ramping up (and actually computing data) during app loading and scrolling (including browsers, particularly Samsung's browser), then it sure as hell means that these apps (all the ones tested actually) ARE taking advantage of the extra cores. Unless the scheduler is "mysteriously" loading the cores with bogus compute loads, that is (enter appropriate meme here). Multi-core workloads ARE the future, Apple is sure to follow. It's taking a LONG while, but it's coming. We've also pointed out that there is LOTS of overhead in Android that needs work, and that most certainly won't be remedied with larger, faster big cores. On the contrary it would be worse for efficiency.

"I'm going by Anandtech results here"

AHHHHHHH, right there is the caveat, my dear commentator. If YOU had actually read the comment section, you'd know just how much we're complaining about the inconsistency of Anandtech's charts. You never get the same phones/models, consistently, on a series of comparisons; you get the GS7 (Exynos) VS iPhone 6S+ on one, then the GS7e (S820) VS iPhone 6/6S (not the plus) on the other, considering both iPhone models are NOT the same phone, with different resolutions and different screen size/resolution (AND different process nodes among even the same models). It's relatively safe to claim, at this point, that those inconsistencies are intentional, while Anandtech's "excuse" is that not all phones are at the same "lab" at the same time. Selective results at its best. There's absolutely no effort in extracting external factors and testing HARDWARE for what it is. One could argue that the end user is getting a package as a whole, but that's also inconsistent with Anandtech's past and present testing methodology, where at times they claim that they're testing hardware, and times you get a review largely clouded by "personal opinion" like ^this one and the one before. If you really are reading the comment section, you'd see us mention that this review is personal opinion, and adds nothing to what's being said and shown online already. We can deduct the outcome, but we want to know the REASON. Anandtech fails to deliver.

Back to the argument about radios. You haven't tested the devices yourself. You haven't used any as your daily driver. I have. Anandtech's results, charts and whatnot don't only contradict my findings (and many others) in one department, but MOST. It's safe to assume that their testing methodology is flawed, seriously flawed. They absolutely do NOT take into consideration any real world usage, NOR do they completely isolate external factors to test hardware, NOR is there any sort of deep dive or tweaking to justify their claims. For WiFi, they cherry picked the least common scenario to fault the device and they didn't show any performance/reliability data for more common workloads.

I own a Galaxy S7 Edge (Exynos), since day 1. I actually go out and know lots of people, for business and leisure. My family, friends and clients own iPhones (various generations, mostly latest), Galaxies, HTCs, LGs and Huaweis. Guess who has the best reception. Guess who has better and more reliable WiFi. Guess who has the better rear camera (front camera is almost a wash now after updates, and sometimes better. It was worse at launch), BATTERY LIFE, SCREEN, features, etc, etc, etc... This isn't limited to this generation of Galaxy S/Notes, it's been this way since the S4/Note 2 (aesthetics aside). The only drawback on current generation Galaxies is reception COMPARED to previous plastic built Galaxies; they're still BETTER than the competition.

Again, what are we comparing here? Actual hardware? Or real world usage? Performance consistency? I don't know at this point. Anandtech reviews are anything but consistent, and it is nowhere near as easy for an objective user to extract the true result from between the lines. They weren't perfect before, but it was easy to deduce the facts from the claims. Now, you get a deep dive of why X is better than Y, but then you get a "statement" of why Z is better than X without any sort of rational reasoning (sugar coated with "personal taste").

Did you read the camera review? What was Josh comparing there? If it's about post processing, then Samsung isn't worried about his "taste", what they're worried about is what MOST OF THEIR USERS like and want. Their USERS want colorful, vivid and more USABLE images, not a blurry mess. Nothing beats Samsung's camera there, especially with the new focusing system. Again, what's being compared here? Sensor quality or his own taste in post processing? If it's sensor quality, I haven't seen a side by side comparison of RAW photos using the same settings, have you??

-- Sure Safari is more efficient.

Let's stop there then, because we agree. At this point, software optimization can (and does) completely shadow any "potential" inefficiencies in radios. Everyone agrees that Chrome isn't the best optimized browser for ANY platform. Safari has built-in ad blocking, Chrome doesn't. Samsung's browser supports ad-blocking, but Anandtech didn't bother making the comparisons' more apples to apples. They claimed that it performed worse or the same as Chrome. ***BS***. Samsung's Game-Tuner enables the user to run Samsung's browser (or any other app, not just games) in 1080p and even 720p modes, but again, Anandtech didn't bother. I sure as hell noticed a SIGNIFICANT increase in render performance, battery consumption, and lower clock speeds when lowering the resolution (I run my apps and browsers in 1080p mode exclusively, and my games at 720p with no apparent visual difference, but with HUGE performance benefits).

Other than the FACT that these phones are NOT running the same software (OS, apps/games, even if they were the same "titles"), Javascript benchmarks, in particular, are an absolute mind-F***. You get VASTLY different results with different browsers on the same platform, and different results using the same browser on different platforms. Any sort of software optimization can drastically change the results more so than any difference in IPC or clock speed. Any reviewer (or computer scientist, for that matter) worth their weight would never, NEVER, claim that a freagin' CPU is faster based on browser benchmarks UNLESS those CPUs were running the SAME VERSION browser, on the SAME platform, using the SAME settings, AND the SAME OS. Anandtech, among others, are "mysteriously" refusing to bring this point to light, and instead chose to fool the minds of their audiences with deliberate false assumptions. Most commentators know this, so how do you expect me, and others, to take you seriously. With that said, javascript (at this point) is the least deciding factor of browser performance (especially on mobile).

-- This is not only a testament to S820's inefficiencies but also Samsung's implementation inefficiencies

How so? Where's the log data to back this up? Where's the deep dive? Where, in Samsung's software, is the reason for that? How can it be fixed?

This community is infested with false claims, inconsistent results, and bad methods of testing. Youtube is littered with "speedtests" and "RAM management" tests that have no evident value in everyday user experience, and FAIL to exclude external factors like router-scheduling and Google Play Services. No one runs and shuffles 10s of apps and 5 games at the same time.

I'm a regular commentator here, and I've bashed Samsung more than praising them on various subjects. I'm the first to point out the shortcomings of their tradeoffs. But I also know that these shortcomings are far, FAR less user-intrusive for the majority of consumers.

lilmoe - Tuesday, August 16, 2016 - link

"The GT7600 was only beaten in GFXBench this year by the Adreno 530 and surpasses both Adreno 530 and the T880MP12 in Basemark (it also has equal performance to the T880 in Manhattan). You make it sound like the GT7600 is multiple generations behind while it is not. It absolutely crushes the Adreno 430 and the T760MP8 in the Exynos 7420. The GX6450 in the A8 was underpowered but the GT7600 is not."

You mean better drivers, right? You mean a better optimized benchmark for a particular GPU on a particular platform, VS the "same" benchmark not optimized for any particular GPU on another platform...

Even with that handicap, the GS6/7 still manage longer battery life playing games. Amazing right?

CloudWiz - Sunday, August 21, 2016 - link

Whether or not a phone has "better drivers" or an "optimized benchmark" doesn't matter. Sure I can let Basemark go if you so wish, but GFXBench is cross-platform and not optimized (so far as I know) for either iOS or Android. The fact is that offscreen performance is very similar between all devices, and that your statement about the GT7600 'long being surpassed' is false. It's been half a generation since it's been surpassed, and I have no doubts that whatever goes in the A10 will again reclaim the GPU performance crown for Apple. And then Qualcomm/Samsung will pass it again next year - that's how technology works. But the fact that GT7600 is so close to Adreno 530 and T880MP12 despite being half a year older and on an inferior process is a testament to Apple and PowerVR. You couldn't say the same for GX6450 or even G6430.

Also, have fun playing a game for 4 hours at 10 fps when it'd be far more enjoyable to play it for 2 hours at 30+ fps.

lilmoe - Saturday, August 27, 2016 - link

What are we comparing here? Unused performance or efficiency as a whole? What matters in gaming desktops isn't the same for mobile devices (Smartphones). Some benchmarks are comparing Metal to OpenGL ES 3.1 when they should be comparing a lower level API to its competitor, ie: Vulkan.

Mali GPUs have far surpassed PowerVR in efficiency, for a while. You can actually measure that in both benchmarks (battery portions) and real life gaming.

-- Also, have fun playing a game for 4 hours at 10 fps when it'd be far more enjoyable to play it for 2 hours at 30+ fps.

Part of the reason why I can't take you seriously (again, no offense). What game exactly runs at 10fps even at full resolution? I haven't seen any. But it's also good that you do acknowledge that Apple's implementation isn't exactly the most power efficient.

That being said, I run all my games at 720p (just like the iPhone) using Samsung's game tuner app, and not only do they run faster now, but the battery life (which was class leading at full res) is even better. Complaining that Samsung has larger batteries is like complaining that Apple has larger/wider cores. Because, again, what are we comparing here? Hardware? Or user experience??? It's not clear at this point, but the GS7 wins on both accounts at this particular workload.

Game-tuner (and the latest resolution controls in the Note7) has completely diminished my concerns with the resolution race. It doesn't matter to me anymore. They can go 4K (or complete waco 8K) for all I care, as long as I can lower the resolution. I'm baffled how there isn't a complete section about this app/feature (and Samsung's underlying software to enable it). I also bring this up because you get a benchmark tuned-down to 1080p (on my GS7 at least) results more FPS than the 1080p "offscreen" test for "some" reason. After seeing this, I'm even more conservative about these benchmarks.....

jlabelle2 - Monday, August 22, 2016 - link

- the modem on the S6 makes it last a ridiculously short amount of time on LTE and even on Wifi the 6s lasts half an hour longer

Do you realize when you wrote that, that the iPhone has a ... Qualcomm modem ?

There is nothing magic about iPhone hardware that people try to make you believe.

It is crazy when you realize that the Note 7, with a bigger screen, with 50% more pixels, can still browse on LTE longer than the iPhone 6S Plus, while being significantly smaller.